Testing SATA Express And Why We Need Faster SSDs

by Kristian Vättö on March 13, 2014 7:00 AM EST

Posted in: Storage, SSDs, Asus, SATA, SATA Express

During the hard drive era, the Serial ATA International Organization (SATA-IO) had no problems keeping up with the bandwidth requirements. The performance increases that new hard drives provided were always quite moderate because ultimately the speed of the hard drive was limited by its platter density and spindle speed. Given that increasing the spindle speed wasn't really a viable option for mainstream drives due to power and noise issues, increasing the platter density was left as the only source of performance improvement. Increasing density is always a tough job and it's rare that we see any sudden breakthroughs, which is why density increases have only given us small speed bumps every once in a while. Even most of today's hard drives can't fully saturate the SATA 1.5Gbps link, so it's obvious that the SATA-IO didn't have much to worry about. However, that all changed when SSDs stepped into the game.

SSDs no longer relied on rotational media for storage but used NAND, a form of non-volatile storage, instead. With NAND the performance was no longer dictated by the laws of rotational physics because we were dealing with all solid-state storage, which introduced dramatically lower latencies and opened the door for much higher throughputs, putting pressure on SATA-IO to increase the interface bandwidth. To illustrate how fast NAND really is, let's do a little calculation.

It takes 115 microseconds to read 16KB (one page) from IMFT's 20nm 128Gbit NAND. That works out to roughly 140MB/s of throughput per die. A 256GB SSD would have sixteen of these dies, which works out to over 2.2GB/s, or about four times the maximum bandwidth of SATA 6Gbps. This is all theoretical, of course: it's one thing to dump data into a register, but transferring it over an interface requires more work. However, the NAND interfaces have also caught up in the last couple of years and we are now looking at up to 400MB/s per channel (both ONFI 3.x and Toggle-Mode 2.0). With most client platforms being 8-channel designs, the potential NAND-to-controller bandwidth is up to 3.2GB/s, meaning it's no longer a bottleneck.
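The arithmetic above can be reproduced with a short sketch. The page size, read time, die count, and per-channel speed come from the text; the 600MB/s ceiling for SATA 6Gbps is an assumption (the 6Gbps line rate after 8b/10b encoding), and the function names are purely illustrative.

```python
# Back-of-the-envelope NAND throughput using the figures quoted in the text.
PAGE_SIZE_BYTES = 16 * 1024   # one 16KB page
PAGE_READ_TIME_S = 115e-6     # 115 microseconds to read one page
DIES_IN_256GB_SSD = 16        # sixteen 128Gbit dies make up 256GB
CHANNEL_MBPS = 400            # ONFI 3.x / Toggle-Mode 2.0, per channel
CHANNELS = 8                  # typical client controller design
SATA_6G_MAX_MBPS = 600        # assumption: 6Gbps minus 8b/10b overhead


def per_die_mbps():
    """Read throughput of a single die in MB/s (1MB = 10^6 bytes)."""
    return PAGE_SIZE_BYTES / PAGE_READ_TIME_S / 1e6


def all_dies_mbps():
    """Aggregate read throughput if every die is read in parallel."""
    return per_die_mbps() * DIES_IN_256GB_SSD


def nand_interface_mbps():
    """Peak NAND-to-controller bandwidth across all channels."""
    return CHANNEL_MBPS * CHANNELS


if __name__ == "__main__":
    print(f"Per die:        {per_die_mbps():.0f} MB/s")
    print(f"Sixteen dies:   {all_dies_mbps():.0f} MB/s")
    print(f"vs SATA 6Gbps:  {all_dies_mbps() / SATA_6G_MAX_MBPS:.1f}x")
    print(f"NAND interface: {nand_interface_mbps()} MB/s")
```

The per-die figure lands slightly above the rounded 140MB/s quoted in the text, and the sixteen-die total comes out a little under four times the assumed SATA ceiling.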

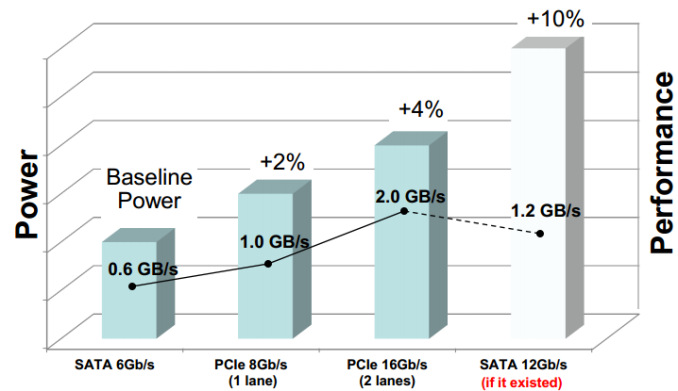

Given the speed of NAND, it's not a surprise that the SATA interface quickly became a bottleneck. When Intel finally integrated SATA 6Gbps into its chipsets in early 2011, SandForce immediately came out with its SF-2000 series controllers and said, "Hey, we are already maxing out SATA 6Gbps; give us something faster!" The SATA-IO went back to the drawing board and realized that upping the SATA interface to 12Gbps would require several years of development and the cost of such rapid development would end up being very high. Another major issue was power; increasing the SATA protocol to 12Gbps would have meant a noticeable increase in power consumption, which is never good.

Therefore the SATA-IO had to look elsewhere in order to provide a fast yet cost-efficient standard in a timely manner. Given these restrictions, it was best to look at already existing interfaces, more specifically PCI Express, to shorten the time to market as well as cut costs.

| | Serial ATA 2.0 | Serial ATA 3.0 | PCI Express 2.0 | PCI Express 3.0 |
| --- | --- | --- | --- | --- |
| Link Speed | 3Gbps | 6Gbps | 8Gbps (x2), 16Gbps (x4) | 16Gbps (x2), 32Gbps (x4) |
| Effective Data Rate | ~275MBps | ~560MBps | ~780MBps (x2), ~1560MBps (x4) | ~1560MBps (x2), ~3120MBps (x4) (?) |

PCI Express makes a ton of sense. It's already integrated into all major platforms and, thanks to its scalability, it offers room for future bandwidth increases when needed. In fact, PCIe is already widely used in the high-end enterprise SSD market because the SATA/SAS interface was never enough to satisfy enterprise performance needs in the first place.

Even a PCIe 2.0 x2 link offers about a 40% increase in maximum throughput over SATA 6Gbps. Like most interfaces, PCIe 2.0 isn't 100% efficient; based on our internal tests the bandwidth efficiency is around 78-79%, so in the real world you should expect to get ~780MB/s out of a PCIe 2.0 x2 link. Remember, though, that SATA 6Gbps isn't 100% efficient either (around 515MB/s is the typical maximum we see). The currently available PCIe SSD controller designs are all 2.0 based, but we should start to see some PCIe 3.0 drives next year. We don't have efficiency numbers for 3.0 yet, but I would expect to see nearly twice the bandwidth of 2.0, making 1GB/s and above the norm.
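As a rough sketch of where the ~780MB/s figure comes from: the 78% efficiency and the ~560MB/s SATA number are taken from this article, while the 5GT/s per-lane rate and 8b/10b encoding for PCIe 2.0 are assumptions drawn from the PCIe specification rather than from the text. The function names are illustrative only.

```python
# Estimate usable PCIe 2.0 bandwidth from the link rate and measured efficiency.
PCIE2_GT_PER_S = 5.0          # assumption: PCIe 2.0 signals at 5GT/s per lane
ENC_PAYLOAD_BITS = 8          # assumption: 8b/10b encoding carries 8 data bits
ENC_TOTAL_BITS = 10           # ...in every 10 bits on the wire
MEASURED_EFFICIENCY = 0.78    # lower end of the 78-79% quoted in the text
SATA_6G_EFFECTIVE_MBPS = 560  # effective SATA 6Gbps rate from the table


def payload_mbps(lanes):
    """Post-encoding link bandwidth in MB/s, before protocol overhead."""
    bits_per_s = PCIE2_GT_PER_S * 1e9 * lanes * ENC_PAYLOAD_BITS / ENC_TOTAL_BITS
    return bits_per_s / 8 / 1e6


def effective_mbps(lanes, efficiency=MEASURED_EFFICIENCY):
    """Real-world estimate after applying the measured efficiency."""
    return payload_mbps(lanes) * efficiency


def gain_over_sata(lanes):
    """Fractional throughput increase over the effective SATA 6Gbps rate."""
    return effective_mbps(lanes) / SATA_6G_EFFECTIVE_MBPS - 1


if __name__ == "__main__":
    for lanes in (2, 4):
        print(f"PCIe 2.0 x{lanes}: {payload_mbps(lanes):.0f} MB/s link, "
              f"~{effective_mbps(lanes):.0f} MB/s effective, "
              f"{gain_over_sata(lanes):+.0%} vs SATA 6Gbps")
```

Under these assumptions the x2 payload rate of 1000MB/s corresponds to the 8Gbps link speed in the table, and applying 78% efficiency gives the ~780MB/s (x2) and ~1560MB/s (x4) entries. Comparing against the 515MB/s observed SATA maximum instead of 560MB/s would make the gain look larger than 40%.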

But what exactly is SATA Express? Hop on to the next page to read more!

131 Comments


R0H1T - Thursday, March 13, 2014 - link

"This is actually the same motherboard as our 2014 SSD testbed but with added SATAe functionality."Does this mean you're going to test next gen SSD's with this(SATAe) & if so perhaps anytime during the current 2014 calendar year?

ddriver - Thursday, March 13, 2014 - link

So why not use 2 lane PCIE for the SSD instead - it does look like it uses less power and has higher bandwidth than SATAE?DanNeely - Thursday, March 13, 2014 - link

Mini ITX with a discrete GPU (or any other card) or mATX with dual GPU setups either don't have anywhere to put a PCIe SSD or don't have anywhere good to put one.SirKnobsworth - Saturday, March 15, 2014 - link

That's what M.2 is for.Bigman397 - Friday, April 4, 2014 - link

Which is a much better solution than retrofitting controllers and protocols meant for rotational media.Kristian Vättö - Thursday, March 13, 2014 - link

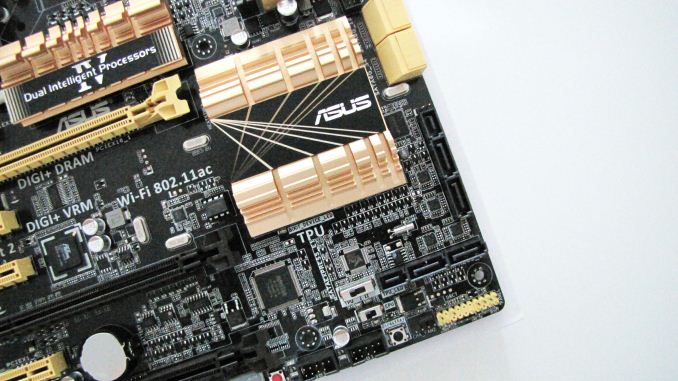

The motherboard in our 2014 testbed is the normal Z87 Deluxe without SATAe. There aren't any official SATAe products yet so we're not sure how we'll test those but the ASUS board is certainly an option.MrPoletski - Thursday, March 13, 2014 - link

I wonder what ridiculous speed SSD's we are going to start seeing with this tech. Quite exciting really.nathanddrews - Friday, March 14, 2014 - link

The Future!http://www.tomsitpro.com/articles/intel-silicon-ph...

thevoiceofreason - Thursday, March 13, 2014 - link

"because after all we are using cabling that should add latency"Why would you assume that?

DiHydro - Thursday, March 13, 2014 - link

When talking about one nanosecond signals, a charge will travel approximately 30 cm or 1 foot. If you add length onto a signal path, it will delay your transmission speed.