Imagination's PowerVR Rogue Architecture Explored

by Ryan Smith on February 24, 2014 3:00 AM EST

Posted in: GPUs, Imagination Technologies, PowerVR, PowerVR Series6, SoCs

When it comes to our coverage of SoCs, one aspect we’ve been trying to improve on for some time now is our coverage and understanding of the GPU portion of those SoCs. In the PC space we’re fortunate that there are just three major players – Intel, NVIDIA, and AMD – and that all three of them have over the years learned how to become very open and forthcoming about their GPU architectures. As a result we’ve had a level of access that has allowed us to better understand PC GPUs in a way that in earlier times simply wasn’t possible.

In the SoC space however we haven’t been so fortunate. Our understanding of most SoC GPU architectures has not been nearly as deep due to the fact that SoC GPU designers have been less willing to come forward with public details about their architectures and how those architectures have evolved over the years. And this has been for what’s arguably a good reason – unlike the PC GPU space, where only 2 of the 3 players compete in either the iGPU or dGPU markets, in the SoC GPU space there are no fewer than 7 players, all of whom are competing in one manner or another: NVIDIA, Imagination Technologies, Intel, ARM, Qualcomm, Broadcom, and Vivante.

Some of these players use their designs internally while others license out their designs as IP for inclusion in 3rd party SoCs, but all these players are in a much more competitive market that is in a younger place in its life. All the while SoC GPU development still happens at a relatively quick pace (by GPU standards), leading to similarly quick turnarounds between GPU generations as GPU complexity has not yet stretched out development to a 3-4 year process. As a result of SoC GPUs still being a young and highly competitive market, it’s a foregone conclusion that there is still a period of consolidation ahead of us – not unlike what has happened to SoC integrators such as TI – which provides all the more reason for SoC GPU players to be conservative about providing public details about their architectures.

With that said, over the years we have made some progress in getting access to the technical details, due in large part to the existing openness policies of NVIDIA and Intel. Nevertheless, because these are two of the smaller players in the mobile GPU space, this still leaves us with few details on the architectures behind the majority of SoC GPUs. We still want more.

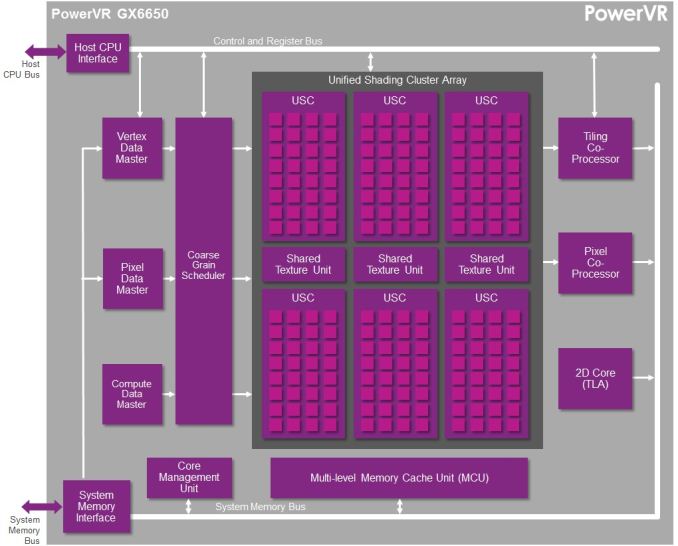

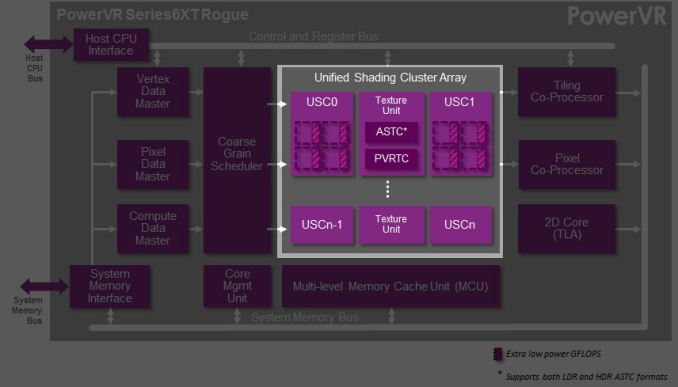

This brings us to today. In what should prove to be an extremely eventful and important day for our coverage and understanding of SoC GPUs, we’d like to welcome Imagination Technologies to the “open architecture” table. Imagination has chosen to share more details about the inner workings of their Rogue Series 6 and Series 6XT architectures, thereby giving us our first in-depth look at the architecture that’s powering a number of high-end products (not the least of which is all of Apple’s current-gen products) and that is descended from some of the most widely used SoC GPU designs of all time.

Now Imagination is not going to be sharing everything with us today. The bulk of the details Imagination is making available relate to their Unified Shading Cluster (USC) shading block, the heart of the Series 6/6XT GPUs. They aren’t discussing other aspects of their designs such as their geometry processors, cache structure, or Tile Based Deferred Rendering system – the company’s secret sauce and most potent weapon for SoC efficiency – but hopefully one day we’ll get there. In the meantime we will have our hands full just taking our first look at the Series 6/6XT USCs.

Finally, before we begin we’d like to thank Imagination for giving us this opportunity to evaluate their architecture in such great detail. We’ve been pushing for this for quite some time, so we’re pleased that this is coming to pass.

Imagination is publishing a pair of blogs and pseudo whitepapers on their website today: “Graphics cores: trying to compare apples to apples” and “PowerVR GX6650: redefining performance in mobile with 192 cores”. Along with this they have also been answering many of our deepest technical questions, so we should have a good handle on the Rogue USC. So with that in mind, let’s dive in.

95 Comments


jjj - Monday, February 24, 2014 - link

Far from ideal timing with so many news around, guess i'll have to read it after MWC.

rpg1966 - Monday, February 24, 2014 - link

Thank you for sharing.

Mondozai - Monday, February 24, 2014 - link

The most important part is what Anand highlighted from this walkthrough; namely that the Rogue series has a chip that is on equal balance if not even stronger than Tegra K1.

Poor Nvidia.

Sabresiberian - Monday, February 24, 2014 - link

That remains to be seen - but it does appear to be competitive at this point. We also don't know what the entire Denver+K1 package will do.

What kind of surprises me about K1 though is that Maxwell has already been released for the desktop. I would think "M1" (to guess at a name) would be the architecture to build on in the next year.

dragonsqrrl - Monday, February 24, 2014 - link

Uhhh no it doesn't. The article failed to mention this important piece of information, but you realize the 6XT series probably won't come to market before 2015 right? Likely 2H 2015 according to an earlier article published here on Anandtech.

grahaman27 - Monday, February 24, 2014 - link

Interesting. Which article mentioned that? Would you mind linking me to it?

I can't find an expected release date for this chip anywhere.

dragonsqrrl - Tuesday, February 25, 2014 - link

That's because there is none. It was an estimate given by Ryan Smith based on prior Imagination GPU announcements, and the time it usually takes chipmakers to integrate the design and bring a device to market.

"Finally, while Imagination doesn’t provide a timeframe for consumer availability (since they only sell designs to chipmakers), based on the amount of time needed to integrate these designs into new products and then get those products in the hands of consumers, we should be looking at a timetable similar to the original Series6 designs. In which case Series6XT equipped SoCs would start appearing in 2015, likely in the latter half."

http://www.anandtech.com/show/7629/imagination-tec...

michael2k - Tuesday, February 25, 2014 - link

Really? You don't think their biggest customer, Apple, which has shown the ability to beat an entire industry to market by almost a year (64 bit ARMv8) and one of the first PVR6 customers to market as well? Anand thought it would be 2014 when PVR6 would show up to market, and the A9600 that was supposed to show up in 2013 never did (Apple's A7 did though!)

So why do you rule out the real possibility that the Apple A8 would ship with a 6XT this year?

dragonsqrrl - Tuesday, February 25, 2014 - link

I don't know, ask Ryan Smith.

My theory for the ~18 month estimate is that it's about how long Apple took to integrate and bring their current series 6 GPU to market in the A7. I suppose if any company had the resources to accelerate that schedule, it would be Apple. But then the question becomes why and does it make sense? There will be faster Series 6 SKU's available for the A8 in the interim.

stingerman - Wednesday, February 26, 2014 - link

But don't forget, Apple has been involved in this design long before Imagination's public reveal. In fact, Apple informed Imagination's design with their real world experience and their own needs. I always expect Apple to get a 6 to 12 month lead. And, Apple has shown themselves to put a very high value on their SoC GPU leadership.