Airy3D's DepthIQ: A Cheap Camera Depth Sensing Solution

by Andrei Frumusanu on June 4, 2020 10:00 AM EST - Posted in

- Mobile

- Camera Sensors

- Airy3D

- Depth Sensing

Over the last few years, we’ve seen a lot of new technologies in the mobile market trying to gather depth information with a camera system. Various companies have offered different solutions, ranging from IR dot projectors paired with IR cameras (structured light) and stereoscopic camera systems to the latest dedicated time-of-flight sensors. One big issue with these implementations is that they all rely on fairly exotic hardware that can significantly increase a device’s bill of materials (BOM), as well as constrain its industrial design.

Airy3D is a small, young company that to date has been active only on the software front, providing various imaging solutions to the market. The company is now ready to transition to a hybrid business model, describing itself as a hardware-enabled software company.

The company’s main claim to fame right now is the “DepthIQ” platform, a hardware-software solution that promises high-quality depth sensing from a single camera at a much lower cost than any alternative.

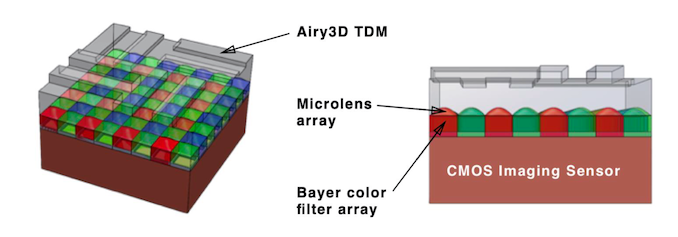

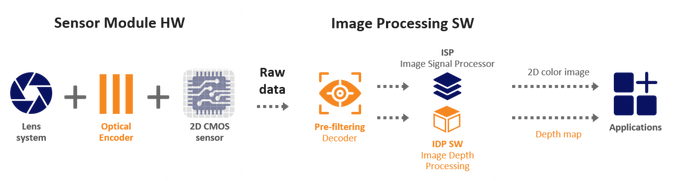

At the heart of Airy3D’s innovation is an additional piece of hardware for existing sensors on the market, called a transmissive diffraction mask, or TDM. The TDM is a transmissive layer manufactured on top of the sensor and shaped with a specific profile pattern, which encodes the phase and direction of the incoming light before it is captured by the sensor.

In essence, the TDM imprints a diffraction pattern (via the Talbot effect) onto the resulting picture, and this pattern differs depending on the distance of the captured object. The neat part is that Airy3D employs advanced software algorithms to decode this pattern, transforming the raw 2D capture into a 3D depth map as well as a 2D image with the diffraction pattern compensated out.
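For a sense of the length scales involved in the Talbot effect, the classic self-imaging distance of a periodic grating can be estimated from its period d and the wavelength λ. The sketch below is textbook optics, not Airy3D’s actual TDM design: the 1.6µm period (two 0.8µm pixels) is a hypothetical value, and the wavelength is treated as its free-space value, ignoring the refractive index of the layers above the photodiodes.

```python
import math


def talbot_length_paraxial(period_um: float, wavelength_um: float) -> float:
    """Rayleigh's paraxial estimate of the Talbot length: z_T = 2 d^2 / lambda."""
    return 2.0 * period_um ** 2 / wavelength_um


def talbot_length_exact(period_um: float, wavelength_um: float) -> float:
    """Distance at which the 0th and +/-1st diffraction orders come back into phase.

    z_T = lambda / (1 - sqrt(1 - (lambda / d)^2)); this reduces to the paraxial
    formula when d >> lambda. A first order only propagates if d > lambda.
    """
    ratio = wavelength_um / period_um
    if ratio >= 1.0:
        raise ValueError("grating period must exceed the wavelength")
    return wavelength_um / (1.0 - math.sqrt(1.0 - ratio ** 2))


if __name__ == "__main__":
    # Hypothetical grating: period spanning two 0.8 um pixels, green light (~0.55 um).
    period, wavelength = 1.6, 0.55
    print(f"paraxial z_T: {talbot_length_paraxial(period, wavelength):.2f} um")
    print(f"exact z_T:    {talbot_length_exact(period, wavelength):.2f} um")
```

The two estimates diverge as the grating period approaches the wavelength, which is the regime that fine-pitch sensors operate in, so the paraxial shortcut should be treated with care there.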

Airy3D’s role in the manufacturing chain of a DepthIQ-enabled camera module is to design the TDM grating, which it then licenses to sensor manufacturers and helps them integrate into their sensors during production. In essence, the company would partner with any of the big sensor vendors, such as Sony Semiconductor, Samsung LSI or Omnivision, to produce a complete solution.

I was curious whether there were any limits on the resolution at which the TDM can be manufactured, since many of today’s camera sensors use 0.8µm pixel pitches and we’re even starting to see 0.7µm sensors coming to market. The company sees no issues in scaling the TDM grating down to 0.5µm, so there’s plenty of headroom for sensor generations to come.

Adding a transmissive layer on top of the sensor naturally doesn’t come for free. The company quotes an MTF (sharpness) reduction of around 3.5%, as well as a 3-5% reduction in sensor sensitivity across the spectral range due to the TDM.
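To put the quoted 3-5% sensitivity loss in photographic terms, it can be converted into stops of exposure and, for a shot-noise-limited pixel, into an SNR penalty. This is a back-of-the-envelope sketch that assumes the loss acts as a uniform reduction in collected photons; it ignores the separate MTF hit and any read-noise effects.

```python
import math


def stops_lost(sensitivity_loss: float) -> float:
    """Exposure penalty in stops (EV) for a fractional light loss, e.g. 0.05 for 5%."""
    if not 0.0 <= sensitivity_loss < 1.0:
        raise ValueError("loss must be in [0, 1)")
    return -math.log2(1.0 - sensitivity_loss)


def shot_noise_snr_penalty_db(sensitivity_loss: float) -> float:
    """SNR drop in dB when photon shot noise dominates.

    Shot-noise SNR scales with sqrt(signal), so the SNR ratio is sqrt(1 - loss);
    in dB that is 20*log10(sqrt(1 - loss)) = 10*log10(1 - loss).
    """
    if not 0.0 <= sensitivity_loss < 1.0:
        raise ValueError("loss must be in [0, 1)")
    return -10.0 * math.log10(1.0 - sensitivity_loss)


if __name__ == "__main__":
    for loss in (0.03, 0.05):  # the range quoted by Airy3D
        print(f"{loss:.0%} loss: {stops_lost(loss):.3f} EV, "
              f"{shot_noise_snr_penalty_db(loss):.2f} dB SNR")
```

By this estimate, the quoted range amounts to less than a tenth of a stop, roughly 0.04 to 0.07 EV.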

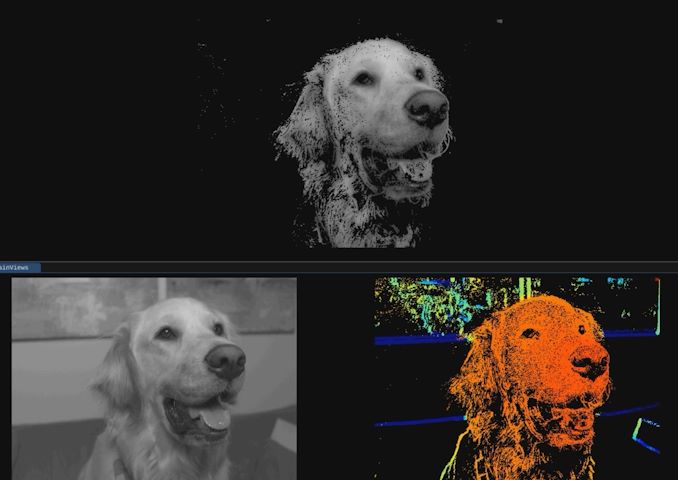

Camera samples without and with the TDM

The company shared with us some samples from a camera system using the same sensor, once without the TDM and once with it. Both pictures use the exact same exposure and ISO settings. In terms of sharpness, I wouldn’t say there are any major, immediately noticeable differences, but the image with the TDM is visibly darker, a result of the sensor’s reduced quantum efficiency (QE).

The software processing is said to be lightweight compared to other depth-sensing solutions, and can run on a CPU, GPU, DSP or even a small FPGA.

The depth discrimination the solution achieves from a single image capture is quite astounding, and the achievable depth-map resolution is bounded essentially only by the sensor, since it scales with the sensor’s resolution.

More complex depth-sensing solutions can add anywhere from $15 to $30 to the BOM of a device. Airy3D expects the technology to see its biggest adoption in the low- and mid-range, as the high end can usually absorb the cost of other solutions and is also unlikely to accept any sacrifice in image quality on its main camera sensors. A cheaper device, for example, could offer depth-sensing face ID unlocking with just a simple front camera sensor, which would represent notable cost savings.

Airy3D says it has customers lined up for the technology and sees a lot of potential for it in the future. It’s an extremely interesting approach to depth sensing, given that it’s a passive hardware solution that integrates into an existing camera sensor.

17 Comments


wishgranter - Thursday, June 4, 2020

Interesting technology...

mkozakewich - Wednesday, June 10, 2020

There is no way that's 3.5%. More like 35%!

edzieba - Thursday, June 4, 2020

They may be a little late to be competitive for upcoming devices: while this is attractive compared to other structured-light or RToF sensors, the new game in town is DToF sensors, the first commercial one quietly being plopped into the recent iPad Pro.

RToF, like that used in the Kinect 2, relies on the relative phase difference between pixel pairs (along with a synchronised illuminator) to watch incoming light shift in and out of relative sync (via relative brightness using phase offset). That gives you a relative depth value vs. the wavelength as it propagates out, but does not give you any absolute depth values. Like with relative-offset structured light approaches (e.g. Kinect 1), there is still a modelling and estimation stage required to turn that into an estimated depth map. Same with this sensor.

DToF, or Direct Time of Flight (AKA Flash LIDAR) is a different beast. Here, a pulse is sent out and each pixel measures the /actual true flight time/ between emission and reception. This not only gives you absolute depth values, but lets you do some VERY cool tricks. For example, you can gate your sensor to pick up the 'furthest' value for each pixel, which allows you to see through dense fog or dust (as the early reflections from intervening particles are discarded), or measure the front and back surfaces of translucent objects. DToF has previously been the domain of rather expensive sensors used for geospatial mapping (those fancy point-clouds taken by drones of forests revealing the forest floor straight through the treetops are produced by pulse LIDAR).
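The distinction drawn above can be made concrete with the textbook range equations. The sketch below is illustrative and not tied to any particular sensor: direct ToF halves the measured round-trip time, while continuous-wave indirect ToF recovers depth from a phase shift that only determines distance modulo an ambiguity range of c/(2f). The 100 MHz modulation frequency and the "keep the last return" gating helper are hypothetical simplifications of the fog-and-dust trick described in the comment.

```python
import math
from typing import Optional, Sequence

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def dtof_depth_m(round_trip_s: float) -> float:
    """Direct ToF: the pulse travels out and back, so depth is half the path length."""
    return C_M_PER_S * round_trip_s / 2.0


def itof_ambiguity_range_m(mod_freq_hz: float) -> float:
    """Maximum unambiguous depth for a continuous-wave indirect ToF sensor."""
    return C_M_PER_S / (2.0 * mod_freq_hz)


def itof_depth_m(phase_rad: float, mod_freq_hz: float) -> float:
    """Indirect ToF: depth from the phase shift of the modulated light.

    The phase wraps every 2*pi, so the result is only known modulo
    itof_ambiguity_range_m(mod_freq_hz).
    """
    wrapped = phase_rad % (2.0 * math.pi)
    return C_M_PER_S * wrapped / (4.0 * math.pi * mod_freq_hz)


def furthest_return_depth_m(return_times_s: Sequence[float]) -> Optional[float]:
    """Toy per-pixel gate: keep only the latest echo, discarding earlier reflections
    from intervening particles. Returns None if the pixel saw no echo."""
    if not return_times_s:
        return None
    return dtof_depth_m(max(return_times_s))


if __name__ == "__main__":
    print(dtof_depth_m(10e-9))                           # a 10 ns round trip
    print(itof_ambiguity_range_m(100e6))                 # hypothetical 100 MHz modulation
    print(furthest_return_depth_m([2e-9, 3e-9, 10e-9]))  # fog echoes, then the wall
```

At 100 MHz the phase wraps roughly every 1.5 m, which is why indirect ToF systems commonly combine several modulation frequencies to extend their range.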

michael2k - Thursday, June 4, 2020

Um, the iPad Pro sensor is remarkably low resolution when compared to the DepthIQ sensor. Its design is more suited to AR and room scale, and it is not even close to per-pixel, as you imagine it can be.

close - Friday, June 5, 2020

Indeed, this is a good visual comparison between the iPad Pro sensor and even FaceID: https://image-sensors-world.blogspot.com/2020/03/t...

edzieba - Friday, June 5, 2020

There's a lot more to a depth sensor's utility than just resolution. https://www.i-micronews.com/with-the-apple-ipad-li...

michael2k - Friday, June 5, 2020

Of course, but your original assertion was that you could get per-pixel depth information, when the current implementation only gives you depth information for the center pixel within a radius of 20 or so pixels.

BedfordTim - Friday, June 5, 2020

Interesting. Another site had linked Apple's patent to an ST MEMS device.

mode_13h - Friday, June 5, 2020

The main problems with any structured-light or ToF solution are that they don't cope well with daylight and have limited range. Passive solutions, like stereo or this, have no inherent range limit and work best in good lighting.