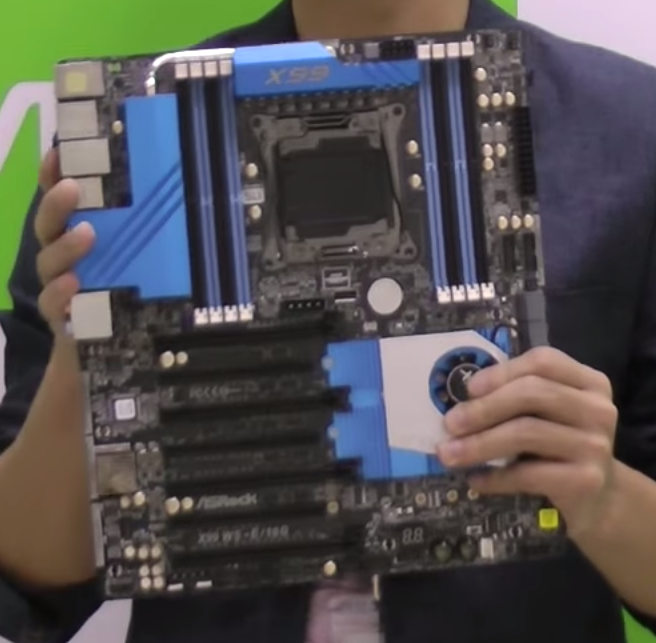

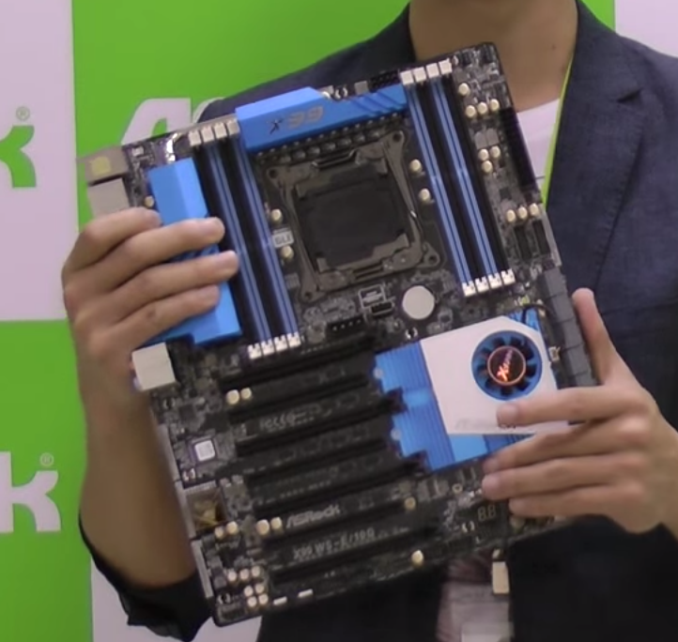

ASRock Announces X99 WS-E/10G: Dual 10GBase-T and Quad x16

by Ian Cutress on November 24, 2014 4:06 AM EST- Posted in

- Motherboards

- ASRock

- 10G Ethernet

- X99

- LGA2011-3

- 10GBase-T

- PLX8747

Edit: Read our full review here: http://www.anandtech.com/show/8781/

Regular readers of my Twitter feed might have noticed that over the past 12 to 24 months, I have lamented the lack of 10 gigabit Ethernet connectors on any motherboard. My particular gripe was the lack of 10GBase-T, the standard that can be easily integrated into the home. Despite my wishes, there are several main barriers to introducing this technology. The first is cost: a 10GBase-T Ethernet card costs $400-$800 depending on your location (using the Intel X520-T2). The second is power consumption, which requires either an active cooler or a passive heatsink plus good airflow to shift up to 14W. Bandwidth can be just as important (PCIe 2.1 x8 for the X540-BT2, although it can work in PCIe 3.0 x8 or x4 mode), and the feature is also limited to those who need faster internal network routing. When all these factors are added together, it does not make for an easy addition to a motherboard. But step forward ASRock.
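As a rough sanity check on the bandwidth point, the sketch below compares the raw per-direction bandwidth of the link widths mentioned above against the 20 Gbit/s that two 10GBase-T ports would need at line rate. The signalling rates and encodings are the standard PCIe 2.x and 3.0 figures; the function and variable names are made up for illustration, and packet-level protocol overhead is ignored, so treat the numbers as upper bounds rather than measured throughput.

```python
# Rough per-direction PCIe bandwidth versus dual 10GbE line rate.
# Signalling rates and encodings are the standard PCIe 2.x / 3.0 values.
# Protocol overhead (packet headers, flow control) is ignored, so real
# throughput will be somewhat lower than these figures.

PCIE_GENERATIONS = {
    # generation: (giga-transfers per second per lane, encoding efficiency)
    "2.x": (5.0, 8 / 10),      # 8b/10b encoding
    "3.0": (8.0, 128 / 130),   # 128b/130b encoding
}

# Two 10 Gbit/s ports running at line rate in one direction (assumed worst case).
DUAL_10GBE_GBPS = 2 * 10.0


def link_gbps(generation: str, lanes: int) -> float:
    """Return usable Gbit/s in one direction for a link of the given width."""
    rate, efficiency = PCIE_GENERATIONS[generation]
    return rate * efficiency * lanes


if __name__ == "__main__":
    for generation, lanes in [("2.x", 8), ("3.0", 8), ("3.0", 4), ("2.x", 4)]:
        gbps = link_gbps(generation, lanes)
        verdict = "enough" if gbps >= DUAL_10GBE_GBPS else "not enough"
        print(f"PCIe {generation} x{lanes}: {gbps:5.1f} Gbit/s ({verdict} for dual 10GbE)")
```

Under these assumptions a PCIe 2.x x8 link and a PCIe 3.0 x4 link land at roughly the same raw bandwidth, both above what two saturated 10GBase-T ports require, while a PCIe 2.x x4 link would fall short.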

The concept of the X99 WS-E/10G is simple. This is a workstation class motherboard aimed at prosumers. This is where 10GBase-T makes the most sense, after all: among users who have sufficient funds to purchase a Netgear 10GBase-T switch costing at least $800, and who measure their internal networking upgrades in terms of hundreds of dollars per port rather than cents per port. The workstation motherboard is also designed to support server operating systems, and is low profile in the rear for fitting into 1U chassis, similar to other ASRock WS motherboards.

In order to deal with the heat from the Intel X540-BT2 chip being used, the extended XXL heatsink is connected to the top heatsink on board, with the final chipset heatsink using an active fan. This is because the chipset heatsink arrangement also has to cool the two PLX 8747 chips that enable the x16/x16/x16/x16 functionality. If a prosumer has enough single slot cards, this can extend into x16/x8/x8/x8/x8/x8/x8 if needed. Extra PCIe power is provided via two Molex ports above and below the PCIe connectors.

Aside from the X540-BT2 chip supplying dual 10GBase-T ports, ASRock also includes dual Intel I210-AT Ethernet ports, for a total of four. All four can be teamed with a suitable switch in play. The key point to note, despite ASRock’s video explaining the technology, and one that sounds perfectly simple to anyone in networking, is that this does not increase your internet speed, only the internal home/office network speed.

The rest of the motherboard is filled with ten SATA 6 Gbps ports plus another two from a controller, along with SATA Express and M.2 support. ASRock’s video suggests the M.2 slot is PCIe 2.0 x4, although their image lacks the Turbo M.2 x4 designation and the chipset would not have enough lanes, so it is probably M.2 x2 shared with the SATAe. Audio is provided by an improved Realtek ALC1150 codec solution, and in the middle of the board is a USB 2.0 Type-A port sticking out of the motherboard, for dongles or easy OS installation out of the case. There are eight USB 3.0 ports on the board as well.

Like the X99 Extreme11, this motherboard is going to be very expensive. Dual PLX 8747 chips and an Intel X540-BT2 chip on their own would put it past most X99 motherboards on the market. To a certain extent we could take the Extreme11 design, remove the LSI chip from it and add the X540-BT2, which still means it will probably be $200-$300 more than the Extreme11. Mark this one down at around $800-$900 as a rough guess, with an estimated release date in December.

Thinking out loud for a moment: 10GBase-T is being used here because it is a prosumer feature, and prosumers already want a lot of other features, hence the combination and high price overall. The moment 10G is added to a basic motherboard, for example one using H97/Z97 (which also reduces the PCIe 3.0 x16 slot down to x8), a $100 board becomes $400+, beyond the cost of any other Z97 motherboard. Ultimately, if 10GBase-T is to become a mainstream feature, the chip needs to come down in price.


50 Comments


Pork@III - Monday, November 24, 2014 - link

Most ISP's in USA and some other countries are both very greedy and very skinflint like Apple. Therefore client access that is both one of the most expensive and slowest and / or restricted by volume in the world.

DanNeely - Monday, November 24, 2014 - link

Or buy a modem instead of renting it. A month ago I upgraded from 15MB docsis 2 to 75MB docsis 3 cable. My shiny new Moto Surfboard has a gigabit port (needed to support its max 120/133mbps data rate). I just need to replace my old 100mb/N router now; turns out it bottlenecks at ~50mbps. That hasn't been an issue for internal traffic, all my wired traffic runs over a gigabit switch (wanted more than 4 ports and at the time I bought it there was still a significant price premium for gigabit routers), but replacing it's going to be my next medium sized tech purchase. (I was hoping ac routers would get cheaper first; but can't see buying a gigabit-N model now.)

DCide - Monday, November 24, 2014 - link

Not really true Jeff - AT&T is the only one of the top 5 ISPs not offering 100Mbps+ service now (unless you count their very limited availability of Gigabit service). Fast ethernet tops out around 80 Mbps (or maybe 90 if you're fortunate) in real-world usage. An ISP advertizing 100Mbps service, such as Cox, typically delivers slightly over 120Mbps. So 100Base-T isn't "going to become a bottleneck" for ISPs, it already is.

Hydrocron - Monday, November 24, 2014 - link

Almost every modem or gateway that my company is putting in to the field along with most of our competitors all support Gb on the LAN ports and most of them have Gb on the WAN port as well. Even if the higher speed offerings are not available in your neighborhood you can still take advantage of the Gb LAN ports for in home wiring.

The next big bottleneck that you will see change over the next year or so will be the built in wifi migrating from single channel 2.4ghz 802.11n to 2.4 and 5 ghz MIMO 802.11ac now that the chipsets have dropped in price and are spec'd for our future Gateways and modems.

Ammaross - Wednesday, November 26, 2014 - link

The problem isn't consumer equipment or die-shrink, power requirements, etc. It's PCIe lanes. Consumer-class Intel chips have very few PCIe lanes left over once you give x16 to a video card. Give consumer-class chips the 40 lanes of the 2011-socket chips and we'll have enough internal bandwidth (that's PCIe lane to CPU/mem bandwidth) to make 10gig ethernet useful.

DanNeely - Wednesday, November 26, 2014 - link

Skylake will go a long way to mitigating that problem. In addition to upgrading the chipset to PCIe 3.0, it's adding 10 more flexible IO ports (can be configured as a PCIe lane, Sata port, or USB3 port). That should give enough extra capacity to add a 10GB port to a consumer board without needing a PLX or cutting into GPU resources.

name99 - Monday, November 24, 2014 - link

Your overall theme is correct, the details are not.

802.3ab (gig ethernet over twisted pair, ie what most of us care about) was ratified in 1999, and Apple was shipping machines with Gig ethernet in 2001. Moreover these machines were laptops --- gigE did not require outrageous amounts of power, but it DID require what a few years earlier might have been considered outrageous amounts of signal processing, unlike the fairly "obvious" (hah!) signal processing required for 100M twisted pair ethernet.

Daniel Egger - Monday, November 24, 2014 - link

Yes, it is impractical but not really expensive if you can do without a switch or need only few ports and can go SFP+. Just not sure why they decided to integrate 10GBase-T PHYs instead of SFP+ ports and be done with it; allows for a ton more flexibility and is much cheaper.

The problem is really that it's *very* hard to really use the 10GBit/s. While using 1Gbit/s is no problem anymore, properly saturating a 10GBit/s link is still tricky; we tried that with our shiny new server hardware and failed to get more than with bonding so we decided not to fork out a big chunk of money for a switch with enough SFP+ ports.

DCide - Monday, November 24, 2014 - link

Daniel, I would think copying files between two PCs with SSDs should be enough to make 10GBase-T worthwhile; besides, doesn't the "bonding" you're using still limit a single connection to the 1GBit speed? So when you say "*very* hard to really use" aren't you assuming mainstream usage models? I ask because you obviously have experience with it, but it sounds like it's within a certain assumed context. I also wonder why it's necessary to saturate the network in order to believe you're getting the value out of it; I'm accustomed to environments where you want to avoid saturating most computer resources.

The bonding may be more cost effective and practical for a typical corporate data center, but for copying 4K video files around I'd rather have 10GBase-T now. And obviously this need will only grow as time goes on.

Daniel Egger - Tuesday, November 25, 2014 - link

> Daniel, I would think copying files between two PCs with SSDs should be enough to make 10GBase-T worthwhile

Nope, because the underlying protocols (NFS, SMB...) down to TCP/UDP are out of the box not prepared to push data that fast.

> besides, doesn't the "bonding" you're using still limit a single connection to the 1GBit speed?

Coincidentally it does here but that's not necessarily the case. But again the point is: it's more than unlikely to saturate a 1000Base-T let alone a 10GBase-T link with a single connection except for synthetical benchmarks.

> So when you say "*very* hard to really use" aren't you assuming mainstream usage models?

I guess you could call our usage mainstream however most users would probably disagree at a hardware value of around 15k€. ;) The use case is a multi-server VM hosting (only for company use) setup, we do have 3 2-socket Xeon servers and a NAS. Most of the images used by the VMs are on the NAS served by NFS. 2 of the servers do have 10GBase capable SFP+ ports so just for tests we connected them back to back and ran some benchmarks (exporting storage on an SSD to the other server for instance), tweaked a little and reran them but the results were not decent enough to warrant a multi k € upgrade of the switch and more importantly the NAS which can not even saturate the 1000Base-T links.

> I also wonder why it's necessary to saturate the network in order to believe you're getting the value out of it

Because the only important KPI is the QoS. Improving the bandwidth without having a bottleneck (as indicated by the saturation) does not result in a better QoS, so basically it'd be wasted money and no one likes that. ;)

> The bonding may be more cost effective and practical for a typical corporate data center, but for copying 4K video files around I'd rather have 10GBase-T

Chances are you will be *really* disappointed by the performance you might get out of that. If you want to copy files you'd be much better off looking at a eSATA or Thunderbolt solution...