Intel SSD DC S3500 Review (480GB): Part 1

by Anand Lal Shimpi on June 11, 2013 6:10 PM EST - Posted in Storage, IT Computing, SSDs, Intel, Datacenter, Enterprise

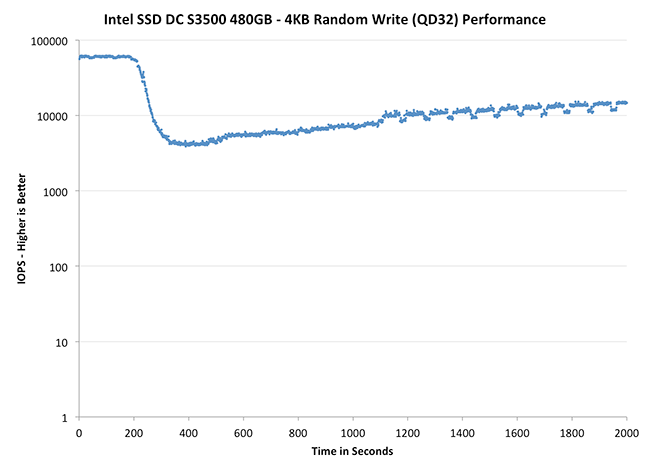

Performance Consistency

In our Intel SSD DC S3700 review I introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and demonstrating that required some additional testing. The reason SSDs don't deliver consistent IO latency is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to keep operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying it can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

To generate the data below I took a freshly secure erased SSD and filled it with sequential data. This ensures that all user-accessible LBAs have data associated with them. Next I kicked off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. I ran the test for just over half an hour, nowhere near as long as our steady state tests, but enough to give me a good look at drive behavior once all spare area had filled up.
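
The request stream described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the tool used for this review: the names `random_write_batches` and `compression_ratio` are hypothetical, each batch merely stands in for the 32 requests a benchmark keeps outstanding, and `os.urandom` is assumed as the source of incompressible data. The code builds offsets and buffers only; it does not issue any I/O.

```python
import os
import random
import zlib

BLOCK = 4096  # 4KB transfer size


def random_write_batches(span_bytes, queue_depth=32, batches=4, seed=0):
    """Yield batches of (offset, payload) pairs for a 4KB random write test.

    Hypothetical sketch: each batch stands in for the queue_depth requests a
    benchmark tool keeps outstanding. Offsets are 4KB-aligned and drawn
    uniformly from the whole span (all LBAs). Payloads come from os.urandom,
    so they are effectively incompressible (the seed only fixes offsets).
    No I/O is issued.
    """
    rng = random.Random(seed)
    slots = span_bytes // BLOCK  # number of 4KB-aligned positions in the span
    for _ in range(batches):
        yield [(rng.randrange(slots) * BLOCK, os.urandom(BLOCK))
               for _ in range(queue_depth)]


def compression_ratio(payload):
    """Compressed size / original size; close to 1.0 means incompressible."""
    return len(zlib.compress(payload)) / len(payload)
```

For example, `next(random_write_batches(480 * 10**9))` yields 32 requests spread across a hypothetical 480GB span; `compression_ratio` on any payload lands near 1.0, while a buffer of zeros compresses to a tiny fraction of its size.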

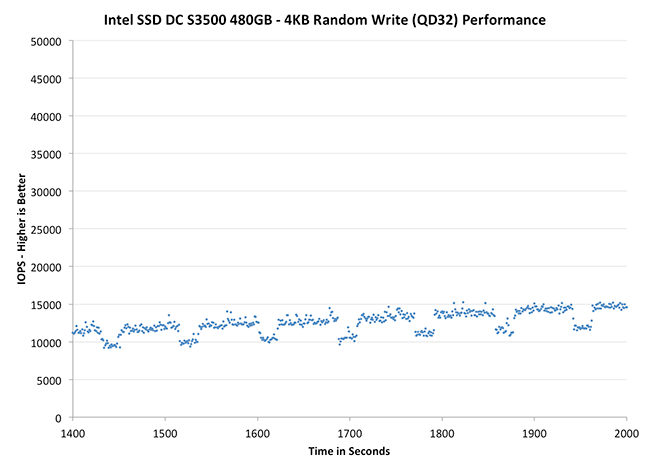

I recorded instantaneous IOPS every second for the duration of the test. I then plotted IOPS vs. time and generated the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 50K IOPS for better visualization of differences between drives.
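
Per-second IOPS is simply a count of completed operations in each one-second bucket. Because the queue depth is held at 32, Little's law (outstanding I/Os = throughput × mean latency) also converts each IOPS sample into an average latency for that second. The sketch below assumes a hypothetical log of completion timestamps in seconds from the start of the test; the review does not describe how its IOPS log was produced, and the function names are illustrative.

```python
import math


def iops_per_second(completion_times):
    """Bin I/O completion timestamps (seconds from test start) into
    one-second buckets, giving the instantaneous IOPS for each second."""
    if not completion_times:
        return []
    counts = [0] * (math.floor(max(completion_times)) + 1)
    for t in completion_times:
        counts[math.floor(t)] += 1
    return counts


def mean_latency_ms(iops, queue_depth=32):
    """Little's law: with queue_depth I/Os always outstanding, the average
    latency over the interval is queue_depth / IOPS (returned in ms)."""
    return queue_depth * 1000 / iops
```

At QD32, a second that completes 32,000 IOPS corresponds to a 1 ms mean latency and a 4,000 IOPS dip to 8 ms. This is an average over the second, so individual outlier I/Os within it are not visible.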

The high-level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, I varied the percentage of the drive that I filled and tested depending on the amount of spare area I was trying to simulate. Each data set is labeled with the user capacity the SSD vendor would have advertised had it decided to use that specific amount of spare area.

If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case, but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here, but other controllers may not behave the same way.
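
The partition sizing is simple arithmetic. The helper below is a hypothetical sketch: it assumes 512-byte logical sectors and decimal gigabytes (as capacities are advertised), and the 400GB target is an illustrative value rather than one of the settings tested here. It only counts the extra spare area created by leaving space unpartitioned; the drive's built-in spare area is not included.

```python
SECTOR_BYTES = 512  # assumption: 512-byte logical sectors


def spare_area_plan(drive_gb, simulated_gb):
    """Size a partition that leaves part of the drive untouched as extra
    spare area. Capacities are decimal GB (10**9 bytes), as drives are
    advertised; a real drive's LBA count can be slightly larger."""
    if not 0 < simulated_gb <= drive_gb:
        raise ValueError("simulated capacity must be within the drive size")
    part_bytes = simulated_gb * 10**9
    unused_bytes = (drive_gb - simulated_gb) * 10**9
    return {
        "partition_sectors": part_bytes // SECTOR_BYTES,
        "unused_gb": unused_bytes / 10**9,
        # extra spare area relative to the space the host can write
        "extra_spare_pct": 100 * unused_bytes / part_bytes,
    }


plan = spare_area_plan(480, 400)
print(plan)
# {'partition_sectors': 781250000, 'unused_gb': 80.0, 'extra_spare_pct': 20.0}
```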

The first set of graphs shows the performance data over the entire 2000-second test period. In these charts you'll notice an early period of very high performance followed by a sharp drop-off. What you're seeing is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
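
The shape of that drop-off can be reproduced qualitatively with a toy model. The sketch below swaps in a textbook technique, greedy garbage collection on a page-mapped flash translation layer, as an assumption; it is not Intel's (or any vendor's) algorithm, and the function name and parameters are hypothetical. It mirrors the test procedure: fill every LBA sequentially, then issue uniformly random single-page overwrites. Write amplification stays at exactly 1.0 while erased blocks remain, then rises once each new write forces still-valid pages to be copied out of a victim block.

```python
import random


def simulate_write_amplification(user_pages, spare_pages, pages_per_block,
                                 host_writes, window=256, seed=0):
    """Toy page-mapped FTL with greedy garbage collection (hypothetical).

    Returns write amplification (NAND page writes / host page writes) for
    each window of host writes during the random-write phase.
    """
    num_blocks = (user_pages + spare_pages) // pages_per_block
    # Guarantees that greedy GC always finds a block with a stale page.
    assert user_pages < (num_blocks - 2) * pages_per_block
    rng = random.Random(seed)
    valid = [set() for _ in range(num_blocks)]  # live LBAs in each block
    written = [0] * num_blocks                  # pages programmed since erase
    location = {}                               # LBA -> block holding it
    free = list(range(num_blocks))              # erased blocks
    active = free.pop()
    nand_writes = 0

    def program(lba):
        nonlocal active, nand_writes
        if written[active] == pages_per_block:
            active = free.pop()                 # open a fresh erased block
        old = location.get(lba)
        if old is not None:
            valid[old].discard(lba)             # old copy becomes stale
        valid[active].add(lba)
        written[active] += 1
        location[lba] = active
        nand_writes += 1

    def garbage_collect():
        full = [b for b in range(num_blocks)
                if b != active and written[b] == pages_per_block]
        victim = min(full, key=lambda b: len(valid[b]))  # fewest live pages
        survivors = list(valid[victim])
        valid[victim].clear()
        written[victim] = 0
        free.append(victim)                     # erase the victim block
        for lba in survivors:                   # relocate its live data
            program(lba)

    def host_write(lba):
        while len(free) < 2:                    # keep a block free for GC
            garbage_collect()
        program(lba)

    for lba in range(user_pages):               # sequential fill
        host_write(lba)

    ratios = []
    for start in range(0, host_writes, window):
        n = min(window, host_writes - start)
        before = nand_writes
        for _ in range(n):
            host_write(rng.randrange(user_pages))
        ratios.append((nand_writes - before) / n)
    return ratios
```

With `simulate_write_amplification(6400, 512, 64, 20000)` the first window reports 1.0 and later windows climb well above it; raising `spare_pages` to 2560 lowers the steady-state figure in this model, which is the effect that leaving part of the drive unpartitioned relies on.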

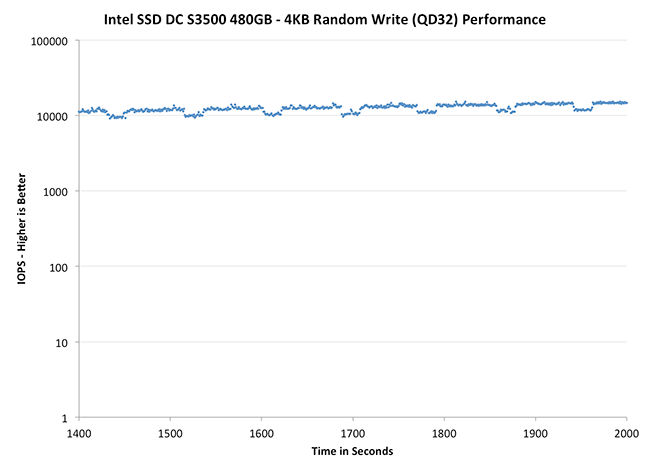

The second set of graphs zooms in on the beginning of steady-state operation for the drive (t=1400s). The third set covers the same period but on a linear performance scale.

[Graph: IOPS vs. time over the full 2000-second test, log scale. Data sets: Intel SSD DC S3500 480GB, Intel SSD DC S3700 200GB, Seagate 600 Pro 400GB; spare area: Default]

While it's not quite as pretty a curve as what we saw with the S3700, the two drives are clearly related. Intel's SSD DC S3500 delivers incredibly predictable performance. The biggest takeaway is that Intel is still able to deliver good performance consistency even with much less NAND spare area than on the S3700. What we see here is enabled by architecture, not just spare area.
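
One way to put a number on "predictable" is to compare the slowest seconds with the typical second once the drive reaches steady state. The metric below (1st-percentile IOPS divided by median IOPS, from t=1400s onward) is a hypothetical illustration, not a measure used in this review, and it assumes a list holding one IOPS sample per second.

```python
import statistics


def consistency_ratio(iops, start_s=1400):
    """Return p1 / median IOPS for the steady-state part of a per-second trace.

    A value near 1.0 means the worst seconds look like the typical second;
    a small value means frequent deep dips. Hypothetical metric.
    """
    steady = iops[start_s:]
    if len(steady) < 2:
        raise ValueError("need at least two steady-state samples")
    p1 = statistics.quantiles(steady, n=100)[0]  # 1st percentile
    return p1 / statistics.median(steady)
```

A perfectly flat trace scores 1.0; a single deep dip in an otherwise flat 100-second window is enough to pull the score far below that, which is why worst-case behavior dominates this kind of metric.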

[Graph: IOPS vs. time from the start of steady state (t=1400s), log scale. Data sets: Intel SSD DC S3500 480GB, Intel SSD DC S3700 200GB, Seagate 600 Pro 400GB; spare area: Default]

Remember this predictable little pattern? The periodic dips in performance are Intel's defrag/GC routines operating at regular (and frequent) intervals. You'll also notice the slight upward trend here: the S3500 appears to improve its performance over time, even under heavy load, without sacrificing consistency.
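
Both features described here, regularly spaced dips and a slight upward drift, can be pulled out of a per-second IOPS trace with two small helpers. These are hypothetical sketches: a "dip" is assumed to be any run of samples below 80% of the median (an arbitrary threshold), the trend is an ordinary least-squares slope, and the review itself does not state the GC interval.

```python
import statistics


def dip_period(iops, threshold=0.8):
    """Median spacing (in samples, i.e. seconds) between the starts of dips,
    where a dip is any run of samples below threshold * median IOPS.
    Returns None if fewer than two dips are found."""
    limit = threshold * statistics.median(iops)
    starts = [i for i, v in enumerate(iops)
              if v < limit and (i == 0 or iops[i - 1] >= limit)]
    if len(starts) < 2:
        return None
    return statistics.median(b - a for a, b in zip(starts, starts[1:]))


def trend_per_second(iops):
    """Least-squares slope of IOPS vs. time (IOPS gained per second).
    Needs at least two samples."""
    n = len(iops)
    mean_t = (n - 1) / 2
    mean_v = sum(iops) / n
    num = sum((t - mean_t) * (v - mean_v) for t, v in enumerate(iops))
    den = sum((t - mean_t) ** 2 for t in range(n))
    return num / den
```

A synthetic trace that drops from 30,000 to 20,000 IOPS every tenth second yields a `dip_period` of 10; a positive `trend_per_second` corresponds to the upward drift visible in the graph.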

[Graph: IOPS vs. time from the start of steady state (t=1400s), linear scale up to 50K IOPS. Data sets: Intel SSD DC S3500 480GB, Intel SSD DC S3700 200GB, Seagate 600 Pro 400GB; spare area: Default]

This zoomed-in view really gives us great perspective on what's going on. I included comparable data from other drives in the S3700 review, but the sizes and scales of those charts made it impossible to include them here in short form.

54 Comments


toyotabedzrock - Thursday, June 13, 2013

If my math is correct, excluding the spare area, this MLC can only be written to 700 times?

ShieTar - Friday, June 14, 2013

Your math is unrealistically simplified. You could fill up 75% of the drive with data that you never change, so then you can write the remaining 25% of space 2800 times before you reach the 450TB written.

Also, Intel only wants to guarantee 450TB written. That could still mean that the average drive survives much longer; it just is not meant as a major selling point for this drive.

jhh - Friday, June 14, 2013

I don't understand why the review says latency measurements are done, when the chart shows IOPS. Latency is measured in milliseconds, not IOPS. I want to know how long it takes for the drive to complete an operation after it gets the command. Even more interesting is how that measurement changes as the queue is bigger or smaller. Any chance of getting measurements like this?

I'm not sure how this works in Windows, but in Linux, when an application wants to be sure data is persistently stored, this operation translates into a filesystem barrier, which does not return until the drive has written the data (or stored it in a place where it's safe from power failure). The faster the barrier completes, the faster the application runs. This is why I would like to know latency in milliseconds. While IOPS has its value, so does milliseconds.

mk8 - Tuesday, January 14, 2014

Anand, I think one thing that you don't mention at all in the article is whether the S3500 needs or benefits from over-provisioning. I guess the performance benefits would be minor, but what about write amplification? I look forward to "Part 2" of the article. Thanks