Corsair's Force SSD Reviewed: SF-1200 is Very Good

by Anand Lal Shimpi on April 14, 2010 2:27 AM EST

Power - A Telling Story

I'm quietly expanding our SSD test suite. I haven't made the results public yet, but you'll see them appear in articles and in Bench over the coming months. One of the new tests measures how much power the drive itself draws in a few scenarios. The results with the SandForce SSDs in particular were fascinating enough that I'm unveiling some of these numbers earlier than I'd originally planned.

In an SSD there are three main consumers of power: the controller, any external DRAM, and the NAND. As long as you're not bound by the speed of the controller, the biggest consumer of power should be the NAND itself. Here's where things get interesting: SandForce's DuraWrite technology should mean that far less data is written to NAND in the Corsair Force than in more conventional SSDs. Unless the controller consumes an absurd amount of power, we should see this reflected in the power numbers.

Note that I am not running with Device Initiated Power Management (DIPM) enabled; it is disabled by default in desktop installations of Windows. In a notebook, power consumption will be lower on drives that support DIPM, but I'll save that for another article.

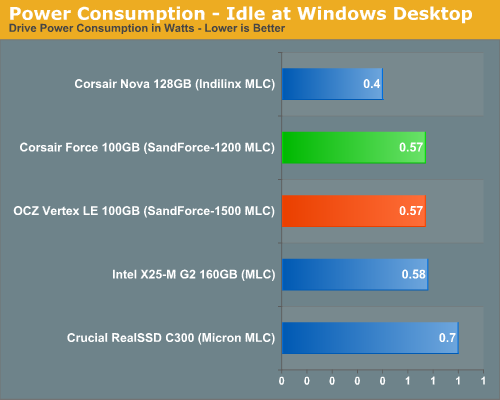

At idle the two SF drives and Intel's X25-M G2 consume roughly the same power. Crucial's C300 is a bit more power hungry while the Indilinx based Nova is noticeably lower.

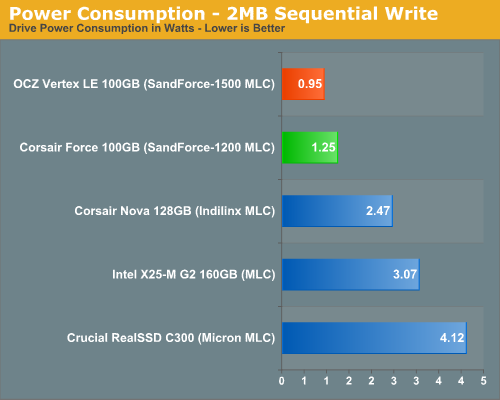

The highest power draw scenario is a sequential write test: the NAND is being written to as quickly as possible, so power consumption is at its highest. Here our hypothesis is borne out. The SandForce drives write less to NAND than the competition, and their power consumption is less than half that of the Intel and Crucial drives. Based on the power numbers alone, I'd say SandForce's compression is working extremely well in this test, possibly writing only about half as much data to the NAND itself. In practice this means the controller has less to track, the NAND has a longer lifespan, and performance is very competitive.
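To put the lifespan point in rough numbers, here is a toy model (not SandForce's method) in which the controller stores only a fraction of each host write. RAW_NAND_GIB (128) and PE_CYCLES (5,000) are hypothetical placeholders rather than published specs for this drive, and write amplification from garbage collection is ignored.

```python
# Toy model: how writing less data to NAND stretches endurance.
# RAW_NAND_GIB and PE_CYCLES are hypothetical placeholders, not Corsair/SandForce specs.

RAW_NAND_GIB = 128   # assumed raw flash on the drive
PE_CYCLES = 5_000    # hypothetical program/erase rating, for illustration only


def nand_writes_gib(host_writes_gib: float, data_fraction: float) -> float:
    """GiB actually written to NAND when the controller stores only
    `data_fraction` of each host write (1.0 = no reduction, 0.5 = half)."""
    if not 0.0 < data_fraction <= 1.0:
        raise ValueError("data_fraction must be in (0, 1]")
    return host_writes_gib * data_fraction


def host_endurance_tib(raw_nand_gib: float, pe_cycles: int,
                       data_fraction: float) -> float:
    """Host data (TiB) writable before the rated P/E cycles are used up,
    ignoring garbage-collection write amplification."""
    total_nand_budget_gib = raw_nand_gib * pe_cycles
    return total_nand_budget_gib / data_fraction / 1024


if __name__ == "__main__":
    for frac in (1.0, 0.5):
        print(f"data_fraction={frac}: "
              f"{nand_writes_gib(100, frac):.0f} GiB to NAND per 100 GiB written, "
              f"~{host_endurance_tib(RAW_NAND_GIB, PE_CYCLES, frac):.0f} TiB endurance")
```

In this model, halving the data that reaches the NAND doubles the host-write budget, whatever placeholder values you plug in.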

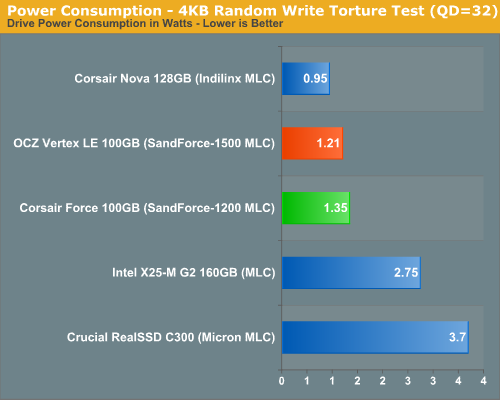

In our random write test, the power gap between the SF and Intel drives narrows, though not tremendously. The Indilinx drive actually beats the SF offerings, but I suspect that's because we're more controller-bound here and the data just isn't making it out to the NAND.

Another curious observation is that the SF-1200-based Corsair Force actually draws more power than OCZ's Vertex LE. The difference isn't noticeable in real-world desktop use, but it's odd. I wonder if the SF-1200s are really just higher-yielding, lower-binned SF-1500s. Perhaps they draw more power as a result?

The Test

| Component | Configuration |
|---|---|
| CPU | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled) |
| Motherboard | Intel DX58SO (Intel X58) |
| Chipset | Intel X58 + Marvell SATA 6Gbps PCIe |
| Chipset Drivers | Intel 9.1.1.1015 + Intel IMSM 8.9 |
| Memory | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card | eVGA GeForce GTX 285 |
| Video Drivers | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution | 1920 x 1200 |
| OS | Windows 7 x64 |

63 Comments


Anand Lal Shimpi - Wednesday, April 14, 2010

That I'm not sure of. The 2008 Iometer build is supposed to use a fairly real-world-inspired data set (Intel apparently helped develop the random algorithm), and the performance appears to be reflected in our real-world tests (both PCMark Vantage and our Storage Bench).

That being said, SandForce is apparently working on its own build of Iometer that lets you select from all different types of source data to really stress the engine.

Also keep in mind that the technology at work here is most likely more than just compression/data deduplication.

Take care,

Anand
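Since the SandForce Iometer build described in this comment isn't available, here is a hypothetical sketch of the general idea: generating write data whose compressibility you can dial in. Each 4 KiB block gets a chosen fraction of fresh random bytes padded with zeros. The names `make_buffer` and `random_fraction` are illustrative only, and zlib is a stand-in; SandForce's actual data-reduction scheme is not public and, as noted above, is likely more than plain compression.

```python
import os
import zlib

BLOCK = 4096  # 4 KiB block size, assumed for illustration


def make_buffer(n_blocks: int, random_fraction: float) -> bytes:
    """Build test data with roughly tunable compressibility: each 4 KiB block
    starts with fresh random bytes and is padded out with zeros."""
    if not 0.0 <= random_fraction <= 1.0:
        raise ValueError("random_fraction must be in [0, 1]")
    n_random = int(BLOCK * random_fraction)
    blocks = [os.urandom(n_random) + bytes(BLOCK - n_random)
              for _ in range(n_blocks)]
    return b"".join(blocks)


def compressed_ratio(data: bytes) -> float:
    """Compressed size / original size, using zlib as a stand-in compressor."""
    return len(zlib.compress(data)) / len(data)


if __name__ == "__main__":
    for frac in (0.0, 0.25, 0.5, 1.0):
        buf = make_buffer(256, frac)
        print(f"random_fraction={frac:.2f} -> zlib ratio {compressed_ratio(buf):.2f}")
```

The compressed ratio tracks `random_fraction` closely, because random bytes are essentially incompressible while the zero padding nearly vanishes.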

keemik - Wednesday, April 14, 2010

Call me anal, but I am still not happy with the response ;)

Maybe the first 4k block is filled with random data, but then that block is used over and over again.

That random read/write performance is too good to be true.
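The reused-block hypothesis is easy to check on any captured buffer: if one random 4 KiB block is written repeatedly, a deduplicating controller would have almost nothing new to store. This hypothetical sketch counts distinct 4 KiB blocks by hashing each one; it makes no claim about what Iometer or the SandForce controller actually do.

```python
import hashlib
import os

BLOCK = 4096  # 4 KiB block size


def unique_blocks(data: bytes, block_size: int = BLOCK) -> int:
    """Count distinct fixed-size blocks by hashing each one with SHA-256."""
    digests = {hashlib.sha256(data[i:i + block_size]).digest()
               for i in range(0, len(data), block_size)}
    return len(digests)


if __name__ == "__main__":
    one_block = os.urandom(BLOCK)
    reused = one_block * 256           # the same random 4 KiB block written 256 times
    fresh = os.urandom(BLOCK * 256)    # 256 independently random blocks
    print("reused buffer:", unique_blocks(reused), "unique block(s)")
    print("fresh buffer: ", unique_blocks(fresh), "unique block(s)")
```

A buffer that looks random within each block can still collapse to a single unique block, which is why per-block randomness alone doesn't settle the question.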

Per Hansson - Wednesday, April 14, 2010

Just curious about the missing capacitor: won't there be a big risk of data loss in case of a power outage?

Do you know what design changes were made to get rid of the capacitor? Were any additional components other than the capacitor removed?

I ask because it can be bought in low quantities for a quite OK retail price of £16.50 here:

http://www.tecategroup.com/ultracapacitors/product...

bluorb - Wednesday, April 14, 2010

A question: if the controller uses lossless compression in order to write less data, isn't it possible to say that the drive's working capacity is determined by the type of information written to it?

Example: if user X's data can routinely be compressed at a 2:1 ratio, then it can be said that for this user the drive's working capacity is 186GB and the cost per GB is $2.20.

Am I onto something or completely off track?
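The commenter's arithmetic can be reproduced directly, assuming the ~93GB usable capacity implied by the 186GB figure at 2:1 and the $410 price quoted in another comment in this thread. This only restates the hypothetical; per the replies in this thread, the drive does not expose the extra space to the OS.

```python
# Reproduces the commenter's hypothetical "effective capacity" at a 2:1 ratio.
# Per other replies in the thread, the OS-visible capacity is fixed, so this
# space is not actually handed to the user.

USABLE_GB = 93.0   # implied by the commenter's 186GB at 2:1
PRICE_USD = 410.0  # price quoted elsewhere in the thread


def effective_capacity(usable: float, compression_ratio: float) -> float:
    """Capacity if data shrank by `compression_ratio`:1 and that space were exposed."""
    return usable * compression_ratio


cap = effective_capacity(USABLE_GB, 2.0)
print(f"{cap:.0f} GB effective, ${PRICE_USD / cap:.2f} per GB")
```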

semo - Wednesday, April 14, 2010

This compression is not detectable by the OS. As the name (DuraWrite) suggests, it is there to reduce wear on the drive, which can also give better performance, but not extra capacity.

ptmixer - Wednesday, April 14, 2010

I'm also wondering about the capacity of these SandForce drives. It seems the actual capacity varies depending on the type of data stored. If the drive has 128 GB of flash, 93.1 GB usable after spare area, then that must be the amount of compressed/thinned data you can store, so the amount of 'real' data should be much more, thereby improving the price/GB of the drive.

For example, if the drive is partly used and your OS says it has 80 GB available, and you then store 10 GB of compressible data on it, won't it report that it perhaps still has 75 GB available (rather than 70 GB as on a normal drive)? Anand, help us with our confusion!

P.S. Thanks for all the great SSD articles! Could you also continue to speculate on how well a drive will work on a non-TRIM-enabled system, like OS X, or as an ESXi datastore?

JarredWalton - Wednesday, April 14, 2010

I commented on this in the "This Just In" article, but to recap:

In terms of pure area used, Corsair sets aside 27.3% of the raw NAND capacity. However, with DuraWrite (i.e. compression) they could actually have even more spare area than 35GiB. You're guaranteed 93GiB of storage capacity; if the data happens to compress better than average, you'll have more spare area left (and more performance), while with data that doesn't compress well (e.g. movies and JPG images) you'll have less spare area remaining.

So even at 0% compression you'd still have at least 35GiB of spare and 93GiB of storage, but with an easily achievable 25% compression average you would have as much as ~58GiB of spare area (45% of the total capacity would be "spare"). If you get an even better 33% compression you'd have 66GiB of spare area (51% of total capacity), etc.
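The spare-area figures above follow from a simple calculation, sketched here assuming 128 GiB of raw NAND (implied by the 27.3% figure, 35/128) and a drive whose full 93.1 GiB of user capacity is written with data that shrinks by a given percentage:

```python
RAW_NAND_GIB = 128.0  # raw flash implied by the 27.3% spare figure (35 / 128)
USER_GIB = 93.1       # capacity guaranteed to the OS


def spare_area_gib(compression: float) -> float:
    """Spare area left when a full drive's user data shrinks by `compression`
    (0.25 means the data is stored at 75% of its original size)."""
    if not 0.0 <= compression < 1.0:
        raise ValueError("compression must be in [0, 1)")
    stored = USER_GIB * (1.0 - compression)
    return RAW_NAND_GIB - stored


for c in (0.0, 0.25, 0.33):
    spare = spare_area_gib(c)
    print(f"{c:.0%} compression: {spare:.1f} GiB spare "
          f"({spare / RAW_NAND_GIB:.0%} of raw NAND)")
```

This reproduces the ~35, ~58 and ~66 GiB figures (27%, 45% and 51% of raw NAND). In this model the OS-visible capacity stays at 93.1 GiB; compression only changes how much physical flash is left over as spare area.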

KaarlisK - Wednesday, April 14, 2010

Just resize the browser window. Margins won't help if you have a 1920x1080 screen anyway.

RaistlinZ - Wednesday, April 14, 2010

I don't see a reason to opt for this over the Crucial C300 drive, which performs better overall and is quite a bit cheaper per GB. Yes, these drives use less power, but I hardly see that as a determining factor for people running high-end CPUs and video cards anyway.

If they can get the price down to $299 then I may give it a look. But $410 is just way too expensive considering the competition that's out there.

Chloiber - Wednesday, April 14, 2010

I did test it. If you create the test file, it compresses to essentially 0 percent of its original size. But if you write sequential or random data to the file, you can't compress it at all. So I think Iometer uses random data for the tests. Of course this is a critical point when testing such drives, and I am sure that Anand tested it too before running the tests. I hope so at least ;)
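A quick way to repeat this kind of check is to compress samples of each kind of data. The sketch below assumes a freshly created test file is zero-filled (an assumption; the comment doesn't say what it contains) and uses zlib as a stand-in for whatever compressor was used in the test.

```python
import os
import zlib

SIZE = 1 << 20  # 1 MiB sample


def ratio(data: bytes) -> float:
    """Compressed size as a fraction of the original (zlib as a stand-in)."""
    return len(zlib.compress(data, 9)) / len(data)


zero_filled = bytes(SIZE)       # assumed contents of a freshly created test file
random_fill = os.urandom(SIZE)  # a file overwritten with random data

print(f"zero-filled: {ratio(zero_filled):.4f}")
print(f"random:      {ratio(random_fill):.4f}")
```

Zero-filled data shrinks to a tiny fraction of its size, while random data comes out at slightly over 100% because of container overhead. This matches the two outcomes described in the comment.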