The Inland Performance Plus 2TB SSD Review: Phison's E18 NVMe Controller Tested

by Billy Tallis on May 13, 2021 8:00 AM EST

Advanced Synthetic Tests

Our benchmark suite includes a variety of tests that are less about replicating any real-world IO patterns, and more about exposing the inner workings of a drive with narrowly-focused tests. Many of these tests will show exaggerated differences between drives, and for the most part that should not be taken as a sign that one drive will be drastically faster for real-world usage. These tests are about satisfying curiosity, and are not good measures of overall drive performance. For more details, please see the overview of our 2021 Consumer SSD Benchmark Suite.

Whole-Drive Fill

Chart tabs: Pass 1, Pass 2

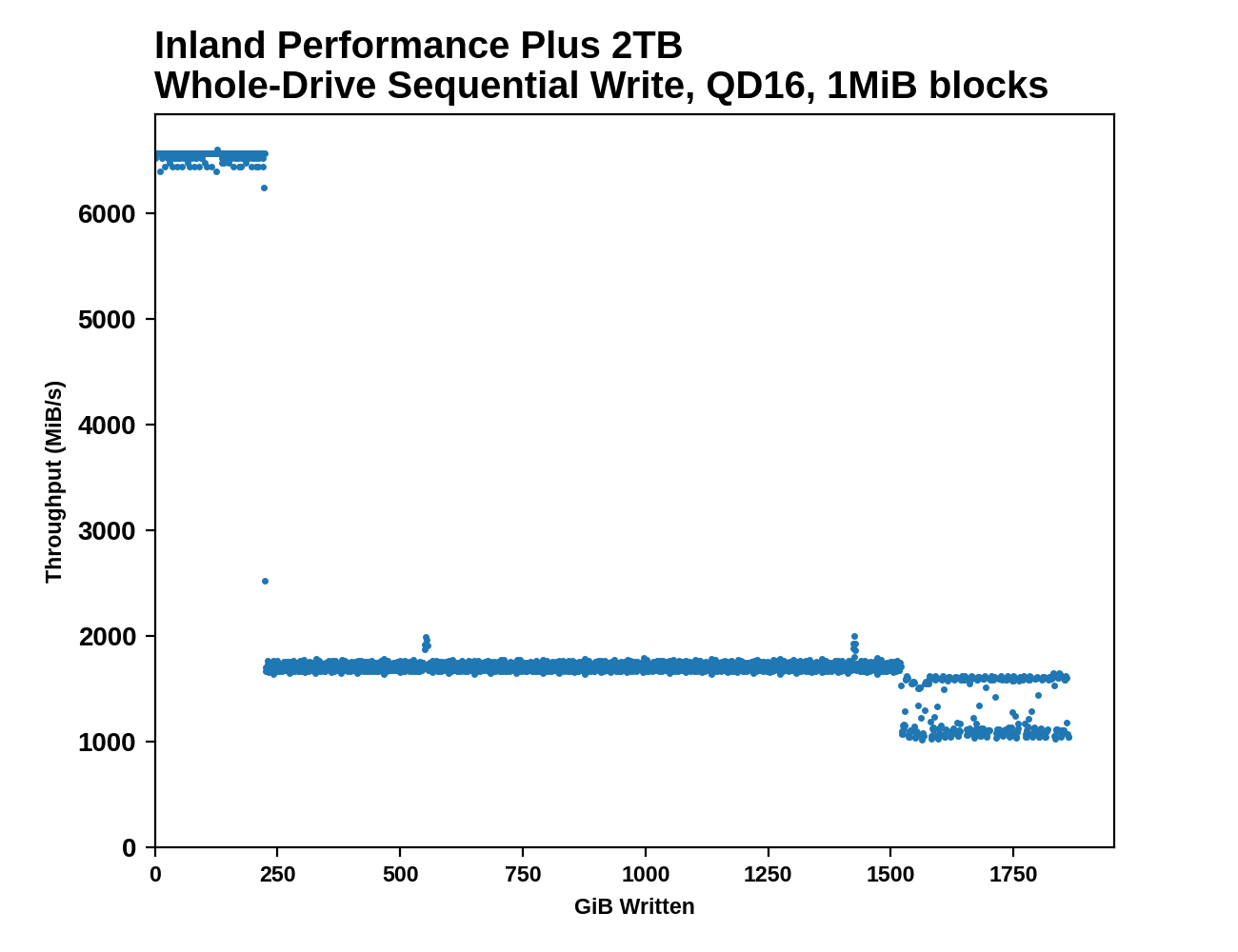

The SLC write cache on the 2TB Inland Performance Plus lasts for about 225GB on the first pass (about the same cache size as the Samsung 980 PRO, but a bit faster), and about 55GB on the second pass when the drive is already full. Performance during each phase of filling the drive is quite consistent, with the only significant variability showing up after the drive is 80% full. Sequential write performance during the SLC cache phase is higher than any other drive we've tested to date.

Chart tabs: Average Throughput for last 16 GB, Overall Average Throughput

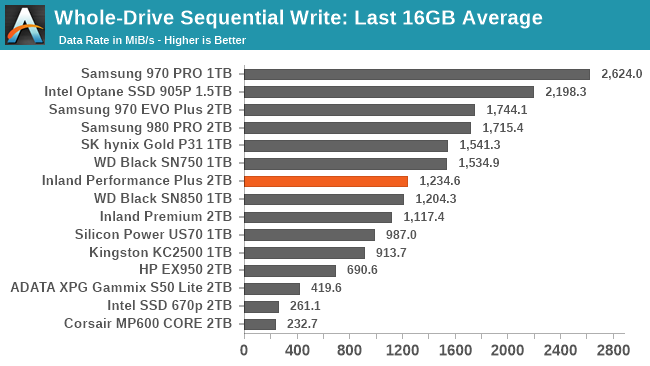

The post-cache performance is a bit slower than the fastest TLC drives, but overall average throughput is comparable to other top TLC drives. The Inland Performance Plus is still significantly slower than the MLC and Optane drives that didn't need a caching layer, but one or two more generational improvements in NAND performance may be enough to overcome that difference.

Working Set Size


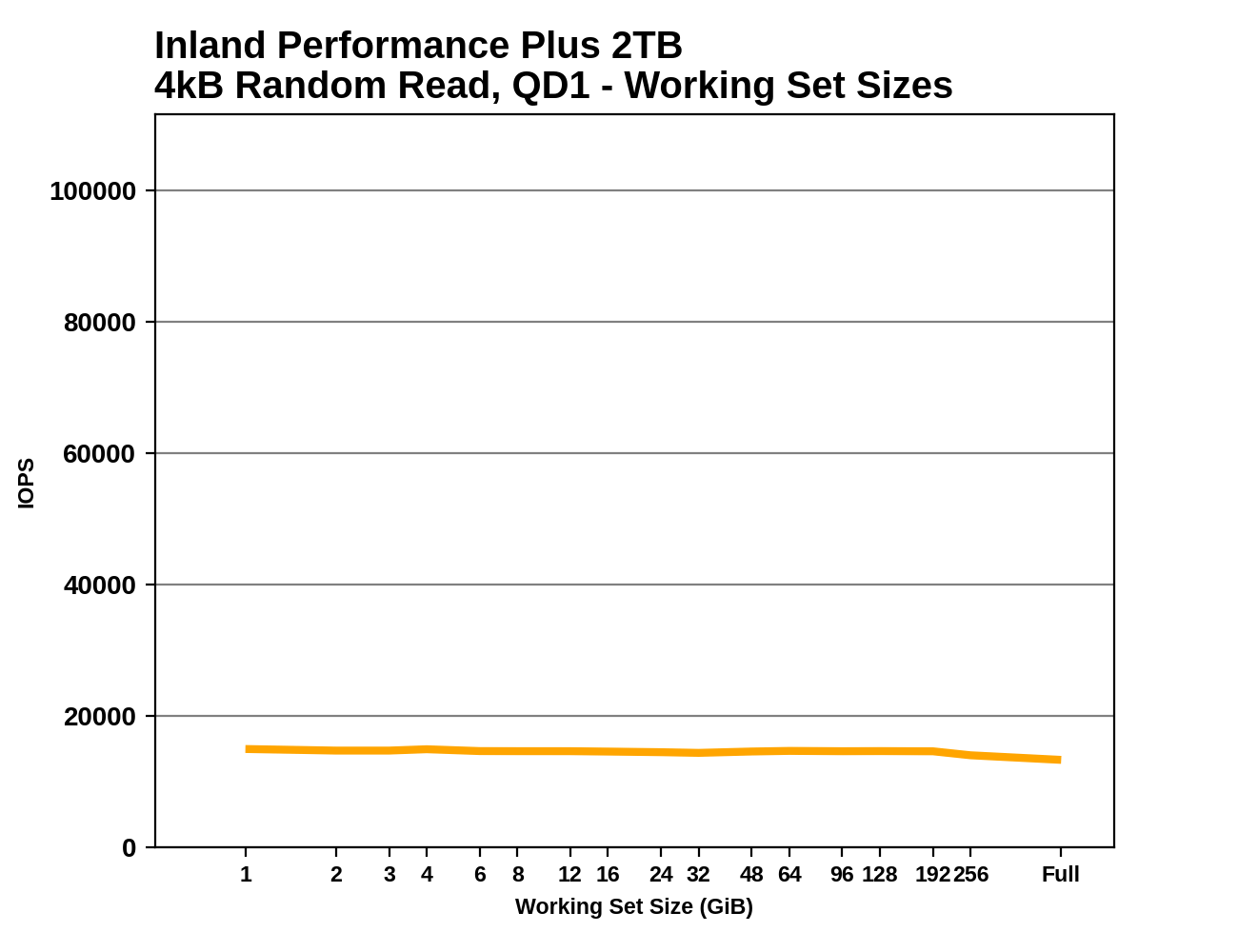

As expected from a high-end drive with a full-sized DRAM buffer, the random read latency from the Inland Performance Plus is nearly constant regardless of the working set size. There's a slight drop in performance when random reads are covering the entire range of the drive, but it's smaller than the drop we see from drives that skimp on DRAM.

Performance vs Block Size

Chart tabs: Random Read, Random Write, Sequential Read, Sequential Write

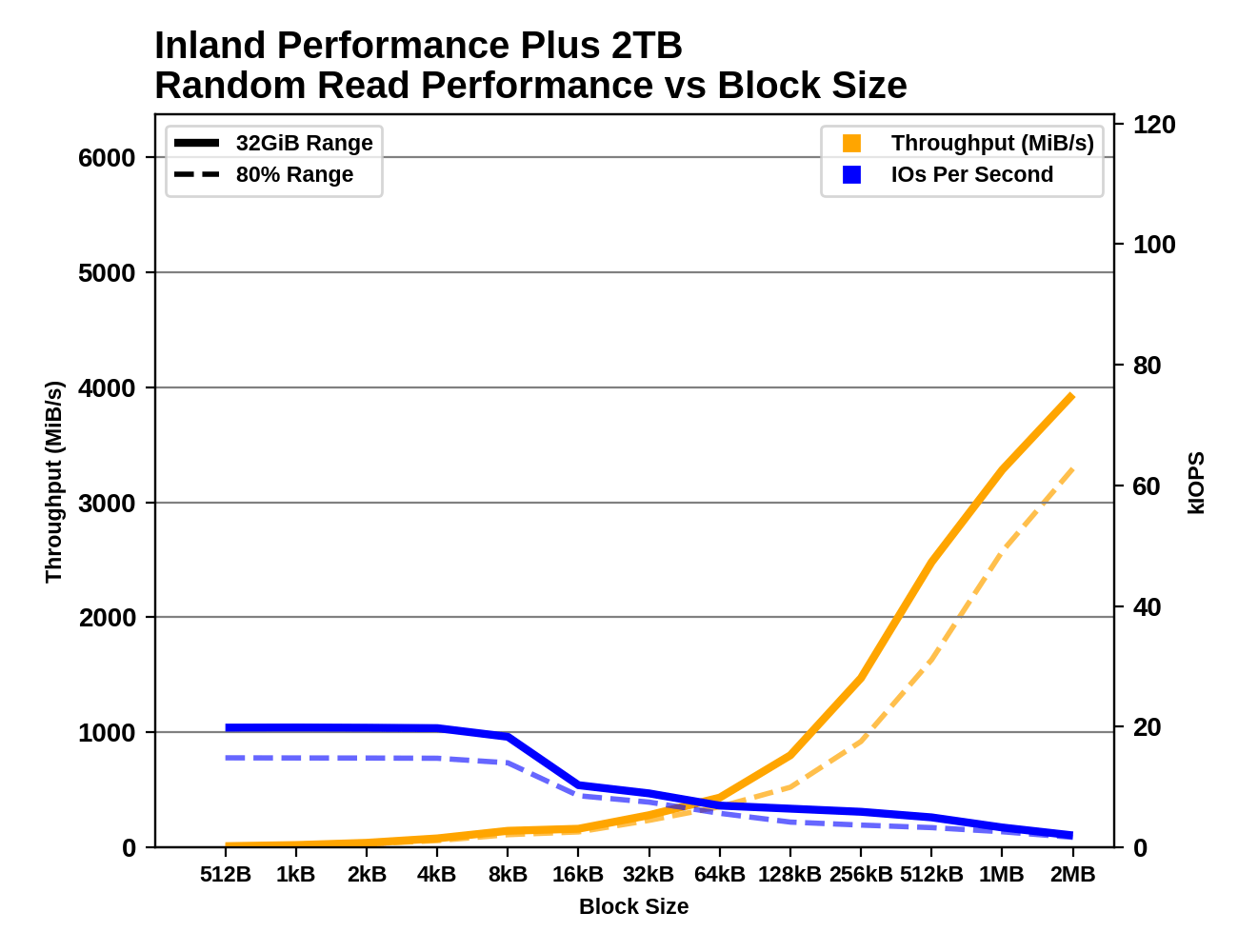

There are no big surprises from testing the Inland Performance Plus with varying block sizes. The Phison E18 controller has no problem handling block sizes smaller than 4kB. The random write results are a little rough, especially when testing the drive at 80% full, but it's hardly the only drive to have SLC cache troubles here. Like many other drives, the sequential read performance doesn't scale smoothly with the larger block sizes, and the drive really needs a larger queue depth or a very large block size to deliver great sequential read performance.

118 Comments


mode_13h - Sunday, May 16, 2021

> programs were doing their own thing, till OS's began to clamp down.

DOS was really PCs' biggest Achilles heel. It wasn't until Windows 2000 that MS finally offered a mainstream OS that really provided all the protections available since the 386 (some, even dating back to the 286).

Even then, it took them 'till Vista to figure out that ordinary users having admin privileges was a bad idea.

In the Mac world, Apple was doing even worse. I was shocked to learn that MacOS had *no* memory protection until OS X! Of course, OS X is BSD-derived and a fully-decent OS.

FunBunny2 - Monday, May 17, 2021

"I was shocked to learn that MacOS had *no* memory protection until OS X!"

IIRC, until Apple went the *nix way, it was just co-operative multi-tasking, which is worth a box of Kleenex.

Oxford Guy - Tuesday, May 18, 2021

Apple had protected memory long before Microsoft did, and before Motorola had made a non-buggy, well-functioning MMU to get it working at good speed.

One of the reasons the Lisa platform was slow was that Apple had to kludge protected memory support.

The Mac was originally envisioned as a $500 home computer, which was just above toy pricing in those days. It wasn’t designed to be a minicomputer on one’s desk like the Lisa system, which also had a bunch of other data-safety features like ECC and redundant storage of file system data/critical files — for hard disks and floppies.

The first Mac had a paltry amount of RAM, no hard disk support, no multitasking, no ECC, no protected memory, worse resolution, a poor-quality file system, etc. But, it did have a GUI that was many many years ahead of what MS showed itself to be capable of producing.

mode_13h - Tuesday, May 18, 2021

> Apple had protected memory long before Microsoft did

I mean in a mainstream product, enabled by default. Through MacOS 8, Apple didn't even enable virtual memory by default!

> The first Mac

I'm not talking about the first Mac. I'm talking about the late 90's, when Macs were PowerPC-based and MS had Win 9x & NT 4. Linux was already at 2.x (with SMP-support), BeOS was shipping, and OS/2 was sadly well on its way out.

mode_13h - Sunday, May 16, 2021

> C has been described as the universal assembler.

It was created as a cross-platform alternative to writing operating systems in assembly language!

> a C program can be blazingly fast, if the code treats the machine as a Control Program would.

No, that's just DOS. C came out of the UNIX world, where C programs are necessarily as well-behaved as anything else. The distinction you're thinking of is really DOS vs. real operating systems!

> I'm among those who spent more time than I wanted, editing with Norton Disk Doctor.

That's cuz you be on those shady BBS' dog!

mode_13h - Sunday, May 16, 2021

> I think there's been a view inculcated against C++

C++ is a messy topic, because it's been around for so long. It's a little hard to work out what someone means by it. STL, C++11, and generally modern C++ style have done a lot to alleviate the grievances many had with it. Before the template facility worked well, inheritance was the main abstraction mechanism. That forced more heap allocations, and the use of virtual functions often defeated compilers' ability to perform function inlining.

It's still the case that C++ tends to hide lots of heap allocations. Where a C programmer would tend to use stack memory for string buffers (simply because it's easiest), the easiest thing in C++ is basically to put it on the heap. Now, an interesting twist is that heap overrun bugs are both easier to find and less susceptible to exploits than on stack. So, what used to be seen as a common inefficiency of C++ code is now regarded as providing reliability and security benefits.
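As a rough illustration of the stack-versus-heap habit described above, here is a minimal sketch. The function names `greet_stack` and `greet_heap` are invented for this example and do not come from any particular codebase.

```cpp
#include <cstddef>
#include <cstdio>
#include <string>

// C style: the caller supplies a buffer, typically an array on the stack.
// snprintf truncates rather than overrunning; returns true if everything fit.
bool greet_stack(char* out, std::size_t out_size, const char* name) {
    int n = std::snprintf(out, out_size, "hello, %s", name);
    return n >= 0 && static_cast<std::size_t>(n) < out_size;
}

// C++ style: std::string sizes itself, usually allocating on the heap once
// the text outgrows any small-string buffer the library may provide.
std::string greet_heap(const std::string& name) {
    return "hello, " + name;
}
```

The stack version costs no allocation but pushes the size bookkeeping onto the caller; the `std::string` version hides the allocation and the bookkeeping together.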

Another thing I've noticed about C code is that it tends to do a lot of work in-place, whereas C++ does more copying. This makes C++ easier to debug, and compilers can optimize away some of those copies, but it does work to the benefit of C. The reason is simple: if a C programmer wants to copy anything beyond a built-in datatype, they have to explicitly write code to do it. In C++ the compiler generally emits that code for you.

The last point I'll mention is restricted pointers. C has them (since C99), while C++ left them out. Allegedly, nearly all of the purported performance benefits of Fortran disappear, when compared against C written with restricted pointers. That said, every C++ compiler I've used has a non-standard extension for enabling them.
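A small sketch of what such an extension looks like in practice, assuming a compiler that accepts the non-standard `__restrict` spelling (GCC, Clang and MSVC all do). The function `scale_add` is a made-up example.

```cpp
#include <cstddef>

// __restrict is a compiler extension, not standard C++. It is a promise from
// the programmer that dst and src never overlap, which lets the optimizer
// keep values in registers and vectorize the loop more freely.
// Calling this with overlapping ranges is undefined behavior.
void scale_add(float* __restrict dst, const float* __restrict src,
               float k, std::size_t n) {
    for (std::size_t i = 0; i < n; ++i) {
        dst[i] += k * src[i];
    }
}
```

Whether the qualifier changes the generated code depends on the compiler and optimization level, so it is worth checking the output before relying on it.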

> if C++, do things in an excessive object-oriented way

Before templates came into more common use, and especially before C++11, you would typically see people over-relying on inheritance. Since then, it's a lot more common to see functional-style code. When the two styles are mixed judiciously, the combination can be very powerful.

GeoffreyA - Monday, May 17, 2021

Yes! I was brought up like that, using inheritance, though templates worked as well. Generally, if a class had some undefined procedure, it seemed natural to define it as a pure virtual function (or even a blank body), and let the inherited class define what it did. Passing a function object, using templates, was possible but felt strange. And, as you said, virtual functions came at a cost, because they had to be resolved at run-time.

Concerning allocation on the heap, oh yes, another concern back then because of its overhead. Arrays on the stack are so fast (and combine those buggers with memcpy or memmove, and one's code just burns). I first started off using string classes, but as I went on, switched to char/wchar_t buffers as much as possible, and that meant you ended up writing a lot of string functions to do x, y, z. And learning about buffer overruns, had to go back and rewrite everything, so buffer sizes were respected. (Unicode brought more hassle too.)

"whereas C++ does more copying"

I think it's a tendency in C++ code, too much is returned by value/copy, simply because of ease. One can even be guilty of returning a whole container by value, when the facility is there to pass by reference or pointer. But I think the compiler can optimise a lot of that away. Still, not good practice.

mode_13h - Tuesday, May 18, 2021

> though templates worked as well

It actually took a while for compilers (particularly MSVC) to be fully-conformant in their template implementations. That's one reason they took longer to catch on -- many programmers had gotten burned in early attempts to use templates.

> Passing a function object, using templates, was possible but felt strange.

Templates give you another way to factor out common code, so that you don't have to force otherwise unrelated data types into an inheritance relationship.
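To make the contrast concrete, here is a hedged sketch of the same small job written both ways. All names (`Transform`, `Doubler`, `sum_virtual`, `sum_with`) are hypothetical, chosen only for illustration.

```cpp
#include <vector>

// Inheritance style: the varying step is a pure virtual function,
// resolved at run time through the vtable.
struct Transform {
    virtual ~Transform() = default;
    virtual int apply(int x) const = 0;
};

struct Doubler : Transform {
    int apply(int x) const override { return 2 * x; }
};

int sum_virtual(const std::vector<int>& v, const Transform& t) {
    int total = 0;
    for (int x : v) total += t.apply(x);
    return total;
}

// Template style: any callable works, no common base class is required,
// and the call is visible to the compiler, which makes inlining easier.
template <typename F>
int sum_with(const std::vector<int>& v, F f) {
    int total = 0;
    for (int x : v) total += f(x);
    return total;
}
```

With the template version, a lambda such as `[](int x) { return 2 * x; }` can be passed directly, so unrelated types never have to share a base class just to be usable here.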

> I think it's a tendency in C++ code, too much is returned by value/copy, simply because of ease.

Oh yes. It's clean, side effect-free and avoids questions about what happens to any existing container elements.

> One can even be guilty of returning a whole container by value, when the facility is there to pass by reference or pointer. But I think the compiler can optimise a lot of that away.

It's called (N)RVO and C++11 took it to a new level, with the introduction of move-constructors.

> Still, not good practice.

In a post-C++11 world, it's now preferred. The only time I avoid it is when I need a function to append some additional values to a container. Then, it's most efficient to pass in a reference to the container.
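A minimal sketch of the two cases described above, with invented function names `make_squares` and `append_squares`:

```cpp
#include <cstddef>
#include <vector>

// Post-C++11 default: build the container locally and return it by value.
// NRVO or, failing that, a move keeps this cheap; the elements are not
// copied one by one.
std::vector<int> make_squares(int n) {
    std::vector<int> out;
    if (n > 0) out.reserve(static_cast<std::size_t>(n));
    for (int i = 1; i <= n; ++i) out.push_back(i * i);
    return out;
}

// Appending to a container that already holds values: take it by reference
// so the existing elements stay where they are.
void append_squares(std::vector<int>& out, int n) {
    for (int i = 1; i <= n; ++i) out.push_back(i * i);
}
```

The by-value form also answers the "what happens to existing elements" question by construction, since the result always starts empty.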

GeoffreyA - Wednesday, May 19, 2021

"many programmers had gotten burned in early attempts to use templates"

It could be tricky getting them to work with classes and compile. If I remember rightly, the notation became quite unwieldy.

"C++11 took it to a new level, with the introduction of move-constructors"

Interesting. I suppose those are the counterparts of copy constructors for an object that's about to sink into oblivion. Likely, just a copying over of the pointers (or of all the variables if the compiler handles it)?

mode_13h - Thursday, May 20, 2021

> > "many programmers had gotten burned in early attempts to use templates"
> It could be tricky getting them to work with classes and compile.

I meant that early compiler implementations of C++ templates were riddled with bugs. After people started getting bitten by some of these bugs, I think templates got a bad reputation, for a while.

Apart from that, it *is* a complex language feature that probably could've been done a bit better. Most people are simply template consumers and maybe write a few simple ones.

If you really get into it, templates can do some crazy stuff. Looking up SFINAE will quickly take you down the rabbit hole.
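As a small taste of that rabbit hole, here is a sketch of SFINAE using `std::enable_if` from `<type_traits>`. The function name `describe` is made up for the example.

```cpp
#include <string>
#include <type_traits>

// Selected only when T is an integral type. For any other T, substituting
// into enable_if fails, and the overload quietly drops out of overload
// resolution instead of causing a compile error.
template <typename T,
          typename std::enable_if<std::is_integral<T>::value, int>::type = 0>
std::string describe(T) {
    return "integral";
}

// The complementary overload, selected for every non-integral T.
template <typename T,
          typename std::enable_if<!std::is_integral<T>::value, int>::type = 0>
std::string describe(T) {
    return "something else";
}
```

Calling `describe(42)` picks the first overload and `describe(3.5)` picks the second, with the choice made entirely at compile time.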

> If I remember rightly, the notation became quite unwieldy.

I always used a few typedefs, to deal with that. Now, C++ expanded the "using" keyword to serve as a sort of templatable typedef. The repurposed "auto" keyword is another huge help, although some people definitely use it too liberally.
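A brief sketch of those three tools side by side; `IntTable`, `Table` and `count_values` are names invented for this example.

```cpp
#include <cstddef>
#include <map>
#include <string>
#include <vector>

// Classic typedef: names exactly one concrete type.
typedef std::map<std::string, std::vector<int> > IntTable;

// C++11 alias template: a typedef that can itself take template parameters.
template <typename T>
using Table = std::map<std::string, std::vector<T>>;

// auto saves spelling out the map's const_iterator type by hand.
template <typename T>
std::size_t count_values(const Table<T>& t) {
    std::size_t n = 0;
    for (auto it = t.begin(); it != t.end(); ++it) {
        n += it->second.size();
    }
    return n;
}
```

Here `IntTable` and `Table<int>` name the same type, so either can be passed to `count_values`.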