A Look At Triple-GPU Performance And Multi-GPU Scaling, Part 1

by Ryan Smith on April 3, 2011 7:00 AM EST

The Test, Power, Temps, and Noise

| Component | Details |
| --- | --- |
| CPU: | Intel Core i7-920 @ 3.33GHz |
| Motherboard: | Asus Rampage II Extreme |
| Chipset Drivers: | Intel 9.1.1.1015 (Intel) |
| Hard Disk: | OCZ Summit (120GB) |
| Memory: | Patriot Viper DDR3-1333 3x2GB (7-7-7-20) |
| Video Cards: | AMD Radeon HD 6990, AMD Radeon HD 6970, PowerColor Radeon HD 6970, EVGA GeForce GTX 590 Classified, NVIDIA GeForce GTX 580, Zotac GeForce GTX 580 |
| Video Drivers: | NVIDIA ForceWare 266.58, AMD Catalyst 11.4 Preview |
| OS: | Windows 7 Ultimate 64-bit |

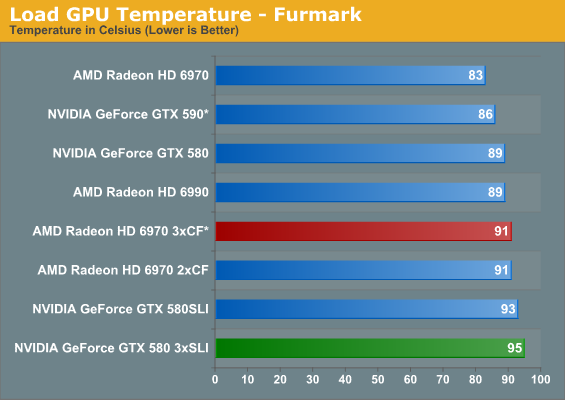

With that out of the way, let’s start our look at power, temperature, and noise. We did include our jury-rigged triple-CF setup in these results for the sake of a comparison point, but please keep in mind that we’re not using a viable long-term setup, which is why we have starred the results. These results also include the GTX 590 from last week, which has its own handicap under FurMark due to NVIDIA’s OCP. This does not apply to the triple SLI setup, on which we can bypass OCP.

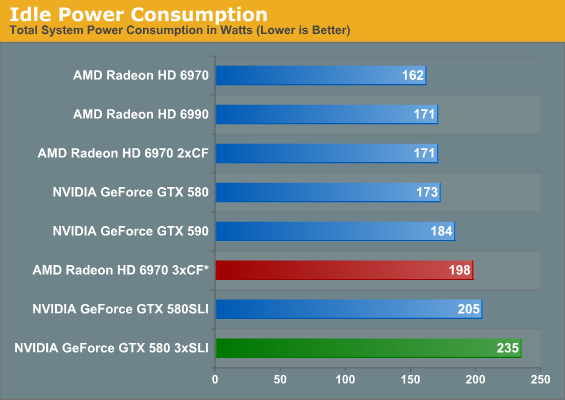

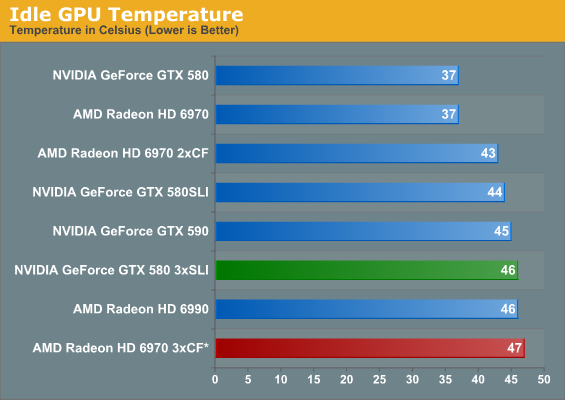

Given NVIDIA’s higher idle power consumption, there shouldn’t be any surprises here. Three GTX 580s in SLI make for a fairly wide gap of 37W. In fact, even two GTX 580s in SLI still draw 7W more than the triple 6970 setup. Multi-GPU configurations are always going to be a limited market opportunity, but if it were possible to completely power down unused GPUs, it would certainly improve the idle numbers.

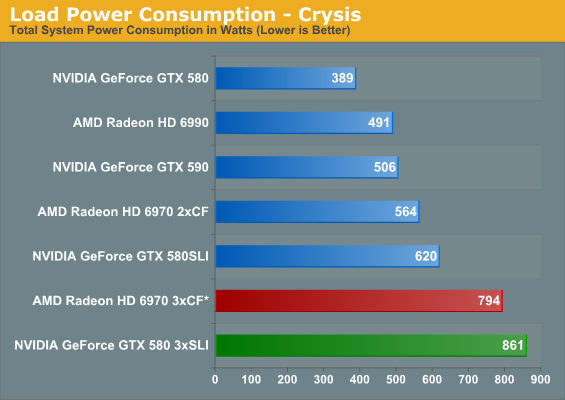

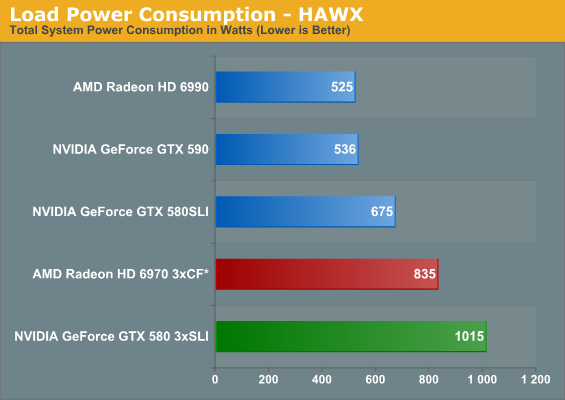

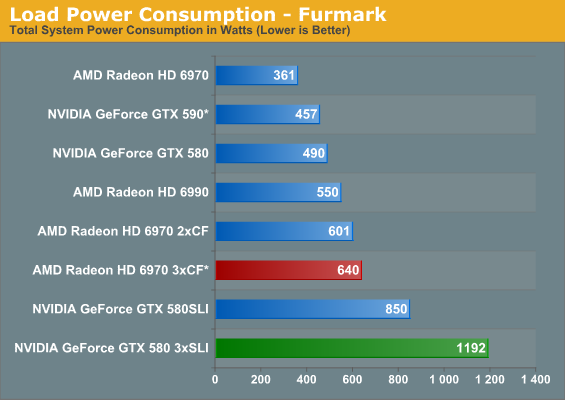

With up to three GPUs, power consumption under load gets understandably high. For FurMark in particular we see the triple GTX 580 setup come just shy of 1200W due to our disabling of OCP – it’s an amusingly absurd number. Meanwhile the triple 6970 setup picks up almost nothing over the dual 6970, which is clearly a result of AMD’s drivers not having a 3-way CF profile for FurMark. Thus the greatest power load we can place on the triple 6970 is under HAWX, where it pulls 835W.

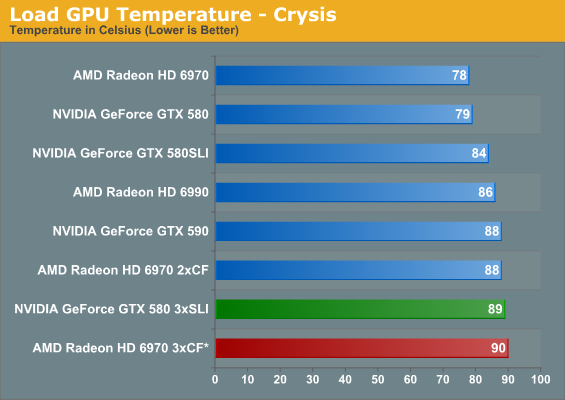

With three cards packed tightly together the middle card ends up having the most difficult time, so it’s that card which is setting the highest temperatures here. Even with that, idle temperatures only tick up a couple of degrees in a triple-GPU configuration.

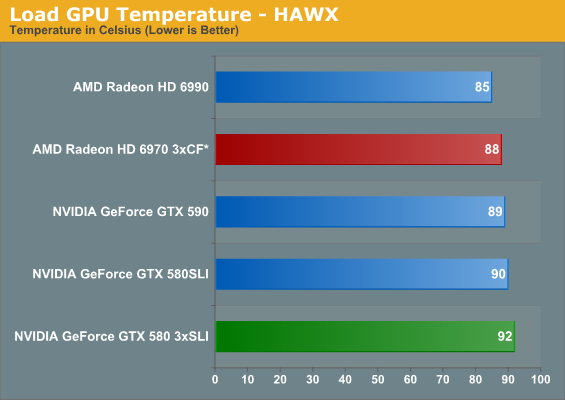

Even when we forcibly wedge the 6970s apart, the triple 6970 setup still ends up being the warmest under Crysis – this being after Crysis temperatures dropped 9C from the separation. Meanwhile the triple GTX 580 gets quite warm on its own, but under Crysis and HAWX it’s nothing we haven’t seen before. FurMark is the only outlier here, where temperatures stabilized at 95C, 2C under GF110’s thermal threshold. It’s safe, but I wouldn’t recommend running FurMark all day just to prove it.

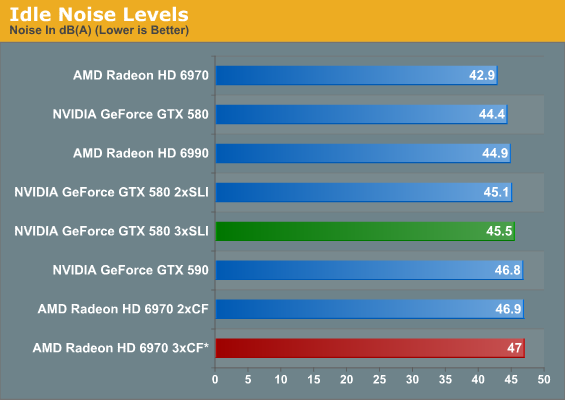

With a 3rd card in the mix idle noise creeps up some, but much like idle temperatures it’s not significantly more. For some perspective though, we’re still looking at idle noise levels equivalent to the GTX 560 Ti running FurMark, so it’s by no means a silent operation.

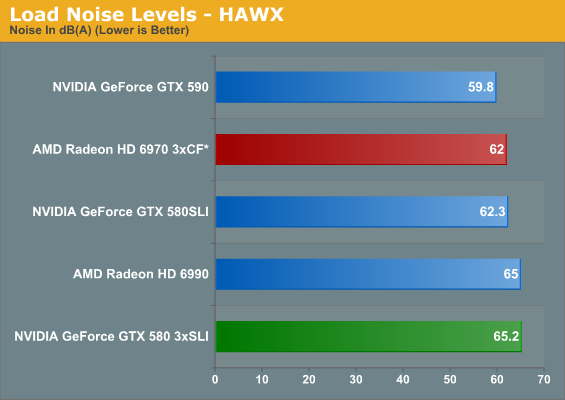

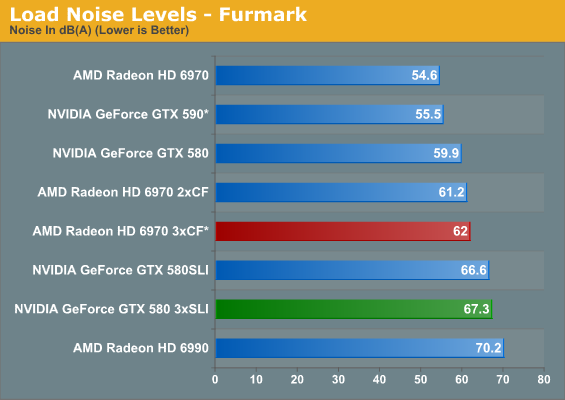

It turns out adding a 3rd card doesn’t make all that much more noise. Under HAWX the triple GTX 580 setup does get 3dB louder, but under FurMark the difference is under a dB. The triple 6970 setup does better in both situations, but that has more to do with our jury-rigging and the fact that FurMark doesn’t scale with a 3rd AMD GPU. Amusingly the triple 580 setup is still quieter under FurMark than the 6990 by nearly 3dB even though we’ve disabled OCP for the GTX 580, and for HAWX the difference is only 0.2dB in AMD’s favor. It’s simply not possible to do worse than the 6990 without overvolting/overclocking, it seems.
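For a sense of scale on those decibel figures, here is a minimal sketch. It assumes the readings are ordinary logarithmic sound-level measurements, where a difference of Δ dB corresponds to a sound-power ratio of 10^(Δ/10). The function name `db_to_power_ratio` is purely illustrative and is not part of our test methodology.

```python
def db_to_power_ratio(delta_db: float) -> float:
    """Convert a level difference in decibels to a sound-power ratio.

    Assumption: the standard definition delta_db = 10 * log10(P2 / P1).
    """
    return 10 ** (delta_db / 10)


# Differences of the size discussed above: 0.2dB, about 1dB, and 3dB.
for delta in (0.2, 1.0, 3.0):
    print(f"{delta:>4} dB -> {db_to_power_ratio(delta):.2f}x sound power")
```

By that conversion a 3dB increase is roughly a doubling of sound power, while 0.2dB is a change of about 5%. Perceived loudness does not track sound power linearly, so these are physical ratios rather than a measure of how much louder a setup sounds.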

97 Comments


masterchi - Sunday, April 3, 2011 - link

"Going from 1 GPU to 2 GPUs also gives AMD a minor advantage, with the average gain being 182% for NVIDIA versus 186% for AMD"

This should be 78.6 and 87.7, respectively.

Ryan Smith - Sunday, April 3, 2011 - link

To be clear, there I'm only including the average FPS (and not the min FPS) of all the games except HAWX (CPU limited). Performance is as the numbers indicate, 182% and 186% of a single card's performance respectively.

eddman - Sunday, April 3, 2011 - link

Doesn't make sense. Are you saying that, for example, 580 SLI is 2.77 times faster than a single 580?

SagaciousFoo - Sunday, April 3, 2011 - link

Think of it this way: A single 580 card is 100% performance. Two cards equals 186% performance. So the SLI setup is 14% shy of being 2x the performance of a single card.

AnnihilatorX - Sunday, April 3, 2011 - link

This is typical percentile jargon. It's the author's miss, really.

When you say 186% average gain, you mean 2.86 times the performance.

When you say 86% average gain, you mean 186% the performance, and that's what you mean.

The keyword "gain" there is unnecessary and misleading.

80% increase -> x 1.8

180% increase -> x 2.8

SlyNine - Sunday, April 3, 2011 - link

Correct, it should read, 186% of (multiplication) a single card.

But I am making the assumption that gain means addition.

DaFox - Sunday, April 3, 2011 - link

That's unfortunately how it works when it comes to multi GPU scaling. Ryan is just continuing the standard trend set by everyone else in the industry.

Bremen7000 - Sunday, April 3, 2011 - link

No, he's just using misleading wording. The chart should either read "Performance: 186%" or "Performance gain: 86%", etc. This isn't hard.

sigmatau - Monday, April 4, 2011 - link

The OP is correct. You cannot use "gain" and include the 100% of the first card. This is simple percentages.

If I have one gallon of gas in my car and add one gallon, I gain 100%.

I also have a total of 200% of what I had at the start.
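The disagreement in this thread comes down to two different formulas applied to the same measurement. Here is a minimal sketch using hypothetical frame rates (50 fps for one card, 93 fps for two) that are made up for illustration and are not taken from the article's results. The function names are likewise illustrative.

```python
def relative_performance(single_fps: float, multi_fps: float) -> float:
    """Multi-GPU performance as a percentage OF single-card performance."""
    return multi_fps / single_fps * 100


def performance_gain(single_fps: float, multi_fps: float) -> float:
    """Percentage gained OVER single-card performance."""
    return (multi_fps - single_fps) / single_fps * 100


# Hypothetical numbers, chosen only to illustrate the two conventions.
single, dual = 50.0, 93.0
print(f"Performance: {relative_performance(single, dual):.0f}% of one card")
print(f"Performance gain: {performance_gain(single, dual):.0f}%")
```

With those made-up inputs the same result reads as 186% of single-card performance or as an 86% gain. The two figures always differ by exactly 100 percentage points, which is why the label attached to the number matters.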

Kaboose - Sunday, April 3, 2011 - link

Finally, it has taken awhile but finally and thank you!!! Multi-monitor is exactly what we need in these reviews, especially the 6990 and 590 reviews.