The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST - Posted in

- GPUs

- AMD

- Microsoft

- NVIDIA

- DirectX 12

Star Swarm & The Test

For today’s DirectX 12 preview, Microsoft and Oxide Games have supplied us with a newer version of Oxide’s Star Swarm demo. Originally released in early 2014 as a demonstration of Oxide’s Nitrous engine and the capabilities of Mantle, Star Swarm is a massive space combat demo that is designed to push the limits of high-level APIs and demonstrate the performance advantages of low-level APIs. Due to its use of thousands of units and other effects that generate a high number of draw calls, Star Swarm can push over 100K draw calls, a massive workload that causes high-level APIs to simply crumple.

Because Star Swarm generates so many draw calls, it is essentially a best-case scenario for low-level APIs, exploiting the fact that high-level APIs can’t effectively spread the draw call workload over several CPU threads. As a result, the performance gains from DirectX 12 in Star Swarm are going to be much greater than in most (if not all) video games. Nonetheless, it’s an effective tool to demonstrate the performance capabilities of DirectX 12 and to show how it can better distribute work over multiple CPU threads.
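To make the threading argument concrete, here is a minimal Python sketch of the general pattern that low-level APIs enable. A frame’s draw calls are split across worker threads, each thread records into its own command list with no shared state, and the lists are then submitted in a fixed order. `CommandList`, `partition`, `record`, and `build_frame` are hypothetical names for illustration only, not Direct3D or Nitrous APIs. In Direct3D 12 the rough equivalents are per-thread `ID3D12GraphicsCommandList` objects handed to `ID3D12CommandQueue::ExecuteCommandLists`. Because of CPython’s global interpreter lock, this sketch shows the structure of the work split, not an actual speedup.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class CommandList:
    """Hypothetical stand-in for a per-thread command list (not a real D3D12 object)."""
    worker_id: int
    commands: list = field(default_factory=list)

    def draw(self, unit_id: int) -> None:
        # Record a draw command; a real API would encode GPU commands here.
        self.commands.append(("draw", unit_id))


def partition(items, num_workers):
    """Split items into num_workers contiguous, nearly equal chunks."""
    size, extra = divmod(len(items), num_workers)
    chunks, start = [], 0
    for i in range(num_workers):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def record(worker_id, unit_ids):
    # Each worker owns its own command list, so recording needs no locking.
    cmd_list = CommandList(worker_id)
    for unit_id in unit_ids:
        cmd_list.draw(unit_id)
    return cmd_list


def build_frame(unit_ids, num_workers):
    """Record draws for all units on num_workers threads, then 'submit' them in order."""
    chunks = partition(list(unit_ids), num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        # pool.map yields results in submission order, keeping draw order deterministic.
        cmd_lists = list(pool.map(record, range(num_workers), chunks))
    # Single ordered submission step, analogous to handing all lists to a queue at once.
    return [cmd for cl in cmd_lists for cmd in cl.commands]
```

With `build_frame(range(10), 4)`, the four workers record 3, 3, 2, and 2 draws respectively, and the combined output preserves the original draw order because `ThreadPoolExecutor.map` returns results in submission order.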

It should be noted that while Star Swarm itself is a synthetic benchmark, the underlying Nitrous engine is relevant and is being used in multiple upcoming games. Stardock is using the Nitrous engine for their forthcoming Star Control game, and Oxide is using the engine for their own game, set to be announced at GDC 2015. So although Star Swarm is still a best-case scenario, many of its lessons will be applicable to these future games.

As for the benchmark itself, we should also note that Star Swarm is a non-deterministic simulation. The benchmark is based on having two AI fleets fight each other, and as a result the outcome can differ from run to run. The good news is that although it’s not a deterministic benchmark, the benchmark’s RTS mode is reliable enough to keep run-to-run variation low and produce reasonably consistent results. Individual runs will still show some fluctuation, but the benchmark reliably demonstrates the larger performance trends.
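Since the simulation can play out differently each time, one common way to separate real performance trends from run-to-run noise is to repeat each test and look at the spread, not just a single number. The following is a minimal Python sketch under that assumption, not a description of the methodology used for this article. `summarize_runs` and `difference_exceeds_noise` are hypothetical helpers, and the `k=2.0` threshold is an arbitrary rule of thumb rather than a formal significance test.

```python
import statistics


def summarize_runs(fps_runs):
    """Return (mean, sample stdev, coefficient of variation in %) for repeated average-FPS results."""
    if len(fps_runs) < 2:
        raise ValueError("need at least two runs to estimate run-to-run variation")
    mean = statistics.mean(fps_runs)
    stdev = statistics.stdev(fps_runs)  # sample standard deviation (n - 1 denominator)
    return mean, stdev, 100.0 * stdev / mean


def difference_exceeds_noise(runs_a, runs_b, k=2.0):
    """Hypothetical rule of thumb, not a formal statistical test:
    treat a gap between two configurations as a real trend only if the gap
    in mean FPS exceeds k times the noisier configuration's standard deviation."""
    mean_a, stdev_a, _ = summarize_runs(runs_a)
    mean_b, stdev_b, _ = summarize_runs(runs_b)
    return abs(mean_a - mean_b) > k * max(stdev_a, stdev_b)
```

Reporting the coefficient of variation alongside the mean makes it easy to see whether a given gap between two configurations is larger than the benchmark’s own run-to-run noise.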

The Test

For today’s preview Microsoft, NVIDIA, and AMD have provided us with the necessary WDDM 2.0 drivers to enable DirectX 12 under Windows 10. The NVIDIA driver is 349.56 and the AMD driver is 15.200. At this time we do not know when these early WDDM 2.0 drivers will be released to the public, though we would be surprised not to see them released by the time of GDC in early March.

In terms of bugs and other known issues, Microsoft has informed us that there are some known memory and performance regressions in the current WDDM 2.0 path that have since been fixed in interim builds of Windows. In particular the WDDM 2.0 path may see slightly lower performance than the WDDM 1.3 path for older drivers, and there is an issue with memory exhaustion. For this reason Microsoft has suggested that a 3GB card is required to use the Star Swarm DirectX 12 binary, although in our tests we have been able to run it on 2GB cards seemingly without issue. Meanwhile DirectX 11 deferred context support is currently broken in the combination of Star Swarm and NVIDIA's drivers, causing Star Swarm to immediately crash, so these results are with D3D 11 deferred contexts disabled.

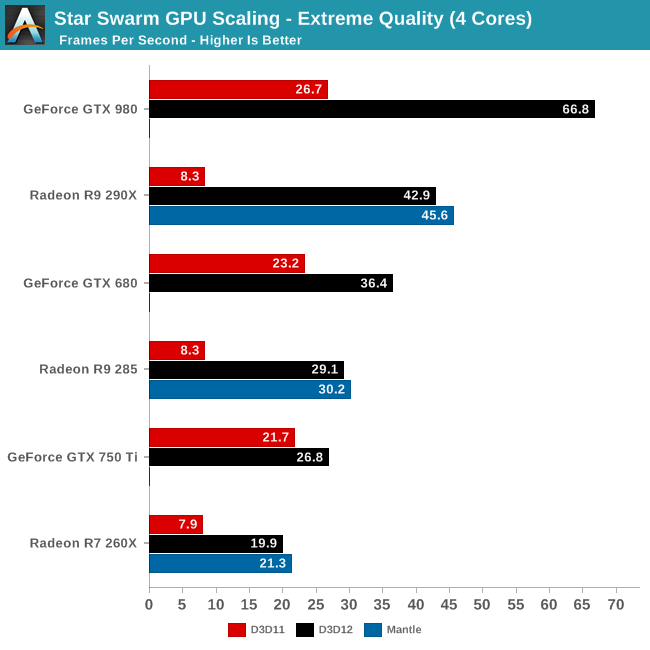

For today’s article we are looking at a small range of cards from both AMD and NVIDIA to showcase both performance and compatibility. For NVIDIA we are looking at the GTX 980 (Maxwell 2), GTX 750 Ti (Maxwell 1), and GTX 680 (Kepler). For AMD we are looking at the R9 290X (GCN 1.1), R9 285 (GCN 1.2), and R7 260X (GCN 1.1). As we mentioned earlier, support for Fermi and GCN 1.0 cards will be forthcoming in future drivers.

Meanwhile on the CPU front, to showcase the performance scaling of Direct3D, we are running the bulk of our tests on our GPU testbed with three different CPU configurations that roughly emulate high-end Core i7 (6 cores), Core i5 (4 cores), and Core i3 (2 cores) processors. Unfortunately we cannot control for our 4960X’s L3 cache size; however, that should not be a significant factor in these benchmarks.

DirectX 12 Preview CPU Configurations (i7-4960X)

| Configuration | Emulating |
|---|---|
| 6C/12T @ 4.2GHz | Overclocked Core i7 |
| 4C/4T @ 3.8GHz | Core i5-4670K |
| 2C/4T @ 3.8GHz | Core i3-4370 |

Though not included in this preview, AMD’s recent APUs should slot between the 2-core and 4-core configurations thanks to the design of AMD’s CPU modules.

| CPU: | Intel Core i7-4960X @ 4.2GHz |
|---|---|
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 290X, AMD Radeon R9 285, AMD Radeon R7 260X, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 750 Ti, NVIDIA GeForce GTX 680 |
| Video Drivers: | NVIDIA Release 349.56 Beta, AMD Catalyst 15.200 Beta |
| OS: | Windows 10 Technical Preview 2 (Build 9926) |

Finally, while we’re going to take a systematic look at DirectX 12 from both a CPU standpoint and a GPU standpoint, we may as well answer the first question on everyone’s mind: does DirectX 12 work as advertised? The short answer: a resounding yes.

245 Comments


lilmoe - Friday, February 6, 2015

These tests should totally NOT be done on a Core i7....

tipoo - Friday, February 6, 2015

He also has an i5 and i3 in there...?

Gigaplex - Sunday, February 8, 2015

He has an i7 with some cores disabled to simulate i5 and i3.

ColdSnowden - Friday, February 6, 2015

Why do AMD radeons have a much slower batch submission time? Does that mean that using an Nvidia card with a faster batch submission time can lessen cpu bottlenecking, even if DX11 is held constant?

junky77 - Friday, February 6, 2015

Also, what about testing with AMD APUs and/or CPUs.. this could make a change in this area

WaltC - Friday, February 6, 2015

Very good write-up! My own thought about Mantle is that AMD pushed it to light a fire under Microsoft and get the company stimulated again as to D3d and the Windows desktop--among other things. Prior to AMD's Mantle announcement it wasn't certain if Microsoft was ever going to do another version of D3d--the scuttlebutt was "No" from some quarters, as hard as that was to believe. At any rate, as a stimulus it seems to have worked as a couple of months after the Mantle announcement Microsoft announces D3d/DX12, the description of which sounded suspiciously like Mantle. I think that as D3d moves ahead and continues to develop that Mantle will sort of hit the back burners @ AMD and then, like an old soldier, just fade away...;) Microsoft needs to invest heavily in DX/D3d development in the direction they are moving now, and I think as the tablet fad continues to wane and desktops continue to rebound that Microsoft is unlikely to forget its core strengths again--which means robust development for D3d for many years to come. Maximizing hardware efficiencies is not just great for lower-end PC gpus & cpus, it's also great for xBone & Microsoft's continued push into mobile devices. Looks like clear sailing ahead...;)

Ryan Smith - Saturday, February 7, 2015

"Prior to AMD's Mantle announcement it wasn't certain if Microsoft was ever going to do another version of D3d"

Although I don't have access to the specific timelines, I would strongly advise against conflating the public API announcements with development milestones.

Mike Mantor (AMD) and Max McMullen (MS) may not go out and get microbrews together, but all of the major players in this industry are on roughly the same page. DX12 would have been on the drawing board before the public announcement of Mantle, though I couldn't say which API was on the drawing board first.

at80eighty - Friday, February 6, 2015

Great article. Loved the "first thoughts" ending

HisDivineOrder - Friday, February 6, 2015

I have to say, I expected Mantle to do a LOT better for AMD than DX12 would simply because it would be more closely tailored to the architecture. I mean, that's what AMD's been saying forever and a day now. It just doesn't look true, though. Perhaps it's that AMD sucks at optimization, because Mantle looks as optimized for AMD architecture as DX11 is in comparison to their overall gains. Meanwhile, nVidia looks like they've been using all this time waiting on DX12 to show up to really hone their drivers to fighting shape.

I guess this is what happens when you fire large numbers of your employees without doing much about the people directing them whose mistakes are the most reflected by flagging sales. Then you go and hire a few big names, trumpet those names, but you don't have the "little people" to back them up and do incredible feats.

Then again, it probably has to do with the fact that nVidia's released a whole new generation of product while AMD's still using the same product from two years ago that they've stretched thin across multiple quarters via delayed releases and LOTS of rebrands and rebundling.

Jumangi - Saturday, February 7, 2015

No it was because Mantle only worked on AMD cards and NVidia has about 2/3 of the discrete graphics card market so most developers never bothered. Mantle never had a chance for widespread adoption.