A Look At Triple-GPU Performance And Multi-GPU Scaling, Part 1

by Ryan Smith on April 3, 2011 7:00 AM EST

It’s been quite a while since we’ve looked at triple-GPU CrossFire and SLI performance – or, for that matter, at GPU scaling in depth. While NVIDIA in particular likes to promote multi-GPU configurations as a price-practical upgrade path, such configurations are still almost always the domain of the high-end gamer. At $700 we have the recently launched GeForce GTX 590 and Radeon HD 6990, dual-GPU cards whose existence hinges on how well games scale across multiple GPUs. Beyond that we move into the truly exotic: triple-GPU configurations using three single-GPU cards, and quad-GPU configurations using a pair of the aforementioned dual-GPU cards. If you have the money, NVIDIA and AMD will gladly sell you upwards of $1500 in video cards to maximize your gaming performance.

These days multi-GPU scaling is a given – at least to some extent. Below the price of a single high-end card, our recommendation is always going to be to get a bigger card before you get more cards: multi-GPU scaling is rarely perfect, and with cutting-edge games there’s often a lag between a game’s release and the release of a driver profile that enables multi-GPU scaling. Once we’re looking at the Radeon HD 6900 series or the GF110-based GeForce GTX 500 series, though, going faster is no longer an option, and thus we have to look at going wider.

Today we’re going to be looking at the state of GPU scaling for dual-GPU and triple-GPU configurations. While we accept that multi-GPU scaling will rarely (if ever) hit 100%, just how much performance are you getting out of that 2nd or 3rd GPU versus how much money you’ve put into it? That’s the question we’re going to try to answer today.
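Two simple ways to put numbers on the "performance versus money" question are scaling efficiency (the multi-GPU frame rate divided by the ideal of N times the single-GPU frame rate) and the price of the card you just added divided by the frame rate it actually added. The Python sketch below computes both; the function names are hypothetical, and the frame rates and $500 card price in the usage lines are made-up placeholders, not results from this article.

```python
def scaling_efficiency(fps_single: float, fps_multi: float, gpu_count: int) -> float:
    """Fraction of ideal linear scaling achieved; 1.0 means perfect scaling."""
    if fps_single <= 0 or gpu_count < 1:
        raise ValueError("need a positive single-GPU frame rate and at least one GPU")
    return fps_multi / (fps_single * gpu_count)


def dollars_per_added_fps(card_price: float, fps_before: float, fps_after: float) -> float:
    """Price of the extra card divided by the frame rate it actually added."""
    gain = fps_after - fps_before
    if gain <= 0:
        return float("inf")  # the extra card bought nothing in this game
    return card_price / gain


if __name__ == "__main__":
    # Hypothetical placeholder numbers, not benchmark results.
    fps = {1: 40.0, 2: 72.0, 3: 90.0}
    price = 500.0
    for n in (2, 3):
        eff = scaling_efficiency(fps[1], fps[n], n)
        cost = dollars_per_added_fps(price, fps[n - 1], fps[n])
        print(f"{n} GPUs: {eff:.0%} of ideal, ${cost:.2f} per added fps")
```

With these placeholder figures the second card reaches 90% of ideal scaling and the third only 75%, and the third card costs roughly $28 per added frame per second versus roughly $16 for the second: diminishing returns show up in both measures at once.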

From the perspective of a GPU review, we find ourselves in an interesting situation in the high-end market right now. AMD and NVIDIA just finished their major pushes for this high-end generation, but the CPU market is not in sync. In January Intel launched their next-generation Sandy Bridge architecture, but unlike with the launches of Nehalem and Conroe, the high end was initially passed over. For $330 we can get a Core i7 2600K and crank it up to 4GHz or more, but the platform we get to pair it with is lacking.

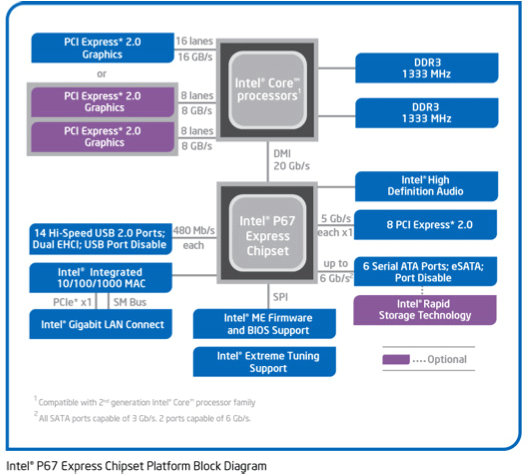

Sandy Bridge only supports a single PCIe x16 link coming from the CPU – an awesome CPU held back by limited off-chip connectivity: DMI and that single PCIe x16 link. For two GPUs we can split that into x8 and x8, which shouldn’t be too bad. But what about three GPUs? With PCIe bridges we can mitigate the issue somewhat by allowing the GPUs to talk to each other at x16 speeds and dynamically allocating CPU-to-GPU bandwidth based on need, but at the end of the day we’re splitting a single x16 link across three GPUs.
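The per-GPU bandwidth arithmetic behind this is easy to sketch. Assuming PCIe 2.0 (5 GT/s per lane with 8b/10b encoding, so 500 MB/s per lane in each direction before protocol overhead) and, as a worst case, a bridge that splits the CPU’s x16 uplink evenly when all three GPUs need it at once, the Python below works out theoretical peaks. The function names are hypothetical, and real throughput is lower than these figures.

```python
# Theoretical PCIe 2.0 bandwidth, per direction, ignoring packet/protocol overhead.
PCIE2_GIGATRANSFERS_PER_S = 5.0   # per lane
ENCODING_EFFICIENCY = 8 / 10      # 8b/10b: 8 payload bits per 10 line bits


def lane_gbytes_per_s() -> float:
    # 5 GT/s * 0.8 = 4 Gbit/s of payload per lane; divide by 8 bits per byte.
    return PCIE2_GIGATRANSFERS_PER_S * ENCODING_EFFICIENCY / 8


def link_gbytes_per_s(lanes: int) -> float:
    """Peak one-direction bandwidth of a link with the given lane count."""
    return lanes * lane_gbytes_per_s()


def worst_case_share(uplink_lanes: int, gpu_count: int) -> float:
    """Per-GPU CPU bandwidth if a bridge splits one uplink evenly under full load."""
    return link_gbytes_per_s(uplink_lanes) / gpu_count


if __name__ == "__main__":
    print(f"x16 link:           {link_gbytes_per_s(16):.2f} GB/s")
    print(f"x8 (two-GPU split): {link_gbytes_per_s(8):.2f} GB/s per GPU")
    print(f"x16 shared by 3:    {worst_case_share(16, 3):.2f} GB/s per GPU")
    print(f"32 lanes total:     {link_gbytes_per_s(32):.2f} GB/s")
```

Under these assumptions the x8/x8 split leaves each of two GPUs 4 GB/s, while three GPUs behind one x16 uplink average about 2.67 GB/s each when they all need it simultaneously. The bridge’s dynamic allocation only helps to the extent that the GPUs’ demands don’t peak at the same moment.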

The alternative is to take a step back and work with Nehalem and the X58 chipset. Here we have 32 PCIe lanes to work with, doubling the amount of CPU-to-GPU bandwidth, but the tradeoff is the CPU. Nehalem and Gulftown are capable chips on their own, but clock for clock the Nehalem architecture is normally slower than Sandy Bridge, and neither chip can clock quite as high on average. Gulftown does offer more cores – 6 versus 4 – but very few games are held back by the number of cores. Instead, games benefit most from maximizing the performance of a few cores.

Later this year Sandy Bridge E will correct this by offering a Sandy Bridge platform with more memory channels, more PCIe lanes, and more cores: the best of both worlds. Until then it comes down to choosing between two platforms: a faster CPU or more PCIe bandwidth. For dual-GPU configurations this should be an easy choice, but for triple-GPU configurations it’s not quite as clear-cut. For now we’re going with the latter by testing on our trusty Nehalem + X58 testbed, which largely eliminates the bandwidth bottleneck at the cost of a potential CPU bottleneck.
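A crude way to picture this tradeoff is to treat the delivered frame rate as the lower of two ceilings: what the CPU can prepare and what the GPUs can render. The Python sketch below assumes, purely for illustration, that each additional GPU adds a fixed fraction of one GPU’s throughput; the function name and every number in it are hypothetical. It shows how two multi-GPU setups with different scaling can still tie once both reach the same CPU ceiling.

```python
def delivered_fps(cpu_limit_fps: float, single_gpu_fps: float,
                  gpu_count: int, extra_gpu_yield: float) -> float:
    """Frame rate as the lower of a CPU ceiling and a GPU ceiling.

    extra_gpu_yield is the assumed fraction of a full GPU's throughput that
    each card beyond the first contributes (1.0 would be perfect scaling).
    """
    gpu_limit = single_gpu_fps * (1 + (gpu_count - 1) * extra_gpu_yield)
    return min(cpu_limit_fps, gpu_limit)


if __name__ == "__main__":
    cpu_limit = 80.0  # hypothetical CPU-bound ceiling
    setup_a = dict(single_gpu_fps=40.0, extra_gpu_yield=0.8)  # hypothetical
    setup_b = dict(single_gpu_fps=42.0, extra_gpu_yield=0.9)  # hypothetical
    for n in (1, 2, 3):
        a = delivered_fps(cpu_limit, gpu_count=n, **setup_a)
        b = delivered_fps(cpu_limit, gpu_count=n, **setup_b)
        print(f"{n} GPU(s): A={a:.1f} fps, B={b:.1f} fps")
```

With these placeholder figures, setup B leads at two GPUs (79.8 vs 72 fps), but both land on exactly 80 fps with three, so only a faster CPU would reveal the difference between them. Real games vary their CPU and GPU load from frame to frame, so this min() model makes the cutoff look sharper than it is.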

Moving on, today we’ll be looking at multi-GPU performance under dual-GPU and triple-GPU configurations; quad-GPU will have to wait. Normally we only have two reference-style cards of any product on hand, so we’d like to thank Zotac and PowerColor for providing a reference-style GTX 580 and Radeon HD 6970 respectively.

97 Comments


marc1000 - Monday, April 4, 2011

Ryan, if at all possible, please include a reference card for the "low point" of performance. We rarely see good tests with mainstream cards, only the top-tier ones. So if you can, please include a Radeon HD 5770 or GTX 460. Two of these cards should have the same performance as one of the big ones, so it would be nice to see how well they work by now.

Ryan Smith - Wednesday, April 6, 2011

These charts were specifically cut short, as the focus was on multi-GPU configurations and so that I could fit more charts on a page. The tests are the same tests we always run, so Bench or a recent article ( http://www.anandtech.com/show/4260/amds-radeon-hd-... ) is always your best buddy.

Arbie - Monday, April 4, 2011

Looking at your results, it seems that at least 99.9% of gaming enthusiasts would need nothing more than a single HD 6970. Never mind the wider population of PC-centric folk who read Anandtech.

More importantly, this isn't going to change for several years. PC game graphics are now bounded by console capabilities, and those advance only glacially. In general, gamers with an HD 6850 (not a typo) or better will have no compelling reason to upgrade until around 2014! I'm very sad to say that, but think it's true.

Of course there is some technical interest in how many more FPS this or that competing architecture can manage, but most of that is a holdover from previous years when these things actually mattered on your desktop. I'm not going to spend $900 to pack two giant cooling and noise problems into my PC for no perceptible benefit. Nor will anyone else, statistically speaking.

The harm in producing such reports is that it spreads the idea that these multi-board configurations still matter. So every high-end motherboard that I consider for my next build packs in slots for two or even three graphics boards, and an NF-200 chip to make sure that third card (!) gets enough bandwidth. The mobos are bigger, hotter, and more expensive than they need to be, and often leave out stuff I would much rather have. Look at the Gigabyte P67A-UD7, for example. Full accommodation for pointless graphics overkill (praised in reviews), but *no* chassis fan controls (too mundane for reviewers to mention).

I'd rather see Anandtech spend time on detailed high-end motherboard comparisons (e.g., Asus Maximus IV vs. others) and components that can actually improve my enthusiast PC experience. Sadly, right now that seems to be limited to SSDs, and you already try hard on those. Are we reduced to... fan controllers?

Thanks,

Arbie

erple2 - Tuesday, April 5, 2011

There are still several games that are not console ports (or destined to be ported to a console) that are interesting to read about and subsequently benchmark. People will continue to complain that PC gaming has been a steady stream of console ports, just as they have since the PSX came out in late '95. The reality is that PC gaming isn't dead, and probably won't die for a long while. While it may be true that EA and company generate most of their revenue from lame console rehash after lame console rehash, and therefore focus almost single-mindedly on that endeavor, there are plenty of other game publishers that aren't following that trend, thereby keeping PC gaming relevant.

The last several motherboard reviews I've seen have more or less convinced me that motherboards just don't matter at all any more. Most (if not all) motherboards of a given chipset don't offer anything performance-wise over competing motherboards.

There are nice features here and there (additional fan headers, more USB ports, more SATA ports), but on the whole there's nothing significant to differentiate one motherboard from another, at least from a performance perspective.

789427 - Monday, April 4, 2011

I would have thought that someone would pay attention to whether throttling was occurring on any of the cards due to thermal overload.

The reason is that, due to differences in case ventilation, layout, and physical card package, the cards will start throttling at different times.

E.g., if the room were at a stinking hot 50C, the more aggressive a card's throttling, the greater its disadvantage.

Conversely, operating the cards at -5C would provide a huge advantage to the card with the worst heat/fan efficiency ratio.

cb

TareX - Monday, April 4, 2011

I'm starting to think it's really getting less and less compelling to be a PC gamer, with all the good games coming out exclusively for consoles.

Thank goodness for Arkham Asylum.

Golgatha - Monday, April 4, 2011

I'd like to see some power, heat, and PPD numbers for running Folding@Home on all these GPUs.

Ryan Smith - Monday, April 4, 2011

The last time I checked, F@H did not have a modern Radeon client. If they did, we'd be using it much more frequently.

karndog - Monday, April 4, 2011

C'mon man, you have an enthusiast rig with $1000 worth of video cards, yet you use a stock i7 at 3.3GHz??

"As we normally turn to Crysis as our first benchmark it ends up being quite amusing when we have a rather exact tie on our hands."

Ummm, probably because you're CPU limited! Upgrade to even a 2500K at 4.5GHz and I bet you'll see the CrossFire setup pull away from the SLI.

karndog - Monday, April 4, 2011

Not trying to make fun of your test rig, if that's all you have access to. I'm just saying that people who are thinking about buying the tri-SLI / CrossFire video card setups reviewed here aren't running their CPUs at stock clock speeds, especially such low ones, which skews the results shown here.