Out of Order Loads done right

Since the Pentium Pro, x86 CPUs have been capable of issuing and executing instructions out of order. However, on average one third of the instructions in those reorder buffers could not be reordered easily: we are talking about loads. Moving loads forward can give a very big boost to performance. Instead of loading a piece of data when you need it, it is much more useful to start the load as early as you can. That way, L1 and even L2-cache latencies can be much more easily hidden.This is pretty easy to understand. Imagine having an ALU operation that needs a certain piece of data but that the data is not available in the L1-cache. If the load has been executed many cycles before the ALU operation needs that piece of data, the L2-cache latency is going to have a reduced impact. Of course, you don't want to load a value which is being or will be written to by a previous - following the program/thread order - store. That would mean you are loading an old value, not the up to date one. Check out the picture below.

Load 2 cannot be moved forward, since it has to wait until the first Store is done. Only after Store 1 is done will variable Y have its correct value. However there is no reason why Load 4 cannot move forward. It doesn't have to wait for Store 3 and store 1 to finish. By moving Load 4 forward, you give the load unit more time to get the right operand, as we assume that after load 4 a calculation with operand Y will happen.

Currently, CPUs will generally delay load 4 when a store is in flight (active). The problem is the address to which the stores will write has yet to be calculated. To be more precise, the memory addresses are still unknown during reordering and scheduling. When a Load micro-op enters the ROB, the memory addresses of previous stores (from the program order) are not known until they pass the AGU (Address Generation Units).

However, the risk that a load will load a value out of an address that is being written to by a store that has yet to be finished is pretty small (1-2%). That is why Jack Doweck of the Core development team decided to allow Loads to go ahead of previous stores, assuming that the load will not be loading information that will be updated by that preceding store. To avoid that the assumption was wrong, a predictor is used to help. The dynamic alias predictor tries to predict whether or not a previous store will write to the same address as the address from which the load - that you want to execute earlier, thus out of order - will load its data.

Based on Jack Doweck's comments and a study of Intel's previous P6 and P-M architectures I drew up the scheme below. Be warned that this is not the official Intel diagram.

The predictor gives the ReOrder Buffer (ROB) the permission to move a load ahead of a store or not. After the Load has been moved ahead and executed, the conflict logic scans the store buffer located in the Memory reOrder Buffer (MOB) to see if any of the stores which were located before the load (following program order) have written to the address of the out of order load. If so, the load must be redone, and the misprediction penalty is about 20 lost cycles. (Note that the branch misprediction penalty is also about 20 cycles). Worst case, the new dynamic alias predictor may slightly reduce performance, but realistically it's four steps forward, one step back, resulting in a net performance boost.

Determining whether a load and a store share the same address is called memory disambiguation. Allowing loads to move ahead of stores gives a big performance boost. In some snippets of benchmarking code, Intel saw up to a 40% performance boost, solely the result of the more flexible way Loads get reordered. It is pretty clear that we won't see this in most real applications, but it is nevertheless impressive and it should show tangible (10-20%) performance boosts together with the fast L2 and L1 cache.

Let us not forget that loads are probably the most important instructions of all. Not only are loads about one third of the micro-ops that are in flight in a x86 CPU, but they can also cause costly stalls when a load needs to go to the L2 cache (or worse, system memory). So how does this super flexible reordering of loads compare with other architectures?

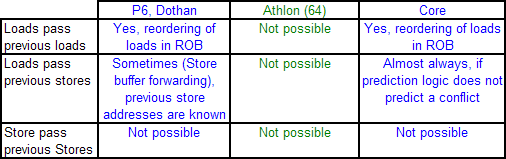

The P6 and P-M could already reorder Loads pretty good. They could move one Load before other Loads, as well as before Stores which have no unknown addresses or addresses which do not reference the same address as the load. In contrast, the Athlon 64 can only move loads before independent ALU operations (ADD etc.). Loads cannot be moved ahead much at all to minimize the effect of a cache miss, and other loads cannot be used to keep the CPU busy if a load has to wait for a store to finish. This means that the Athlon 64 processor is severely limited when it comes to reorder code.

This is probably one of the most important reasons why the Athlon 64 does not outperform the P-M in gaming and integer workloads despite having a lower latency memory system and more integer execution sources. Integer workloads tend to jump around in memory, and have many unknown addresses which must be calculated first. It is less important for FP intensive loads, which is also one of the reasons why the Athlon 64 had no problem with Dothan in this kind of workload. FP workloads access the memory in a much more regular fashion.

Once Loads and Stores are in the queues of Load/Store units, the Athlon's L/S unit allows Loads to bypass Stores, except of course when the load would bypass a store to the same address. Unfortunately, by then the Loads are already out of the ICU and cannot be used to fill the holes that dependencies and cache misses make. You could say that the Athlon (64) has some Load/Store reordering but it's much later in the pipeline and is less flexible than the P6, P-M, and Core architectures.

87 Comments

View All Comments

JohanAnandtech - Monday, May 1, 2006 - link

Thanks.The current Core Papers are pretty poor IMHO.

Best sources of info:

- Jack Doweck

- IDF's presentations

- David Kanter's article at RWT (going to add that link in the references)

Orbs - Monday, May 1, 2006 - link

I never studied chip design but even with my limited assembly knowledge I was able to follow this article. Very technical, very informative, and helps explain some of the insane benchmark results from Conroe's preview earlier this year.It will be interesting to see how Conroe/Merom perform when finalized, how they compare to final AM2 CPUs (especially higher clocked AM2 CPUs) and what AMD counters with in '07.

JohanAnandtech - Monday, May 1, 2006 - link

"I never studied chip design but even with my limited assembly knowledge I was able to follow this article"Very happy to read that.

At this point there is not enough info on what AMD has in store, so I left that out. However, better load reordering (besides the announced extra FP power) seems a minimum for the next AMD micro-arch.

at80eighty - Monday, May 1, 2006 - link

Been a while since we've seen you around Johan - nice read btwAvalon - Monday, May 1, 2006 - link

Wow, very nice read. Thanks a ton!owned66 - Monday, February 25, 2013 - link

soo many AMD fan boys back thenhere is 2013 they dont exist anymore

lollichop - Sunday, February 26, 2017 - link

Fast forward 11 years. It's 2017. After a big decline, AMD is back in the game with Ryzen.