Intel Kaby Lake-G GPU Driver Updates Left In Limbo, Currently Unsupported

by Ryan Smith on June 8, 2020 12:00 PM EST

While the retail shelf life of Intel’s unusual Kaby Lake-G processor has pretty much passed at this point, it looks like it has become the gift that keeps on giving when it comes to confusion about how support for the combined Intel/AMD chip will work. First spotted by Tom’s Hardware, AMD’s latest Radeon driver installer doesn’t include support for the chip’s AMD “Vega M” GPU, and as a result there are currently no up-to-date drivers available for the platform. And while Tom’s Hardware did get a cryptic-but-promising response from AMD about future driver support, for the moment it’s not clear what’s going to happen or how long-term driver support for the processor will work.

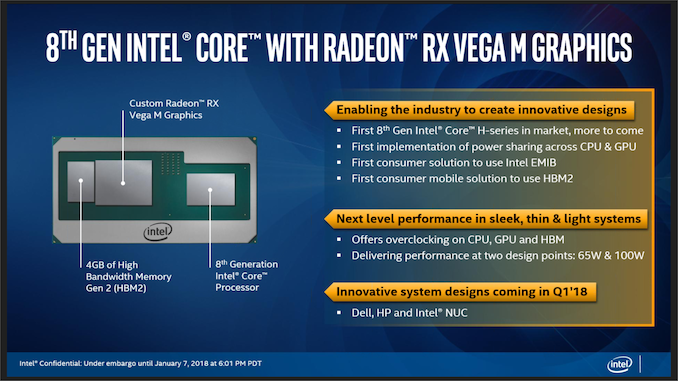

A one-off collaboration between Intel and AMD, Intel’s Kaby Lake-G processor combined a quad-core Intel Kaby Lake CPU with a discrete AMD Polaris-based GPU, all on a single package. With the AMD dGPU covering for Intel’s traditionally weak integrated graphics, Kaby Lake-G gave Intel an interesting chip that could deliver great compute performance and much stronger graphics performance as well.

However, since the GPU portion of Kaby Lake-G came from outside Intel, the chip has always existed in an odd place where it’s never been fully embraced by either manufacturer. Even as an Intel-sold and Intel-branded product, Kaby Lake-G’s Radeon roots were never really hidden, and indeed the chip’s GPU drivers have clearly been a derivative of AMD’s standard driver set since the very beginning. But this has also meant that Intel has been reliant on AMD to provide those drivers, and for reasons that are not entirely public or transparent, this hasn’t been handled well. After a very long break between GPU driver updates, Intel essentially gave up on putting any kind of façade on the source of their GPU drivers, and began directing users to install AMD’s Radeon drivers, which at the same time gained official support for the chip.

And that was the end of that. Or so we thought.

Instead, as spotted by the Tom’s Hardware crew, Kaby Lake-G support has once again gone missing from AMD’s drivers. As a result, it’s not possible to install current drivers for the hardware – and even finding drivers that can be installed is a bit of an easter egg hunt.

When they reached out to Intel about the matter – and specifically, about updated drivers for Hades Canyon, Intel’s Kaby Lake-G based NUC – Tom’s Hardware did get a promising, but nonetheless cryptic response from the chipmaker:

And for the moment, this is where things stand, with no official explanation as to what’s going on. Driver support for Kaby Lake-G hangs in limbo, as Intel and AMD seem to be unable to sort out responsibility for the chip.

Joint projects like these are some of the most difficult in the industry, as having multiple vendors involved in a single product means that there’s some degree of cooperation required. Which is easier said than done when it involves historic rivals like Intel and AMD. Still, I would have expected that driver support is something that would have been hammered out in a contract early on – such that AMD was committed to deliver and paid for the necessary 5 years of drivers – rather than the current situation of Intel and AMD seemingly dancing around the issue.

In the meantime, here’s to hoping that Kaby Lake-G’s driver situation gets a happier ending in due time.

Source: Tom's Hardware

46 Comments

Gigaplex - Monday, June 8, 2020 - link

Their console support shows they can be a reliable partner.

Deicidium369 - Monday, June 8, 2020 - link

Easy to get a contract no one else is bidding on...

Samus - Tuesday, June 9, 2020 - link

LOL, and how do YOU know other parties are not bidding on console design wins? Are you seriously implying nVidia wouldn't be interested in selling tens of millions of GPUs to Sony\Microsoft? Clearly they are interested in the industry: look at the Switch.

CiccioB - Tuesday, June 9, 2020 - link

Depends on the margin MS and Sony want to pay.

At the time when AMD won all console supply, Nvidia said that the margins were so low that they didn't want to invest so many engineers for that purpose.

Yet, as we have seen, console SoC supply is something that has a value beyond the simple money they make. AMD gained the support and optimization for its own architectures while Nvidia's more advanced features were left behind.

Santoval - Tuesday, June 9, 2020 - link

Sony and Microsoft have long switched to semi-custom APUs (no distinct GPU and CPU). Therefore, since Nvidia lack an x86-64 licence, the only way they could bid to make the APU for them is if it was ARM or RISC-V based. Neither of them appears to have any wish to switch to a new ISA, hence Nvidia cannot bid to make the APU for them. Nintendo is a different bird.

CiccioB - Tuesday, June 9, 2020 - link

They didn't switch for any reason but costs and the fact that AMD sold them for a penny.

Technically speaking, separate CPU and GPU are better but cost more.

There's no chance to match the low prices AMD made with their SoC with a discrete combination, even though the latter would be much faster (and easier to cool down).

AMD simply killed the competition by price. Something neither Intel nor Nvidia would like to fight against just for having their devices in the consoles for a penny each.

But as said, while for Intel it is not a problem (not a gain, not a loss), for Nvidia the problem is that they have lost the support and optimization for their own architecture.

I wish they would supply just one of the two, just to see their advanced features supported and see who is really slowing down the gaming evolution with antiquated and obsolete architectures that catch the competition 3 years later.

This would really create a competition between the two.

sing_electric - Tuesday, June 9, 2020 - link

Isn't that a bit chicken-and-egg, though? Imagine a world without AMD (or where AMD went out of business, or where AMD survived but didn't buy ATI, etc.) - it's not like Sony and MS would have just ¯\_(ヅ)_/¯ and stopped making consoles - they'd have chosen something else. Possibly an arch like the original Xbox (which is basically... a PC), possibly have made it worth Nvidia's while to soup up Tegra... custom ARM chip, possibly with a dGPU, or who knows what else.

The time between generations helps a lot in that regard - there's plenty of ARM chips out there that may not be as fast as a Zen 3 design, but would still give a pretty big performance boost over the 2013 Bulldozer-based chips in previous gen consoles.

lmcd - Tuesday, June 9, 2020 - link

Ironically Microsoft's ARM on Windows work could enable a future switch.

Samus - Tuesday, June 9, 2020 - link

As amazing as AMD's resiliency in the industry is, from engineering feats to design wins and platform support, their drivers (with a heavy emphasis on the ATi roots here) have always been a weak spot.

Yet part of me agrees with you. It's very, VERY strange for AMD to prioritize niche developments such as Mac GPU support and even Linux support over a promising partnership with Intel. And that appears to be exactly the case.

sing_electric - Tuesday, June 9, 2020 - link

I don't think EITHER AMD or Intel thought that Kaby Lake-G was going to be the start of anything long-term. By the time it came out, Intel had to have internally been planning what eventually became Xe, and AMD made no secret of the fact that they were gunning for Intel in as many x86 markets as possible.

What *will* be interesting is what becomes of Nvidia using Epyc CPUs in its Ampere-based DGX server. Nvidia seems to have little interest in making an ARM processor for those systems, and with Intel releasing Xe, that means one way or another, Nvidia will have to rely on a CPU vendor with a competing graphics product if it wants to stick with x86....