Intel to Create new 8th Generation CPUs with AMD Radeon Graphics with HBM2 using EMIB

by Ian Cutress on November 6, 2017 9:43 AM EST

Today we have an announcement out of left field. Intel has formally revealed it has been working on a new series of processors that combine its high-performance x86 cores with AMD Radeon Graphics into the same processor package using Intel’s own EMIB multi-die technology. If that wasn’t enough, Intel also announced that it is bundling the design with the latest high-bandwidth memory, HBM2.

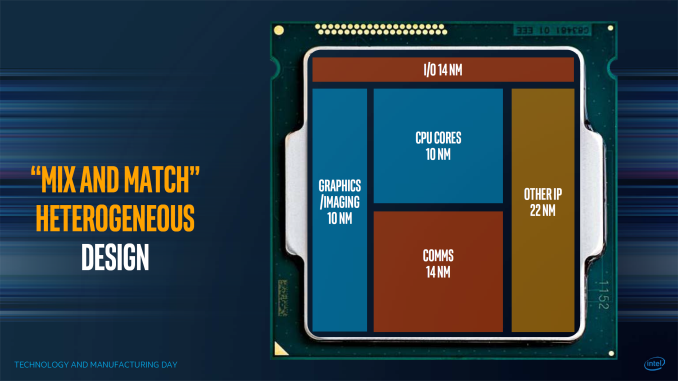

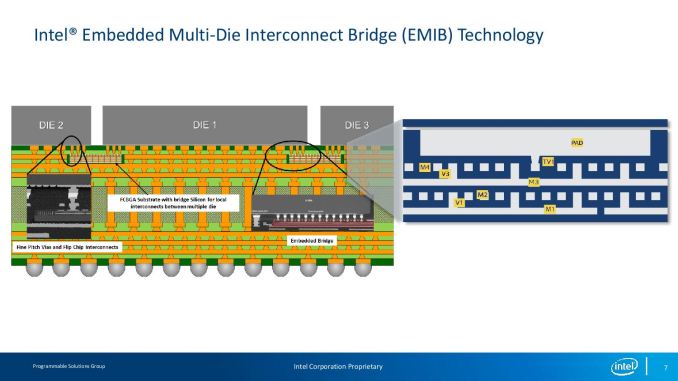

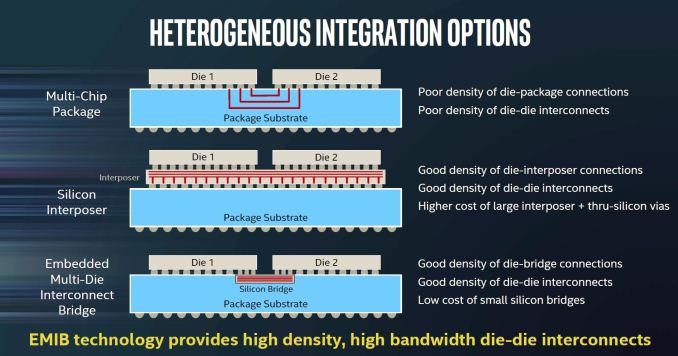

Intel announced its EMIB technology over the last twelve months, with the core theme being the ability to put multiple and different silicon dies onto the same package at a much higher bandwidth than a standard multi-chip package but at a much lower cost than using a silicon interposer. At Intel’s Manufacturing Day earlier this year, they even produced a slide (above) showcasing what might be possible: a processor package with the x86 cores made on one technology, the graphics made in another, perhaps different IO and memory or wireless technologies too. With EMIB, processor design can become a large game of Lego.

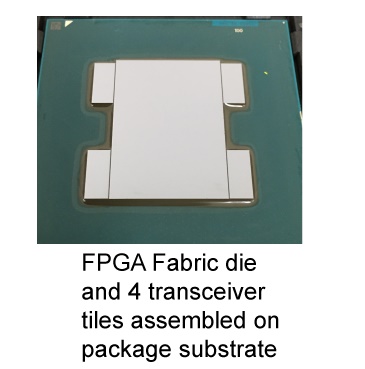

EMIB came to market with the latest Intel Altera FPGAs. By embedding the EMIB required silicon design into the main FPGA and each of the chipsets, the goal was to add multiple memory blocks as well as data transfer blocks in a mix and match scenario, allowing large customers to have the design tailored to what they require. The benefits of EMIB were clear, without the drawbacks of standard MCP design or the cost of interposers: it would also allow a design to go beyond the monolithic reticle limit of standard lithography processes. It was always expected that EMIB would have to find its way into the general processor market, as we start to see high-end server offerings approaching 900 mm² over multiple silicon dies in a single package.

Since the EMIB announcements, Intel’s Manufacturing Day, and Hot Chips, word has been circulating about how Intel is going to approach this from a consumer standpoint. As part of the requirements of Intel’s own integrated graphics solutions, a 2011 cross-licensing deal with NVIDIA was in place – this deal was set to expire on April 1st 2017, and no mention of extending that deal was ever made public. A couple of rumors floated around that Intel was set to make a deal with AMD instead, as despite their x86 rivalry they were a preferred partner in these matters. Numerous outlets with connections in both AMD and Intel had difficulty prising any information out. Historically, Intel refuses to comment on such matters in advance. Other potential leaks include published benchmarks over at SiSoft, although nothing has been made concrete until today.

Intel’s official statements on the announcement offer a few details worth diving into.

‘The new product, which will be part of our 8th Gen Intel Core family, brings together our high-performing Intel Core H-series processor, second generation High Bandwidth Memory (HBM2) and a custom-to-Intel third-party discrete graphics chip from AMD’s Radeon Technologies Group – all in a single processor package.’

Intel interestingly uses the singular word ‘product’, although this does not indicate whether it is a family or literally a single SKU in the works. Intel’s Core-H series processors are currently Kaby Lake based, running at 45W with Intel’s integrated GT2 graphics. It would be interesting to see if the graphics of the Core-H are stripped out as a new silicon design, if Intel is re-spinning the full Core-H silicon and just disabling the integrated graphics, or if the package is able to run both graphics segments independently (it is likely a new spin of silicon, if I were a betting man). The use of HBM2 is not surprising – Intel has successfully integrated HBM2 into its Altera EMIB-based products, so we would suspect that this is not going to be overly difficult.

The next bit is the interesting one: ‘custom-to-Intel … discrete graphics chip’ from AMD RTG. This means that none of AMD’s current product stack has silicon dedicated to EMIB, but AMD is going to leverage its semi-custom design to provide graphics chiplets for Intel to add to its silicon.

‘In close collaboration, we designed a new semi-custom graphics chip, which means this is also a great example of how we can compete and work together, ultimately delivering innovation that is good for consumers… Similarly, the power sharing framework is a new connection tailor-made by Intel among the processor, discrete graphics chip and dedicated graphics memory. We’ve added unique software drivers and interfaces to this semi-custom discrete GPU that coordinate information among all three elements of the platform.’

One of the questions about running multiple chips in a single package is how to manage all the bandwidth and power. AMD has recently solved that issue in its server processors and inside its APUs by using its Infinity Fabric, which, if I were to guess, would not be under the purview of this collaboration. Intel states that as part of the collaboration the chip shares a power framework, which will make for an interesting deep dive when we get information as to whether Intel is offering separate power rails for the CPU and GPU segments, using an integrated voltage regulator (like Broadwell did), or doing something similar to AMD by using a unified power rail sharing mechanism with digital LDOs, as was announced with Ryzen Mobile only a couple of weeks ago.

‘Look for more to come in the first quarter of 2018, including systems from major OEMs based on this exciting new technology.’

It looks like Intel is ready to make some announcements over the next few months on this project, and CES is just around the corner in January.

Taking a step back, though, we have to consider what this means and what market Intel is aiming for. AMD recently launched (with products coming soon) their Ryzen Mobile platform, designed with quad-core Zen and up to 10 CUs of Vega graphics. The announcements from Intel and AMD do not state what graphics core they are using (it could be one generation behind for competitive reasons?); however, they do state that the product uses Core-H series processors, which are typically in the 45W range. AMD currently hasn’t announced anything in that segment, having decided to focus Ryzen Mobile on the thin and ultralight notebook categories first. If AMD does bring Ryzen Mobile up to more powerful devices, then this new product will be in direct competition.

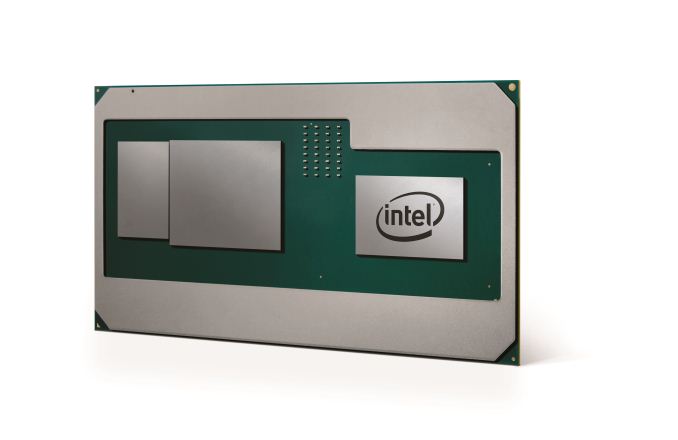

Looking at the image provided by Intel of the new product arrangement actually adds a new question or two to the list. Here we have an Intel chip on the right, the AMD custom graphics in the middle, and the HBM2 chip next to it. The Intel chip is a long way away from the AMD chip, which would suggest that these two are not connected via EMIB, if the mockup is accurate. The close proximity of the big chip in the middle to what looks like an HBM2 stack does suggest that those two are connected via EMIB, judging by how close the chips in the Altera products are.

EMIB is being used, but it does not look like it is being used for all the chips together. It’s worth noting that neither Intel nor AMD offered pre-briefings on this announcement, so there are a lot of unanswered questions hanging around as a result.

A final thought. Apple uses a lot of Intel's 45W processors for iMacs; offering AMD graphics (Apple's preferred pro-graphics partner) in the segment previously occupied by Intel's Crystalwell/eDRAM-based products might be the next step in that product cycle evolution.

Source: Intel

Source: AMD

More Commentary

After an hour or two to digest, we have some new thoughts.

Firstly, judging by the wording and Intel's launch video, it can basically be confirmed that EMIB is only being used between the GPU and the HBM2. The distance between the CPU and GPU is too far for EMIB, so that link is likely just PCIe through the package, which is a mature implementation. This configuration might also help with power dissipation, as the chips are further apart.

The agreement between AMD and Intel is that Intel is buying chips from AMD, and AMD is providing a driver support package like they do with consoles. There is no cross-licensing of IP going on: Intel likely provided AMD with the IP to make the EMIB chipset connections for the graphics but that IP is only valid in the designs that AMD is selling to Intel (it's a semi-custom foundry business, these agreements are part of the job).

With Intel buying chips from AMD, it stands to reason they could be buying more than one configuration, depending on how Intel wanted to arrange the product stack. Intel could pair a smaller 10 CU design with a dual core, and a bigger 20+ CU design with a quad-core mobile processor. A couple of benchmark sources seem to believe that there are at least two Polaris-like configurations, with up to 24 CUs in the high-end model. We will obviously wait before confirming this, as Polaris was not originally built for HBM2 memory. Normally HBM2 requires a GPU that is designed to be fed by HBM - data management is a key operation. However, if it works 'naturally', then it should be a case of attaching the HBM2 controller IP to the GPU and away you go.

In an ideal world, it would make sense for AMD to sell Intel their Polaris designs, and to keep their own products at least one generation ahead. With AMD's financial success of late, they could be in a position to do this, or Intel might be offering top dollar for the latest design. Neither company has commented on the arrangement yet, other than through their press releases.

In discussions with Peter Bright from Ars Technica, we have concluded that it is likely for the Intel CPU to still keep its own integrated graphics, and the system could act in a switching graphics arrangement. This would be easy if the CPU and GPU are connected via PCIe, as all the mechanisms are in place. With the Intel integrated GPU already there, video playback would be accelerated and kept on die, then sent to the display controller - it would allow the AMD GPU and the HBM2 to power down, saving energy. If the GPU and HBM2 were kept powered up, then we would see reductions in battery life for future devices.

It has been discussed whether this is a play just for Apple, given that Apple was behind Intel implementing eDRAM on its Crystalwell processors, and the latest generation of Crystalwell parts seems to be in Apple iMacs almost exclusively. That being said, Intel has stated that they have multiple partners interested in the design, and we should expect more information with devices in Q1. With Intel saying 'devices', it stands to reason that there are various OEMs waiting to work with the hardware.

As for the types of devices that we will be seeing, this one is a little confusing. Intel quoted Core-H series CPUs, which are 35W/45W parts. This also gels with comments saying that these new parts and Ryzen Mobile would not be in direct competition. However, in the demo video provided, it is clear that the potential for this design to go into thin and light notebooks like 2-in-1s and ultra-portables is on Intel's mind. Does that mean Intel is targeting 15W? Well, if Intel is buying multiple configurations of chips from AMD, then strapping a dual-core i5 to a 10 CU graphics part is more than plausible. If AMD is selling Intel the older Polaris design, then AMD has that advantage at least.

252 Comments

boozed - Monday, November 6, 2017 - link

The DEC technology that AMD used was the Alpha's DDR memory bus. HT was developed by a consortium of which AMD was a member.

IGTrading - Tuesday, November 7, 2017 - link

AMD founded the HT Consortium to gain industry support for HyperTransport, which it invented. AMD is also the President of the HT Consortium.

To say HyperTransport was developed by the HT Consortium and AMD was "a member" is plain wrong.

AMD invented, used successfully and popularized HyperTransport and THEN founded the HT Consortium.

Guys, Google is free to use. :)

#allweneedislove and to #readmore

Morawka - Tuesday, November 7, 2017 - link

AMD did not invent HBM nor HBM2. Intel actually owns and makes HBM2 through Micron. AMD buys it from Micron among others.

boozed - Monday, November 6, 2017 - link

Itanic was designed to compete outside the x86 arena and was a flawed concept from the start. It would have died just as quickly even in Opteron's absence, IMO.

ABR - Tuesday, November 7, 2017 - link

Opteron (and Intel's ensuing copy) defined the entire market direction that has reduced *all* non-x86 CPUs to a small niche.

ABR - Tuesday, November 7, 2017 - link

Server CPUs, that is. :-)

mapesdhs - Tuesday, November 7, 2017 - link

It died because it was incredibly late and the first version was poor, not because it was objectively a bad idea. Had it come out on time, with the performance of Itanium2, nobody would be complaining.

Cliff34 - Monday, November 6, 2017 - link

The proof of the pudding is in the eating. I ain't bashing AMD but until they come out with a solid CPU for the laptop market, it is hard to say they are competing in this arena at all. I do hope that their next generation of CPU and their platform for laptops can compete with Intel. But until there is proof, for laptops I am sticking with Intel.

blppt - Tuesday, November 7, 2017 - link

They already have launched Ryzen+Vega APU for notebooks. Availability seems scarce at the moment though.

ZeDestructor - Tuesday, November 7, 2017 - link

HyperTransport isn't all that special.. fast, low-latency, serial, cache-coherent interconnect, just like InfiniBand and any number of supercomputer links, which are even older. Hell, on its own, HyperTransport isn't very useful - it's only when you bring in the NUMA that it starts mattering. Intel didn't go NUMA till the Nehalem generation, at which point they brought out QPI, which looks the same because it solves the same problems as what HT solves.

In terms of ISA extensions (x86_64 is just an ISA extension on top of x86), Intel has been by far the most prolific, with MMX, SSE1-4, AVX, FMA, AES-NI, TSX-NI and so on. Meanwhile AMD has 3DNow! (which nobody really used since it was basically MMX+), SSE4A (which nobody really uses, to the point where AMD are deprecating it), AMD-V/IOMMU (same thing as VT-x and VT-d respectively), SVM (super new, so no wide usage yet)

Infinity Fabric isn't all that special either - cache coherent interconnect on PCIe.. I wonder where I saw that first.. oh in CAPI. Intel went the UPI route instead, which is somewhat less flexible (can't remap UPI links to PCIe), but should work just as well. Internal to the CPU, Intel uses router-based meshes rather than rings, which while not as good as rings (that AMD uses) currently, should get better as core counts go ever higher.

Intel and Micron have been working on HMC for about as long as AMD and SK Hynix have been working on HBM, and have shipped at around the same times. Alongside that they've also been working on Optane (which I suspect will be much better in NVDIMM form than NVMe) and EMIB.

Mantle is mostly DICE's work, not AMD's, and as awesomegamer919 pointed out, is now Vulkan. DX12 and Metal are completely independent projects. I'll give AMD credit for supporting DICE and advertising the hell out of it though, cause that kicked MS into building DX12.

TSV is most certainly not an AMD invention - that honour goes to some madmen in the 60s: "The first TSV was patented by William Shockley in 1962, although most people in the electronics industry consider Merlin Smith and Emanuel Stern of IBM the inventors of TSV, based on their patent “Methods of Making Thru-Connections in Semiconductor Wafers” filed on December 28, 1964 and granted on September 26, 1967." IBM strikes yet again as being a leader in IC design.

Intel invented High-k Metal gate tech, and later on were the first to ship FinFETs. And SOI was more of an IBM thing, which AMD made good use of over the years.

AMD did not produce the first CPUs with copper interconnects. IBM and Motorola did in 1997.

AMD did not invent iGPUs - that honour goes to consoles I believe. Simple example off the top of my head is the PS2's Emotion engine after a die shrink from two chips to a single unified chip. For a more modern example, you have the OMAP2420 (used in the Nokia N95, for example) which has a more "integrated" integrated GPU. AMD only wins the x86+iGPU award, and that's pretty shitty as awards go.

So where did Intel innovate you ask? Well, everywhere really, and more often than not driving x86 forward more so than AMD has. The only really, really major technical wins AMD has are x86_64 and integrating the memory controller. Big wins, certainly, but nothing that Intel couldn't have done on their own.

Doesn't mean AMD can't compete, or even beat Intel, but overall, Intel has been the leader more often than not. That's just the facts, unfortunately.

On the subject of Itanium, you should really go talk to anyone who's worked with Itanium at a really low level (like OS or compiler development). Itanium is an AMAZING microarchitecture, let down completely by x86 lock-in, a crap initial release, crap x86 emulation on the first gen (which I have been told was a big part of why the initial release was crap, and later ditched completely), and being basically HP-only. These days, you can see many of the lessons learnt with Itanium in the core design of the Sandy Bridge lineage, in particular how they can achieve 4 FLOPs/cycle, and the usefulness of HyperThreading.

PS: you should really have checked that AMD actually invented/popularised most of the things you give AMD credit for, since x86 isn't the only CPU microarchitecture out there.