NVIDIA’s GRID Game Streaming Service Rolls Out 1080p60 Support

by Ryan Smith on May 12, 2015 5:50 PM EST

Posted in: GPUs, Shield, NVIDIA, GRID, Cloud Gaming

Word comes from NVIDIA this afternoon that they are rolling out a beta update to their GRID game streaming service. Starting today, the service is adding 1080p60 streaming to its existing 720p60 streaming option, with the option initially going out to members of the SHIELD HUB beta group.

Today’s announcement from NVIDIA comes as the company is ramping up for the launch of the SHIELD Android TV and its accompanying commercial GRID service. The new SHIELD console is scheduled to ship this month, while the commercialization of the GRID service is expected to take place in June, with the current free GRID service for existing SHIELD portable/tablet users listed as running through June 30th. Given NVIDIA’s ambitions to begin charging for the service, it was only a matter of time until the company began offering 1080p streaming, especially as the SHIELD Android TV will be hooked up to much larger screens where the limits of 720p would be more easily noticed.

In any case, from a technical perspective NVIDIA has long had the tools necessary to support 1080p streaming – NVIDIA’s video cards already support 1080p60 streaming to SHIELD devices via GameStream – so the big news here is that NVIDIA has finally flipped the switch with their servers and clients. Though given that 1080p has 2.25x as many pixels as 720p, I’m curious whether part of this process has involved NVIDIA adding some faster GRID K520 cards (GK104) to their server clusters, as the lower-end GRID K340 cards (GK107) don’t offer quite the throughput or VRAM one traditionally needs for 1080p at 60fps.
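As a quick sanity check on the 2.25x figure, the arithmetic can be sketched in a few lines of Python. The resolution table and function names here are purely illustrative and do not come from any NVIDIA tool; 720p and 1080p are assumed to mean the standard 1280x720 and 1920x1080 frames.

```python
# Illustrative only: frame sizes are the standard 16:9 definitions,
# assumed here rather than taken from NVIDIA's announcement.
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
}


def pixels_per_frame(name: str) -> int:
    """Return the number of pixels in a single frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(high: str, low: str) -> float:
    """Return how many times more pixels `high` has per frame than `low`."""
    return pixels_per_frame(high) / pixels_per_frame(low)


def pixels_per_second(name: str, fps: int = 60) -> int:
    """Return the number of pixels rendered and encoded per second at `fps`."""
    return pixels_per_frame(name) * fps


if __name__ == "__main__":
    print(pixel_ratio("1080p", "720p"))   # 2.25
    print(pixels_per_second("1080p"))     # 124416000
```

At 60fps that is roughly 124 million pixels per second to render and encode for every 1080p stream, versus about 55 million for 720p.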

But the truly difficult part of this rollout is on the bandwidth side. With SHIELD 720p streaming already requiring 5-10Mbps of bandwidth and NVIDIA opting for quality over efficiency on the 1080p service, the client bandwidth requirements for the 1080p service are enormous. 1080p GRID will require a 30Mbps connection, with NVIDIA recommending users have a 50Mbps connection to keep other network devices from compromising the game stream. To put this in perspective, no video streaming service hits 30Mbps, and in fact Blu-ray itself tops out at 48Mbps for audio + video. NVIDIA in turn needs to run at a fairly high bitrate to make up for the fact that they have to do all of this encoding in real-time with low latency (as opposed to highly optimized offline encoding), hence the significant bandwidth requirement. Meanwhile 50Mbps+ service in North America is still fairly rare – these requirements all but limit it to cable and fiber customers – so at least for now only a limited number of people will have the means to take advantage of the higher resolution.
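For a rough sense of scale, the bandwidth figures above can be turned into per-frame and per-hour numbers. This is only a back-of-the-envelope sketch: it assumes the entire stated connection requirement is consumed by the video stream (NVIDIA has not published the actual encode bitrate), that 1Mbps means 10^6 bits per second, and that 720p runs at the 10Mbps upper figure. The function names are hypothetical.

```python
# Back-of-the-envelope sketch. Assumption: the full connection requirement
# is treated as video bitrate, ignoring audio and protocol overhead.
BITS_PER_MEGABIT = 1_000_000


def kilobytes_per_frame(mbps: float, fps: int = 60) -> float:
    """Average encoded size of one frame, in decimal kilobytes."""
    return mbps * BITS_PER_MEGABIT / fps / 8 / 1000


def gigabytes_per_hour(mbps: float) -> float:
    """Data transferred in one hour of streaming, in decimal gigabytes."""
    return mbps * BITS_PER_MEGABIT * 3600 / 8 / 1e9


def bits_per_pixel(mbps: float, width: int, height: int, fps: int = 60) -> float:
    """Average number of encoded bits available per displayed pixel."""
    return mbps * BITS_PER_MEGABIT / (width * height * fps)


if __name__ == "__main__":
    print(kilobytes_per_frame(30))   # 62.5
    print(gigabytes_per_hour(30))    # 13.5
    print(round(bits_per_pixel(30, 1920, 1080), 3))
    print(round(bits_per_pixel(10, 1280, 720), 3))
```

Under these assumptions the 1080p stream works out to roughly 0.24 bits per pixel against roughly 0.18 for 720p at 10Mbps, which is consistent with NVIDIA favoring quality over efficiency, and an hour of 1080p play would move about 13.5GB.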

| NVIDIA GRID System Requirements | 720p60 | 1080p60 |
|---|---|---|
| Minimum Bandwidth | 10Mbps | 30Mbps |
| Recommended Bandwidth | N/A | 50Mbps |
| Device | Any SHIELD, Native Or Console Mode | Any SHIELD, Console Mode Only (no 1080p60 to Tablet's screen) |

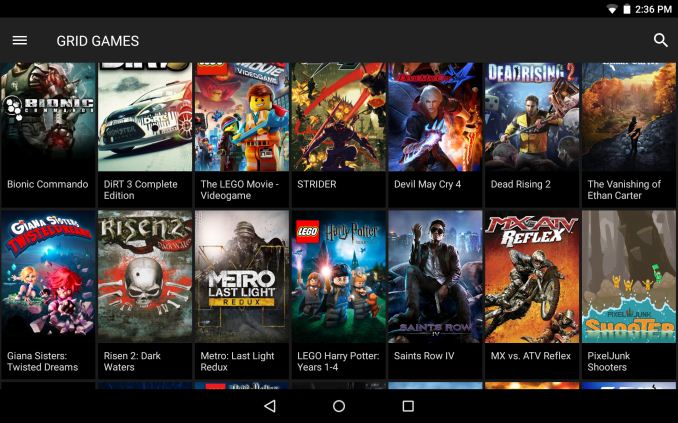

As for the games that support 1080p streaming, most, but not all, GRID games support it at this time. NVIDIA’s announcement says that 35 games support 1080p, with this being out of a library of more than 50 games. Meanwhile I’m curious just what kind of graphics settings NVIDIA is using for some of these games. With NVIDIA’s top GRID card being the equivalent of an underclocked GTX 680, older games shouldn’t be an issue, but more cutting-edge games almost certainly require tradeoffs to maintain framerates near 60fps. So I don’t imagine NVIDIA is able to run every last game with all of their settings turned up to maximum.

Finally, NVIDIA’s press release also notes that the company has brought additional datacenters online, again presumably in anticipation of the commercial service launch. A Southwest US datacenter is now available, and a datacenter in Central Europe is said to be available later this month. This brings NVIDIA’s total datacenter count up to six: USA Northwest, USA Southwest, USA East Coast, Northern Europe, Central Europe, and Asia Pacific.

Source: NVIDIA

61 Comments


yannigr2 - Wednesday, May 13, 2015 - link

You did NOT get it straight. Let me enlighten you. We guys have Nvidia GPUs AND AMD GPUs. On the box of our Nvidia GPUs it said "PhysX support, CUDA support". Nvidia is DENYING a feature that it is selling on the box because we made a huge mistake. We put the Nvidia GPU as secondary. In all of Nvidia's arrogance, having an AMD GPU as primary and an Nvidia GPU as secondary is a big insult. So as Nvidia customers we have to be punished by removing support for PhysX and CUDA.

In case you start talking about compatibility and stability problems, let me enlighten you some more. Nvidia made a mistake in the past and the 258 beta driver went out to the public WITHOUT a lock. So they add the lock later, not while building the driver. A couple of patches out there were giving the option to use a GeForce card as a PhysX card while having an AMD GPU as primary. Never got a BSOD with that patch in Batman, Alice, Borderlands, or other games. Even if there were problems, Nvidia could offer that option with a big, huge pop-up window in the face of the user saying that they cannot guarantee performance or stability with AMD cards. They don't. They LOCK IT away from their OWN customers, even while advertising it as a feature on the box.

One last question. What part of the "USB monitor driver" didn't you understand?

ravyne - Wednesday, May 13, 2015 - link

What? "Cuda Cores" is just marketing speak for their Cuda-capable graphics micro-architectures, of which they have several -- Cuda isn't a hardware instruction set, it's a high-level language. Cuda source code could be compiled for AMD's GPU microarchitectures just as easily, or even your CPU (it would just run a lot more slowly). Remember, nVidia didn't invent PhysX either -- that started off as a CPU library and PhysX's own physics accelerator cards.

Einy0 - Wednesday, May 13, 2015 - link

That's not what they are saying at all. What they are saying is that nVidia disables CUDA and PhysX whenever there is another video driver functioning in the system. Theoretically you could get a Radeon 290X for the video chores and dedicate a lower end nVidia based card to processing PhysX. You can do this if you have two nVidia cards but not if you mix flavors. If you remember correctly PhysX existed way before CUDA. If nVidia wanted, they could make it an open standard and allow AMD, Intel, etc. to create their own processing engine for PhysX or even CUDA for that matter. They are using shader engines to do floating point calculations for things other than pixels, vertexes or geometry. Personally I got an nVidia card because my last card was AMD/ATI. I try to rotate brands every other generation or so if the value is similar. I personally haven't had any driver related issues with either vendor in the past 5+ years.

rtho782 - Wednesday, May 13, 2015 - link

PhysX and CUDA work for me with a 2nd monitor on my IGP?

yannigr2 - Wednesday, May 13, 2015 - link

If the IGP is not powering the primary screen I THINK yes. The primary monitor would have to be on the Nvidia card so your IGP will be used only to show a picture on the secondary monitor, nothing else.

extide - Wednesday, May 13, 2015 - link

They make an exception for IGPs because otherwise Optimus wouldn't work at all.

chizow - Wednesday, May 13, 2015 - link

Yep, Nvidia has said all along they don't want to support this, ultimately PhysX is their product and part of the Nvidia brand, so problems will reflect poorly upon them (it's funny because obviously AMD does not feel this way re: FreeSync). This may change in the future with DX12 as Microsoft claims they are going to take on this burden within the API to address multiple vendors' hardware, but I guarantee you, first sign of trouble and Microsoft is going to run for the hills and leave the end-users to fend for themselves.

Einy0 - Wednesday, May 13, 2015 - link

No, MS will just take a year to patch the issue which will cause another issue that will later need to be patched and so on and so forth. If you had the displeasure of using DirectX in the early days you know exactly what I'm talking about. OpenGL was king for a long time for a reason...

chizow - Thursday, May 14, 2015 - link

Uh, OpenGL was king because it was fast, and you had brilliant coders like Carmack and Sweeney pushing its limits at breakneck speed. And sure DX was a mess to start, but ultimately, it was the driving force that galvanized and unified the PC to create the strong platform it is today.

chizow - Wednesday, May 13, 2015 - link

@yannigr2 - I know you're a proven AMD fanboy, but set that fact aside for a moment to see what you've said is not uniformly true. Nvidia dGPU works just fine with Intel IGP, for both CUDA and PhysX, so it is not a case of Nvidia disabling it for competitor hardware. It is simply a choice of what they choose to support, as they've said all along. Nvidia supports Optimus as their IGP and dGPU solution, so those configurations work just fine.