Micron M500DC (480GB & 800GB) Review

by Kristian Vättö on April 22, 2014 2:35 PM EST

Endurance Ratings: How They Are Calculated

One of the questions I quite often face is about the manufacturers' endurance ratings. Go back two or three years and nobody had any endurance limits in their client SSDs but every SSD released in the past year or so has an endurance limitation associated with it. Why did that happen? Let's open up the situation a bit.

A few years ago, many enterprises would just go and buy regular consumer SSDs and use them in their servers. Generally there is nothing wrong with that because there are scenarios where enterprises can get by with client-grade hardware, but the problem was that a share of the enterprises knew that the drives weren't durable enough for their needs. However, they also knew that if they wore out the drive before the warranty ran out, the manufacturer would have to replace it.

Obviously that wasn't very good business for the manufacturers because for one drive sold, more than one had to be given away for free. At the same time, fewer customers were buying the more expensive, high-profit enterprise drives. To fix this without disrupting the client market by either increasing prices or reducing quality, the manufacturers decided to start including a maximum endurance rating, which would invalidate the warranty if exceeded.

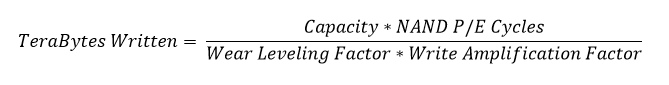

The equation for endurance is rather simple. All you need to take into account is the capacity of the drive, the P/E cycles of the NAND and the wear leveling and write amplification factors. When all that is put into an equation, it looks like this:
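
The equation itself is an image in the original article and is not reproduced in the text. A reconstruction from the four inputs named above, consistent with the 0.72x and 1.24x figures worked out later in this section, would be the following (the symbols $C$, $N_{PE}$, WLF and WAF are shorthand chosen here, not taken from the image):

$$
\mathrm{TBW} = \frac{C \times N_{PE}}{\mathrm{WLF} \times \mathrm{WAF}}
$$

Here $C$ is the drive capacity in terabytes, $N_{PE}$ is the rated number of P/E cycles of the NAND, and WLF and WAF are the wear leveling and write amplification factors.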

Note that the correct term for TBW is TeraBytes Written, not TotalBytes Written, although both are fairly widely used. The hardest part in calculating the TBW is figuring out the wear leveling and write amplification factors because these are workload dependent. Hence manufacturers often use a worst-case 4KB random write scenario to come up with the TBW figure, as this ensures that the end user cannot have a more demanding workload with higher write amplification.

For the uninitiated, the wear leveling factor (WLF) in this context means the maximum stress that the wear leveling method would put onto the most heavily cycled block compared to the average number of cycles. A factor of two would mean that the most heavily cycled block would have twice the number of cycles compared to the average. Write amplification factor (WAF), on the other hand, refers to the ratio of NAND writes to host writes. A factor of two would in this case mean that for every megabyte that the host writes, two megabytes are written to the NAND. These two factors go hand in hand in the sense that a small WLF results in a higher WAF because the drive will do more internal reorganization operations to cycle all blocks equally, which consumes NAND writes.

The interesting part about TBWs is that they actually give us a way to estimate the combined wear leveling and write amplification factor of the drive. In the case of the 120GB M500DC, that would be a surprising 0.72x. Obviously you can't go lower than 1x without using some form of compression, but the 120GB M500DC actually has 192GiB of NAND onboard, which extends the endurance. If we used that figure to calculate the combined WLF and WAF, it would be 1.24x, which is much more reasonable. For some reason the JEDEC spec defines the capacity as the usable capacity even for endurance calculations, but in the end it doesn't matter which figure you change as they are all related to each other (e.g. with 120GB used as the capacity, the P/E cycles could be higher than 3,000 because the over-provisioned NAND adds cycles).
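
The arithmetic behind those two factors can be sketched in a few lines of Python. This is only an illustration: it assumes the endurance equation has the form TBW = capacity x P/E cycles / (WLF x WAF), and the 500 TB rating is not stated in this section but is the value implied by the 0.72x figure at 120GB and 3,000 P/E cycles. The function and constant names are made up for the example.

```python
GB = 10**9    # decimal gigabyte, as used for the advertised capacity
GIB = 2**30   # binary gibibyte, as used for the raw NAND capacity


def combined_factor(capacity_bytes, pe_cycles, tbw_bytes):
    """Combined WLF x WAF implied by a TBW rating.

    Rearranged from the assumed form TBW = capacity * P/E / (WLF * WAF).
    """
    return capacity_bytes * pe_cycles / tbw_bytes


PE_CYCLES = 3000            # P/E cycle figure quoted in the text
RATED_TBW = 500 * 10**12    # assumption: 500 TB, inferred from the 0.72x figure

# Usable capacity (the JEDEC convention) versus raw NAND onboard
usable_factor = combined_factor(120 * GB, PE_CYCLES, RATED_TBW)
raw_factor = combined_factor(192 * GIB, PE_CYCLES, RATED_TBW)

print(f"usable capacity: {usable_factor:.2f}x")
print(f"raw NAND:        {raw_factor:.2f}x")
```

Swapping the capacity input is the only change between the two results, which is why it does not matter much which term absorbs the over-provisioned NAND.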

Ultimately none of the manufacturers are willing to disclose the exact details of how they calculate their endurance ratings, but at a high level this is how it's done according to JEDEC's standards. Furthermore, I wouldn't rule out the possibility that some OEMs artificially lower the ratings for their consumer drives just to make sure they are not used by enterprises. In the end, there isn't really a way for us to find out whether the TBW is accurate or not since the efficiency factors are not easily measurable by third parties like us.

37 Comments

abufrejoval - Monday, April 28, 2014

I see an opportunity here to clarify something that I've always wondered about: How exactly does this long-term retention work for FLASH?

In the old days, when you had an SSD, you weren't very likely to have it lying around after you paid an arm and a leg for it.

These days, however, storing your most valuable data on an SSD almost seems logical, because one of my nightmares is dropping that very last backup magnetic drive, just when I'm trying to insert it after a complete loss of my primary active copy: SSD just seems so much more reliable!

And then there comes this retention figure...

So what happens when I re-insert an SSD that has been lying around for, say, 9 months with those most valuable baby pics of my grown-up children?

Does just powering it up mean all those flash cells with logical 1's in them will magically draw in charge like some sort of electron sponge?

Or will the drive have to go through a complete read-check/overwrite cycle depending on how near blocks have come to the electron depletion limit?

How would it know the time delta? How would I know it's finished the refresh and it's safe to put it away for another 9 months?

I have some older FusionIO 320GB MLC drives in the cupboard, that haven't been powered up for more than a year: Can I expect them to look blank?

P.S. Yes, you need an edit button and a resizable box for text entry!

Kristian Vättö - Tuesday, April 29, 2014

The way NAND flash works is that electrons are injected into what is called a floating gate, which is insulated from the other parts of the transistor. As it is insulated, the electrons can't escape the floating gate and thus SSDs are able to hold the data. However, as the SSD is written to, the insulating layer will wear out, which decreases its ability to insulate the floating gate (i.e. make sure the electrons don't escape). That causes the decrease in data retention time.

Figuring out the exact data retention time isn't really possible. At the maximum endurance, it should be 1 year for client drives and 3 months for enterprise drives, but anything before and after is subject to several variables that the end user doesn't have access to.

Solid State Brain - Tuesday, April 29, 2014

Data retention depends mainly on NAND wear. It's the highest (several years - I've read 10+ years even for TLC memory though) at 0 P/E cycles and decreases with usage. By JEDEC specifications, consumer SSDs are to be considered at "end of life" when the minimum retention time drops below 1 year, and that's what you should expect when reaching the P/E "limit" (which is not actually a hard limit, just a threshold based on those JEDEC-spec requirements). For enterprise drives it's 3 months. Storage temperature will also affect retention. If you store your drives in a cool place when unpowered, their retention time will be longer. By JEDEC specifications the 1 year time for consumer drives is at 30C, while the 3 months time for enterprise ones is at 40C. Tidbit: manufacturers bake NAND memory in low temperature ovens to simulate high wear usage scenarios during tests.

To be refreshed, data has to be reprogrammed again. Just powering up an SSD is not going to reset the retention time for the existing data, it's only going to make it temporarily slow down.

When powered, the SSD's internal controller keeps track of when writes occurred and reprograms old blocks as needed to make sure that data retention is maintained and consistent across all data. This is part of the wear leveling process, which usually is pretty efficient in keeping block usage consistent. However, I speculate this can happen only to a certain extent/rate. A worn drive left unpowered for a long time should preferably have its data dumped somewhere and then cloned back, to be sure that all NAND blocks have been refreshed and that their retention time has been reset to what their wear status allows.

hojnikb - Wednesday, April 23, 2014

TLC is far from crap (well, a quality one, that is). And no, TLC does not have issues holding a "charge". JEDEC states a minimum of 1 year of data retention, so your statement is complete bullshit.

apudapus - Wednesday, April 23, 2014

TLC does have issues but the issues can be mitigated. A drive made up of TLC NAND requires much stronger ECC compared to MLC and SLC.

Notmyusualid - Tuesday, April 22, 2014

My SLC X25-E 64GB is still chugging along, with not so much as a hiccup.

It n e v e r slows down, it 'feels' fast constantly, no matter what is going on.

In about that time I've had one failed OCZ 128GB disk (early Indilinx I think), one failed Kingston V100, one failed Corsair 100GB too (model forgotten), a 160GB X25-M that arrived DOA (but its replacement is still going strong in a workstation), and late last year a failed Patriot Wildfire 240GB.

The two 840 Evo 250GB disks I have (TLC) are absolute garbage. So bad I had to remove them from the RAID0, and run them individually. When you want to over-write all the free space - you'd better have some time on your hands.

SLC for the win.

Solid State Brain - Wednesday, April 23, 2014

The X25-E 64 GB actually has 80 GiB of NAND memory on its PCB. Since only 64 GB (-> 59.6 GiB) of that is available to the user, about 25% of it is overprovisioning area. The drive is obviously going to excel in performance consistency (at least for its time).

On the other hand, the 840 EVO 250 GB has less OP than the previous 840 models with TLC memory: of the 23.17 GiB of unavailable space (256 GiB of physically installed NAND minus 250 GB -> 232.83 GiB of user space) that was previously used entirely as overprovisioning area, 9 GiB has to be subtracted for the TurboWrite feature. This means that in TRIM-less or write-intensive environments with little or no free space they're not going to be that great in performance consistency.
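
For anyone who wants to check the spare-area numbers, here is a minimal Python sketch of the GB-to-GiB arithmetic used in this comment. The helper names are invented for the example, and the 9 GiB TurboWrite reservation is simply the figure quoted above, not a measured value.

```python
GB = 10**9    # decimal gigabyte (advertised user capacity)
GIB = 2**30   # binary gibibyte (physical NAND capacity)


def gb_to_gib(gigabytes):
    """Convert decimal gigabytes to binary gibibytes."""
    return gigabytes * GB / GIB


def spare_gib(raw_gib, user_gb, reserved_gib=0.0):
    """NAND left for overprovisioning after user space and any reserved area."""
    return raw_gib - gb_to_gib(user_gb) - reserved_gib


# Intel X25-E 64 GB: 80 GiB of NAND, 64 GB exposed to the user
x25e_spare = spare_gib(80, 64)
x25e_share = x25e_spare / 80          # share of raw NAND that is spare

# Samsung 840 EVO 250 GB: 256 GiB of NAND, 9 GiB set aside for TurboWrite
evo_unavailable = spare_gib(256, 250)
evo_spare = spare_gib(256, 250, reserved_gib=9)
evo_share = evo_spare / 256

print(f"X25-E:   {x25e_spare:.2f} GiB spare ({x25e_share:.1%} of NAND)")
print(f"840 EVO: {evo_unavailable:.2f} GiB unavailable, "
      f"{evo_spare:.2f} GiB spare after TurboWrite ({evo_share:.1%} of NAND)")
```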

If you were going to use the Samsung 840 EVOs in a RAID-0 configuration, you really should at the very least have increased the OP area by setting up trimmed, unallocated space. So, it's not really that they are "absolute garbage" (as they obviously aren't) and it's really inherently due to the TLC memory. It's your fault in that you most likely didn't take the necessary steps to use them properly with your RAID configuration.

Solid State Brain - Wednesday, April 23, 2014

I meant: *...and it's NOT really inherently due to the...

TheWrongChristian - Friday, April 25, 2014

> When you want to over-write all the free space - you'd better have some time on your hands.

Why would you overwrite all the free space? Can't you TRIM the drives?

And why run them in RAID0? Can't you use them as JBOD, and combine volumes?

SLC versus TLC results in about a factor of 4 cheaper just on a die area basis. That's why drives are MLC and TLC based, the extra storage being used to add extra spare area to make the drive more economical over the drive's useful life. Your SLC X25-E, on the other hand, will probably never ever reach its P/E limit before you discard it for a more useful, faster, bigger replacement drive. We'll probably have practical memristor-based drives before the X25-E uses all its P/E cycles.

zodiacsoulmate - Tuesday, April 22, 2014

It makes me think about my OCZ Vector 256GB: it breaks every time there is a power loss, even a hard reset...

Quite a lot of people report this problem online, and the Vector 256GB became available only as refurbished before any other Vector drive...

I RMAed two of them, and OCZ replaced mine with a Vector 150, which seems fine now. Maybe we should add a power-loss test for SSDs...