Some Quick Gaming Numbers at 4K, Max Settings

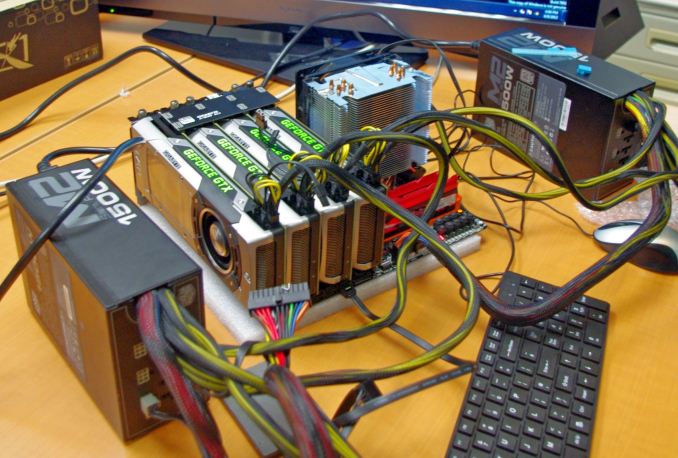

by Ian Cutress on July 1, 2013 8:00 AM EST

Part of my extra-curricular testing after Computex this year put a Sharp 4K30 monitor in my hands for three days, along with a variety of AMD and NVIDIA GPUs on an overclocked Haswell system. With my test-bed SSD at hand and limited time, I was able to run my normal motherboard gaming benchmark suite at this crazy resolution (3840x2160) for several GPU combinations. Many thanks to GIGABYTE for this brief but eye-opening opportunity.

The test setup is as follows:

Intel Core i7-4770K @ 4.2 GHz, High Performance Mode

Corsair Vengeance Pro 2x8GB DDR3-2800 11-14-14

GIGABYTE Z87X-OC Force (PLX 8747 enabled)

2x GIGABYTE 1200W PSU

Windows 7 64-bit SP1

Drivers: GeForce 320.18 WHQL / Catalyst 13.6 Beta

GPUs:

NVIDIA:

| GPU | Model | Cores / SPs | Core MHz | Memory Size | Memory MHz | Memory Bus |
|---|---|---|---|---|---|---|
| GTX Titan | GV-NTITAN-6GD-B | 2688 | 837 | 6 GB | 1500 | 384-bit |
| GTX 690 | GV-N690D5-4GD-B | 2x1536 | 915 | 2 x 2 GB | 1500 | 2x256-bit |
| GTX 680 | GV-N680D5-2GD-B | 1536 | 1006 | 2 GB | 1500 | 256-bit |
| GTX 660 Ti | GV-N66TOC-2GD | 1344 | 1032 | 2 GB | 1500 | 192-bit |

AMD:

| GPU | Model | Cores / SPs | Core MHz | Memory Size | Memory MHz | Memory Bus |
|---|---|---|---|---|---|---|
| HD 7990 | GV-R799D5-6GD-B | 2x2048 | 950 | 2 x 3 GB | 1500 | 2x384-bit |
| HD 7950 | GV-R795WF3-3GD | 1792 | 900 | 3 GB | 1250 | 384-bit |
| HD 7790 | GV-R779OC-2GD | 896 | 1075 | 2 GB | 1500 | 128-bit |

For some of these GPUs we had several of the same model at hand to test. As a result, we tested from one GTX Titan to four, 1x GTX 690, 1x and 2x GTX 680, 1x 660 Ti, 1x 7990, 1x and 3x 7950, and 1x 7790. There were several more groups of GPUs available, but alas we did not have time. Also, for the time being we are not doing any deeper analysis of the multi-GPU AMD setups, which we know can have issues. As I have not yet got to grips with FCAT personally, I thought it would be more beneficial to run numbers than to spend the time learning new testing procedures.

Games:

As I only had my motherboard gaming tests available and little time to download fresh ones (you would be surprised at how slow in general Taiwan internet can be, especially during working hours), we have a standard array of Metro 2033, Dirt 3 and Sleeping Dogs. Each one was run at 3840x2160 and maximum settings in our standard Gaming CPU procedures (maximum settings as the benchmark GUI allows).
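For a sense of scale, the arithmetic behind that resolution can be sketched as below. This is only an illustration, not part of the benchmark suite: the function names are hypothetical, and the 1920x1080 comparison point is an assumption added here for reference.

```python
# Rough pixel arithmetic for the test resolution (illustrative only).

def pixel_count(width, height):
    """Number of pixels in one frame at the given resolution."""
    return width * height


def pixel_ratio(res_a, res_b):
    """How many times more pixels res_a has than res_b."""
    return pixel_count(*res_a) / pixel_count(*res_b)


UHD_4K = (3840, 2160)   # resolution used for every test in this piece
FULL_HD = (1920, 1080)  # assumed comparison point, not tested here

if __name__ == "__main__":
    print(pixel_count(*UHD_4K))          # pixels per frame at 4K
    print(pixel_ratio(UHD_4K, FULL_HD))  # multiple of a 1080p frame
```

Each 4K frame is 8,294,400 pixels, four times the pixel count of a 1080p frame, which goes some way to explaining the multi-GPU requirements in the results.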

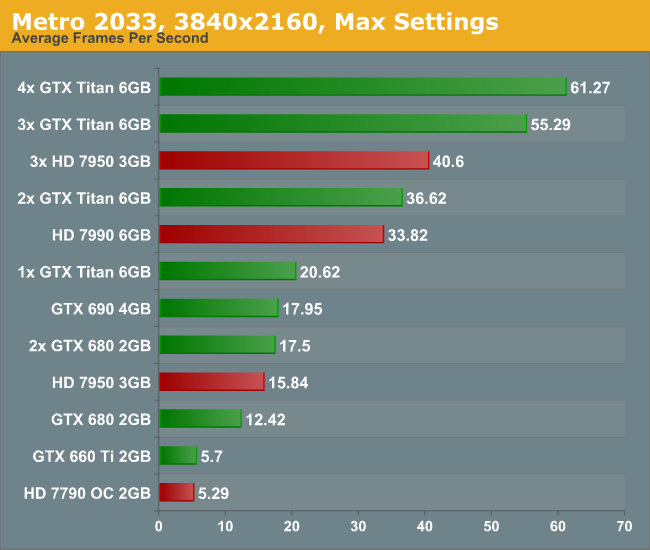

Metro 2033, Max Settings, 3840x2160:

Straight off the bat is a bit of a shocker – to get 60 FPS we need FOUR Titans. Three 7950s performed at 40 FPS, though there was plenty of microstutter visible during the run. For both the low end cards, the 7790 and 660 Ti, the full quality textures did not seem to load properly.

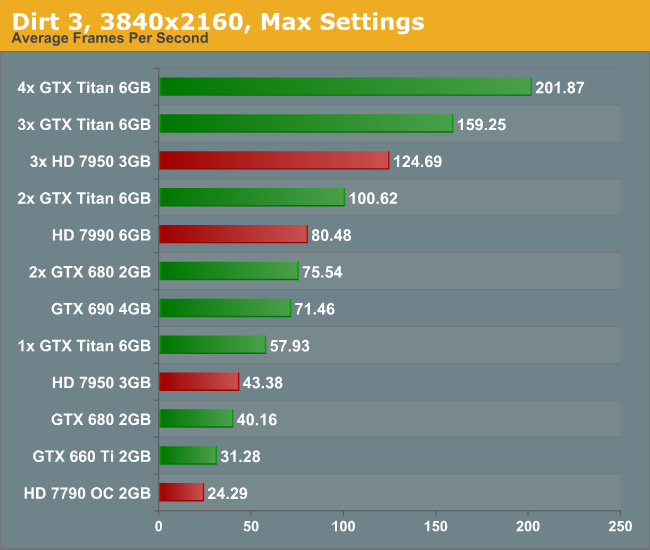

Dirt 3, Max Settings, 3840x2160:

Dirt is a title that loves MHz and GPU power, and due to the engine is quite happy to run around 60 FPS on a single Titan. Understandably this means that for almost every other card you need at least two GPUs to hit this number, more so if you have the opportunity to run 4K in 3D.

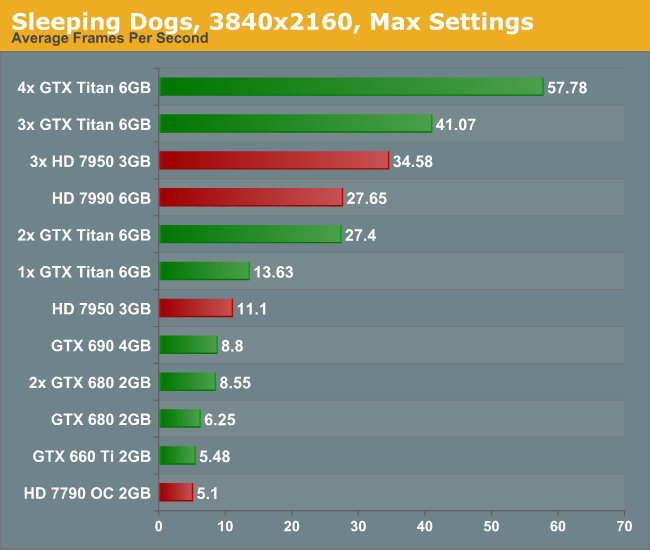

Sleeping Dogs, Max Settings, 3840x2160:

Similarly to Metro, Sleeping Dogs (with full SSAA) can bring graphics cards down to their knees. Interestingly during the benchmark some of the scenes that ran well were counterbalanced by the indoor manor scene which could run slower than 2 FPS on the more mid-range cards. In order to feel a full 60 FPS average with max SSAA, we are looking at a quad-SLI setup with GTX Titans.

Conclusion:

First of all, the minute you experience 4K with appropriate content it is worth a long double take. With a native 4K screen and a decent frame rate, it looks stunning. Although you have to sit further back to take it all in, it is fun to get up close and see just how good the image can be. The only downside with my testing (apart from some of the low frame rates) is when the realisation that you are at 30 Hz kicks in. The visual tearing of Dirt3 during high speed parts was hard to miss.

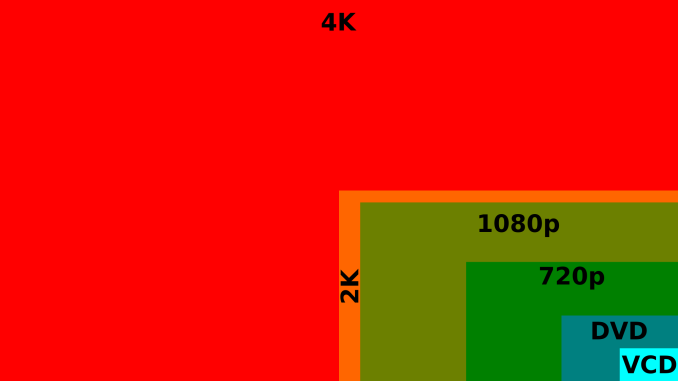

But the newer the game, and the more elaborate you wish to be with the advanced settings, then 4K is going to require horsepower and plenty of it. Once 4K monitors hit a nice price point for 60 Hz panels (sub $1500), the gamers that like to splash out on their graphics cards will start jumping on the 4K screens. I mention 60 Hz because the 30 Hz panel we were able to test on looked fairly poor in the high FPS Dirt3 scenarios, with clear tearing on the ground as the car raced through the scene. Currently users in North America can get the Seiki 50” 4K30 monitor for around $1500, and they recently announced a 39” 4K30 monitor for around $700. ASUS are releasing their 4K60 31.5” monitor later this year for around $3800 which might bring about the start of the resolution revolution, at least for the high-end prosumer space.
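The tearing on a 30 Hz panel comes down to simple arithmetic, sketched below. It assumes v-sync is off (the sync setting is not stated in this piece), and the function name is hypothetical. When the GPU renders faster than the panel refreshes, more than one frame arrives during a single refresh, so the displayed image can be stitched together from different frames.

```python
# Rendered frames arriving per panel refresh (illustrative, assumes v-sync off).

def frames_per_refresh(fps, refresh_hz):
    """Average number of rendered frames delivered during one refresh interval."""
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    return fps / refresh_hz


if __name__ == "__main__":
    # A game running at 60 FPS on the 30 Hz panel versus a 60 Hz panel.
    print(frames_per_refresh(60, 30))
    print(frames_per_refresh(60, 60))
```

At 60 FPS the 30 Hz panel receives two frames per refresh, whereas a 60 Hz panel receives one, which is consistent with the tearing being most obvious in the high frame rate Dirt 3 runs.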

All I want to predict at this point is that driving screen resolutions up will have to cause a sharp increase in graphics card performance, as well as multi-card driver compatibility. No matter the resolution, enthusiasts will want to run their games with all the eye candy, even if it takes three or four GTX Titans to get there. For the rest of us right now on our one or two mid-to-high end GPUs, we might have to wait 2-3 years for the prices of the monitors to come down and the power of mid-range GPUs to go up. These are exciting times, and we have not even touched what might happen in multiplayer. The next question is the console placement – gaming at 4K would be severely restrictive when using the equivalent of a single 7850 on a Jaguar core, even if it does have a high memory bandwidth. Roll on Playstation 5 and Xbox Two (Four?), when 4K TVs in the home might actually be a thing by 2023.

16:9 4K Comparison image from Wikipedia

134 Comments


r3loaded - Monday, July 1, 2013

That's what I was wondering too. At such a high resolution and a high enough pixel pitch, any form of anti-aliasing is just pointless. 2x SSAA is practically equivalent to running the cards at 8K, a resolution which is still a decade or two away from being seen outside the lab.

nathanddrews - Monday, July 1, 2013

While we might have to wait a little longer in the USA, NHK Japan is on track to begin 8K (Super Hi-Vision) satellite broadcasts in 2016. The codec and compression methods have been finalized, commercial equipment is being prepared for launch... 8K will be here sooner than you think. I never would have thought we'd have 4K TV/monitors for $699 already, but we do.

I've said it before, but GPU makers really need to get on the ball quick. Hopefully mainstream Volta and Pirates will be up to the task...

Vi0cT - Monday, July 1, 2013

Error, in 2016 Japan will start the trials for 8K but it's not going to be rolled out until 2020 (If they get the Olympics). You can read the official paper from the Ministry of Internal Affairs and Communications

Vi0cT - Monday, July 1, 2013

Add: The last comment may come out as hostile but there was no hostility intended :), so if you misunderstood it please excuse me.

nathanddrews - Monday, July 1, 2013

It's cool, I'm not butthurt.

Vi0cT - Monday, July 1, 2013

By 2020 Japan will start 8K Broadcast, and 4K Broadcast will start next year so it's not that far.

mapesdhs - Tuesday, July 2, 2013

"... outside the lab"; not so, many movie studios are already editing in 8K, and some are preparing the way to cope with 16K material.

Ian.

nathanddrews - Wednesday, July 3, 2013

I don't believe anyone is *editing* in 8K.

Most movies shot on film (35/70MM) pre-2000 are scanned at 2K or 4K (a select few have been scanned at 8K), then sampled down to 1080p for Blu-ray with some to 4K for the new 4K formats rolling out right now. Those movies were edited on film using traditional methods. Any CGI was composited on the film. Those movies have an advantage of always being future proof as one can always scan at a higher resolution to get more fidelity. 35MM tops out at about 8K in terms of grain "resolution", while 65/70MM is good for 16K. Results vary by film stock and quality of elements.

Most movies shot on film after the DI (digital intermediate) became mainstream had ALL of their editing (final color grading, contrast, CGI, etc.) completed in a 2K environment no matter what resolution the film was scanned. "O Brother Where Art Thou" and the "Lord of the Rings" trilogy are examples of films that will never be higher native resolution than 2K. The same goes for digitally shot movies using the RED cameras. Even though they shot at 4K or 5K, the final editing and mastering was a 2K DI. "Attack of the Clones" was shot entirely digital 1080p and will never look better than it does on Blu-ray. Only in the past couple years has a 4K DI become feasible for production.

10 years from now, the only movies that will fully realize 8K will be older movies shot and edited entirely on film or movies shot and edited in 8K digital. We'll have this gap of films between about 1998 and 2016 that will all be forever stuck at 1080p, 2K, or 4K.

purerice - Sunday, July 21, 2013

nathanddrews, thank you for your comment. I asked a relative who helps design movie theater projectors about that very issue. The answer is the industry has no answer, which you confirm. Most of the early digital films will depend on upscaling. On the plus side, in 20 years we should be ready for another LOTR remake anyway :)

Streetwind - Monday, July 1, 2013

That was the same thought that occurred to me as well. I'd love to see how the results would have been without antialiasing. As it stands I barely use it on my 1200p monitor (though admittedly a big reason for that is because of the glorified fullscreen blur filter they generally try to sell you as "antialiasing" nowadays).

Too bad Ian didn't have more time to test.