A Look At Triple-GPU Performance And Multi-GPU Scaling, Part 1

by Ryan Smith on April 3, 2011 7:00 AM EST

It’s been quite a while since we’ve looked at triple-GPU CrossFire and SLI performance, or for that matter at GPU scaling in depth. While NVIDIA in particular likes to promote multi-GPU configurations as a price-practical upgrade path, such configurations are still almost always the domain of the high-end gamer. At $700 we have the recently launched GeForce GTX 590 and Radeon HD 6990, dual-GPU cards whose existence hinges on how well games scale across multiple GPUs. Beyond that we move into the truly exotic: triple-GPU configurations using three single-GPU cards, and quad-GPU configurations using a pair of the aforementioned dual-GPU cards. If you have the money, NVIDIA and AMD will gladly sell you upwards of $1500 in video cards to maximize your gaming performance.

These days multi-GPU scaling is a given – at least to some extent. Below the price of a single high-end card our recommendation is always going to be to get a bigger card before you get more cards, as multi-GPU scaling is rarely perfect, and with cutting-edge games there’s often a lag between a game’s release and the release of a driver profile that enables multi-GPU scaling. Once we’re looking at the Radeon HD 6900 series or the GF110-based GeForce GTX 500 series, though, going faster is no longer an option, and thus we have to look at going wider.

Today we’re going to be looking at the state of GPU scaling for dual-GPU and triple-GPU configurations. While we accept that multi-GPU scaling will rarely (if ever) hit 100%, just how much performance are you getting out of that 2nd or 3rd GPU versus how much money you’ve put into it? That’s the question we’re going to try to answer today.
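That question boils down to two numbers per step: how close the added GPU gets to perfect linear scaling, and how many dollars each extra frame per second costs. Below is a minimal Python sketch, assuming you already have average frame rates for one, two and three GPUs of the same card. The `Config` type, the helper names, and every fps and price figure are hypothetical placeholders for illustration, not results from this article.

```python
from dataclasses import dataclass


@dataclass
class Config:
    gpus: int    # number of GPUs in the configuration
    fps: float   # average frame rate (hypothetical values below)
    cost: float  # total spent on video cards, in dollars


def scaling_efficiency(single_fps: float, multi_fps: float, gpus: int) -> float:
    """Fraction of perfect linear scaling: 1.0 means N GPUs give N x the single-GPU fps."""
    return multi_fps / (single_fps * gpus)


def marginal_cost_per_fps(prev: Config, nxt: Config) -> float:
    """Dollars spent per extra frame per second when stepping up from prev to nxt."""
    gained = nxt.fps - prev.fps
    if gained <= 0:
        return float("inf")  # the extra GPU bought no performance at all
    return (nxt.cost - prev.cost) / gained


# Hypothetical inputs purely for illustration; these are NOT benchmark results.
configs = [Config(1, 40.0, 500.0), Config(2, 76.0, 1000.0), Config(3, 96.0, 1500.0)]

base = configs[0]
for prev, nxt in zip(configs, configs[1:]):
    eff = scaling_efficiency(base.fps, nxt.fps, nxt.gpus)
    print(f"{nxt.gpus} GPUs: {eff:.0%} of linear scaling, "
          f"${marginal_cost_per_fps(prev, nxt):.2f} per extra fps")
```

With these made-up numbers the second GPU reaches 95% of linear scaling at about $13.89 per extra fps, while the third reaches only 80% at $25.00 per extra fps: the same card gets more expensive per frame the wider you go, which is exactly the tradeoff being weighed here.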

From the perspective of a GPU review, we find ourselves in an interesting situation in the high-end market right now. AMD and NVIDIA just finished their major pushes for this high-end generation, but the CPU market is not in sync. In January Intel launched their next-generation Sandy Bridge architecture, but unlike the past launches of Nehalem and Conroe, the high-end market was initially passed over. For $330 we can get a Core i7 2600K and crank it up to 4GHz or more, but the platform we get to pair it with is lacking.

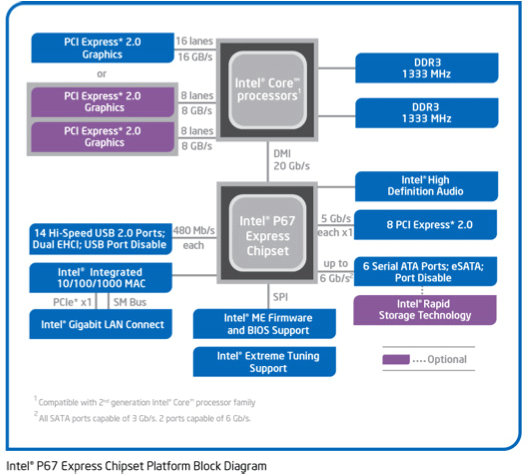

Sandy Bridge only supports a single PCIe x16 link coming from the CPU: an awesome CPU is being held back by a limited amount of off-chip connectivity, namely DMI and that single x16 link. For two GPUs we can split the link into x8 and x8, which shouldn’t be too bad. But what about three GPUs? With PCIe bridges we can mitigate the issue somewhat by allowing the GPUs to talk to each other at x16 speeds and by dynamically allocating CPU-to-GPU bandwidth based on need, but at the end of the day we’re splitting a single x16 link across three GPUs.
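To put those lane counts in perspective, here is a back-of-the-envelope Python sketch. It assumes PCIe 2.0 signalling (5 GT/s per lane with 8b/10b encoding, which works out to 500MB/s per lane in each direction) and a worst case in which all three GPUs behind a bridge want the CPU link at the same moment and split it evenly. Real traffic is burstier, so treat the shared figure as a floor rather than a prediction; the function names are illustrative only.

```python
# PCIe 2.0 signalling: 5 GT/s per lane, 8b/10b line coding (8 data bits per 10 sent).
TRANSFERS_PER_S = 5_000_000_000


def lane_bandwidth_gb_s() -> float:
    """Usable bandwidth of one PCIe 2.0 lane, one direction, in decimal GB/s."""
    data_bits_per_s = TRANSFERS_PER_S * 8 / 10  # strip the 8b/10b coding overhead
    return data_bits_per_s / 8 / 1e9            # bits -> bytes -> GB


def link_bandwidth_gb_s(lanes: int) -> float:
    """Bandwidth of an xN link in one direction."""
    return lanes * lane_bandwidth_gb_s()


def shared_uplink_per_gpu(uplink_lanes: int, gpus: int) -> float:
    """Worst case behind a bridge: every GPU hits the CPU link at once, split evenly."""
    return link_bandwidth_gb_s(uplink_lanes) / gpus


print(f"x16 link:           {link_bandwidth_gb_s(16):.1f} GB/s")       # 8.0 GB/s
print(f"x8 link:            {link_bandwidth_gb_s(8):.1f} GB/s")        # 4.0 GB/s
print(f"3 GPUs sharing x16: {shared_uplink_per_gpu(16, 3):.2f} GB/s")  # 2.67 GB/s each
```

Even in that worst case each card keeps about two thirds of what a dedicated x8 link provides, which is why the dual-GPU x8/x8 split is considered tolerable while the triple-GPU case remains the open question.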

The alternative is to take a step back and work with Nehalem and the X58 chipset. Here we have 32 PCIe lanes to work with, doubling the amount of CPU-to-GPU bandwidth, but the tradeoff is the CPU. Gulftown and Nehalem are capable chips on their own, but clock-for-clock the Nehalem architecture is normally slower than Sandy Bridge, and neither chip can clock quite as high on average. Gulftown does offer more cores, 6 versus 4, but very few games are held back by the number of cores. Instead the ideal configuration is one that maximizes the performance of a few cores.

Later this year Sandy Bridge E will correct this by offering a Sandy Bridge platform with more memory channels, more PCIe lanes, and more cores; the best of both worlds. Until then it comes down to choosing between two platforms: a faster CPU or more PCIe bandwidth. For dual-GPU configurations this should be an easy choice, but for triple-GPU configurations it’s not quite as clear cut. For now we’re going to be looking at the latter by testing on our trusty Nehalem + X58 testbed, which largely eliminates the bandwidth bottleneck at the cost of a potential CPU bottleneck.

Moving on, today we’ll be looking at multi-GPU performance under dual-GPU and triple-GPU configurations; quad-GPU will have to wait. Normally we only have two reference-style cards of any product on hand, so we’d like to thank Zotac and PowerColor for providing a reference-style GTX 580 and Radeon HD 6970 respectively.

97 Comments


Sabresiberian - Tuesday, April 5, 2011

I've been thinking for quite a while that we need something different, and this is the primary reason why: I can't get everything I want installed on any ATX mainboard I know of. ;)

Sabresiberian - Tuesday, April 5, 2011

I've always thought minimum frame rate is where the focus should be in graphics card tests (when looking at the frame rate performance aspect), instead of the average. It's the minimum frame rate that bothers people or even makes a game unplayable. Thanks! ;)

mapesdhs - Wednesday, April 6, 2011

I hate to say it, but with the CPU at only 3.33GHz the results don't really mean that much. I know the 920 used can't go higher, but it just seems a bit pointless to do all these tests when the results can't really be used as the basis for a purchasing decision because of a very probable CPU bottleneck. Surely it would have been sensible for an article like this to replace the 920 with a 950 and redo the OC to 4GHz+. The 950 is good value now as well. Or even the entry-level 6-core.

Re slot spacing, perhaps if one insists on using P67 it can be hard to sort that out, but there *are* X58 boards which provide what one needs, e.g. the ASRock X58 Extreme6 does have double-slot spacing between each PCIe slot, so 3 dual-slot cards would have a fully empty slot between each card for better cooling. Do other vendors make a board like this? I couldn't find one after a quick check on the Gigabyte or ASUS sites. The only downside is that with all 3 slots used the Extreme6 operates slots 2 and 3 at 8x/8x; for many games this isn't an issue (it depends on the game), but I'm sure some would moan nonetheless.

It would be interesting to know how that would compare, though, i.e. a 4GHz 950 on an Extreme6 for these tests.

Unless I missed it somehow, I'm a tad surprised Gigabyte don't make an X58 board with this type of slot spacing, or do they?

Ian.

xAlex79 - Thursday, April 14, 2011

I am a bit disappointed, Ryan, in the way you put your conclusions. At the start of the article you highlight how you are going to look at TriFire and Tri-SLI and compare how they do for the value.

Yet in your conclusion there isn't a single mention of value, or even scores adjusted for it. And that makes NVIDIA look a lot better than it should. It is as if you completely forgot that three 580s cost you $1500 and three 6970s cost you $900.

Based on that, and the fact that YOU stated you would take value into account (and personally I think posting any kind of review without value nowadays is just irresponsible and biased), I am very disappointed with an otherwise very good set of tests.

I also understand that this is labeled "Part 1" and that value might come in "Part 2", but you should have CLEARLY outlined that in your conclusion if that were the case. And given the quality of reviews that we have come to expect from AnandTech, the final numbers should ALWAYS include a value perspective.

I will just point out that it is poor form and not very professional, and that in the end the people you should care about are us, your readers, not how you look or try to look for hardware manufacturers. If this was a mistake, you should correct it ASAP. It does not make you look good.

L1qu1d - Friday, April 15, 2011

I wonder why they didn't opt for the 270.51 drivers and went with 3-month-old drivers instead? Compared to the tested drivers:

GeForce GTX 580:

Up to 516% in Dragon Age 2 (SLI 2560x1600 8xAA/16xAF Very High, SSAO on)

Up to 326% in Dragon Age 2 (1920x1200 8xAA/16xAF Very High, SSAO on)

Up to 11% in Just Cause 2 (1920x1200 8xAA/16xAF, Concrete Jungle)

Up to 11% in Just Cause 2 (SLI 2560x1600 8xAA/16xAF, Concrete Jungle)

Up to 7% in Civilization V (1920x1200 4xAA/16xAF, Max settings)

Up to 6% in Far Cry 2 (SLI 2560x1600 8xAA/16xAF, Max settings)

Up to 5% in Civilization V (SLI 1920x1200 8xAA/16xAF, Max settings)

Up to 5% in Left 4 Dead 2 (1920x1200 noAA/AF, Outdoor)

Up to 5% in Left 4 Dead 2 (SLI 2560x1600 4xAA/16xAF, Outdoor)

Up to 4% in H.A.W.X. 2 (SLI 1920x1200 8xAA/16xAF, Max settings)

Up to 4% in Mafia 2 (SLI 2560x1600 AA on/16xAF, PhysX = High)

Fony - Thursday, April 28, 2011

Taking forever for the Eyefinity/Surround testing.

vipergod2000 - Thursday, May 5, 2011

The one thing that irks me is that the i7-920 was OCed to only ~3.3GHz, greatly reducing the scaling of 3 cards compared to other forum users who have 3 or 4 cards in CFX or SLI with fantastic scaling, albeit coupled with an i7-2600K at 5GHz minimum or a 980X/990X at 4.6GHz+.