Intel Plans on Bringing Atom to Servers in 2012, 20W SNB Xeons in 2011

by Anand Lal Shimpi on March 15, 2011 2:35 PM EST

Posted in: IT Computing, CPUs, Intel, Atom, Xeon

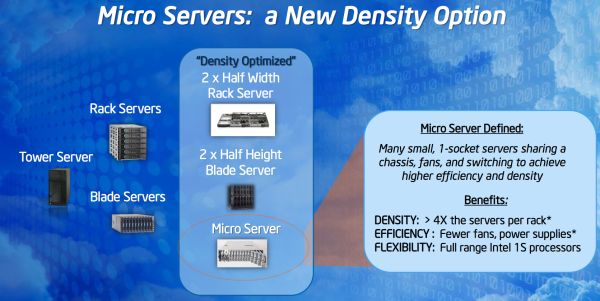

The transition to smaller form factors hasn't been exclusively a client trend over the past several years; we've seen a similar move in servers. The motivation is very different, however. In the client space it's about portability; in the datacenter it's about density. While faster multi-core CPUs have allowed the two-socket 1U server to really take off, they have also paved the way for a new category of density optimized servers: the micro server.

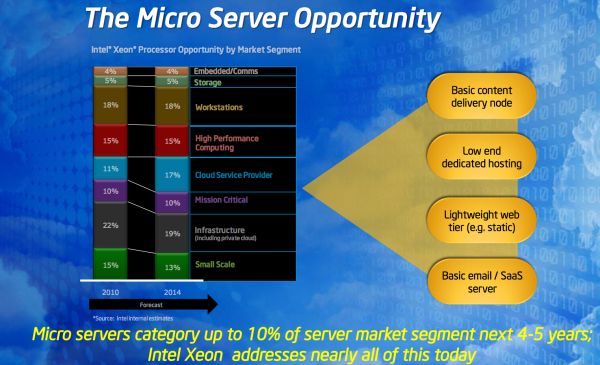

The argument for micro servers is similar to that for ultra low power clients. Only a certain portion of workloads really require high-end multi-socket servers; the rest spend much of their time idle and thus are better addressed by lower power, higher density servers. Johan typically argues that rather than tackling the problem with micro servers, it's a better idea to simply increase your consolidation ratio into fewer, larger servers. There are obviously proponents on both sides of the fence, but Intel estimates that the total market for micro servers will reach about 10% of its total shipments over the next 4 - 5 years. It's a small enough market for Intel not to be super concerned about but large enough that it needs to be properly addressed.

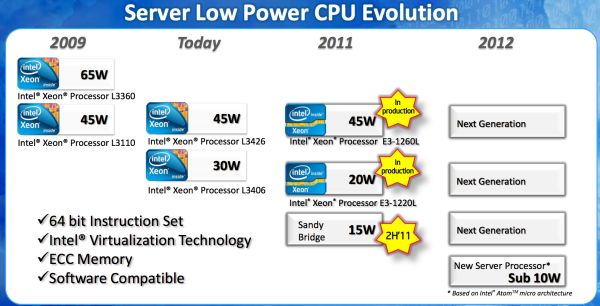

Today Intel believes that it addresses this market relatively well with the existing Xeon lineup. Below is a table of Sandy Bridge Xeons including a 45W and 20W part, these two being directed primarily at the micro server market:

Intel SNB Xeon Lineup

| Intel Xeon Processor Number | Cores / Threads | Clock Speed | Single Core Max Turbo | L3 Cache | Memory Support (Channels / DIMMs / Max Capacity) | Power (TDP) |
| --- | --- | --- | --- | --- | --- | --- |
| E3-1280 | 4 / 8 | 3.50GHz | 3.90GHz | 8MB | 2 / 4 / 32GB | 95W |
| E3-1270 | 4 / 8 | 3.40GHz | 3.80GHz | 8MB | 2 / 4 / 32GB | 80W |
| E3-1260L | 4 / 8 | 2.40GHz | 3.30GHz | 8MB | 2 / 4 / 32GB | 45W |
| E3-1240 | 4 / 8 | 3.30GHz | 3.70GHz | 8MB | 2 / 4 / 32GB | 80W |
| E3-1230 | 4 / 8 | 3.20GHz | 3.60GHz | 8MB | 2 / 4 / 32GB | 80W |
| E3-1220L | 2 / 4 | 2.20GHz | 3.40GHz | 3MB | 2 / 4 / 32GB | 20W |
| E3-1220 | 4 / 4 | 3.10GHz | 3.40GHz | 8MB | 2 / 4 / 32GB | 80W |

Drop clock speed (and voltage) low enough and you can hit the lower TDPs necessary to fit into a micro server. Thermal constraints are present since you're often cramming a dozen of these servers into a very small area.
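To get a rough feel for why dropping clock speed and voltage together cuts power so sharply, here is a minimal sketch using the textbook first-order CMOS relation, where dynamic power scales with capacitance times voltage squared times frequency. Treat it as an illustration only: it assumes switched capacitance stays constant, it ignores leakage, and TDP is a design envelope rather than measured power. The function name and the voltage ratios are hypothetical; only the two clock speeds come from the table above.

```python
def relative_dynamic_power(freq_ratio: float, voltage_ratio: float) -> float:
    """Dynamic power relative to a baseline, assuming P ~ C * V^2 * f.

    Both arguments are ratios against the baseline part (1.0 = unchanged).
    Switched capacitance C is assumed constant and leakage is ignored.
    """
    if freq_ratio <= 0 or voltage_ratio <= 0:
        raise ValueError("ratios must be positive")
    return freq_ratio * voltage_ratio ** 2


if __name__ == "__main__":
    # Clock ratio from the table: E3-1260L (2.40GHz) vs. E3-1280 (3.50GHz).
    freq_ratio = 2.40 / 3.50
    # Hypothetical voltage ratios; the table does not list voltages.
    for voltage_ratio in (1.00, 0.90, 0.80):
        scale = relative_dynamic_power(freq_ratio, voltage_ratio)
        print(f"voltage x{voltage_ratio:.2f} -> dynamic power x{scale:.2f}")
```

Because voltage enters squared, even a modest voltage reduction on top of the lower clock pulls estimated power down much faster than the clock reduction alone would.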

Long term there is a bigger strategy issue that has to be addressed. ARM has been talking about moving up the pyramid and eventually tackling the low end/low power server market with its architectures. While Xeon can scale down, it can't scale down to the single digit TDPs without serious performance consequences. Remember the old rule of thumb: a single microprocessor architecture can only address an order of magnitude of TDPs. Sandy Bridge can handle the 15 - 150W space, but get too much below 15W and it becomes a suboptimal choice for power/performance.
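The rule of thumb above can be written down as a quick check. The sketch below is only an illustration: the 15 - 150W bounds are the ones quoted in the text, the helper names are hypothetical, and the 9W value is a made-up stand-in for a "sub-10W" part rather than a real product figure.

```python
import math


def tdp_span_in_decades(low_watts: float, high_watts: float) -> float:
    """How many orders of magnitude a TDP range covers."""
    return math.log10(high_watts / low_watts)


def within_sweet_spot(tdp_watts: float, low_watts: float = 15.0,
                      high_watts: float = 150.0) -> bool:
    """True if a TDP falls inside the range quoted for Sandy Bridge."""
    return low_watts <= tdp_watts <= high_watts


if __name__ == "__main__":
    print(f"15W to 150W spans {tdp_span_in_decades(15, 150):.1f} decade(s)")
    # 95W, 45W and 20W come from the Xeon table; 9W is a hypothetical sub-10W part.
    for tdp in (95, 45, 20, 9):
        verdict = "inside" if within_sweet_spot(tdp) else "outside"
        print(f"{tdp}W is {verdict} the 15 - 150W range")
```

Under these assumptions even the 20W E3-1220L sits near the bottom of the range, which is the gap a sub-10W Atom server part is meant to fill.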

The solution? Introduce a server CPU based on Intel's Atom architecture. And this is the bigger part of the announcement today. Starting in 2012 Intel will have an Atom based low power server CPU with sub-10W TDPs designed for this market. Make no mistake, this move is designed to combat what ARM is planning. And unlike the ultra mobile space, Intel has an ISA advantage in the enterprise market. It'll be tougher for ARM to move up than it will be for Intel to move down.

Intel's slide above seems to imply that we'll have ECC support with this server version of Atom in 2012, which is something current Atom based servers lack.

The only real question that remains is which Atom architecture will be used. We'll see an updated 32nm Atom by the end of 2011, but that's still fundamentally using the same Bonnell core that was introduced back in 2008. Intel originally committed to keeping its in-order architecture for 5 years back in 2008, which would mean that 2012 is ripe for the introduction of an out-of-order Atom. Whether or not that updated core will make it in time for use in Atom servers is still up for debate.

53 Comments


duploxxx - Wednesday, March 16, 2011

duh, it also has a GPU, remember?

Atom in its current design with an in-order architecture is useless; many benchmarks have already shown that Brazos is a much better chip for that. Unless of course Intel totally redesigns the Atom.

marc1000 - Tuesday, March 15, 2011

I don't believe extreme low-power servers will be successful enough... it is way better to have only 1 or 2 "medium" servers and virtualize small servers inside those boxes.

Think about replacing 1 CPU or memory in a really high density server... the current blade servers are already crammed enough.

Johnmcl7 - Tuesday, March 15, 2011

That was my initial thought, but there are other uses for servers where I think microservers would work well; one example I've seen is within manufacturing equipment. In my experience there seems to be increasing use of PC equipment (rather than dedicated PLC equipment), and servers are chosen for uptime/reliability. However, these servers are frequently overpowered, so a system using a low power processor in a server system could be appealing.

John

Sam125 - Tuesday, March 15, 2011

That makes sense, but going with ultra dense micro servers seems like overkill for equipment that doesn't require much computing power to begin with. Of course I would be a fool to doubt Intel's ability to size up a market, but I'm having a hard time picturing where an ultra dense microserver makes a better choice than what's already out on the market.

mino - Tuesday, March 15, 2011

For certain markets, isolation is key. And the cheapest way to achieve _undisputable-by-every-second-idiot_ isolation is by going the microserver way.

L. - Wednesday, March 16, 2011

I doubt that will have any impact on "every-second-idiot", as managing many micro-servers will be done using a micro-server management tool, with which you'll be able to make as many big mistakes as with a virtualization tool (or almost).

Dense microservers are useless.

In the sense that, if you find it funny to put 2 cores on a micro-die, it is still way more interesting to put 36 cores on a normal sized die, with all the benefits and cost cutting that implies (shared mem controller etc.).

Also virtualization has brought a lot of manageability and I don't see that becoming useless because there is another power efficiency point of interest in "micro-servers".

Besides, any "spike" load on those atoms and you'll see how useless they can be.

Really, I can see 36 low-power cores on a die, but 2 low-power cores on a die IN a datacenter ? what for ... if you're in a datacenter you need 36+ of them anyway and you'll definitely prefer the manageability increase and the lower power requirement of a single die 36 core toy over 18* the even lower power req. of those dual core mini-cpus.

Also one must realize that splitting one 36 core box (assuming somebody sells a 36 low-power core die, which would NOT have more computing power than the current multi-core AMD cpu and would surely not use more power) in 18 2 core boxes means you have 18 power supplies to plug, 18 damn network cables adding to the spaghetti dish of the day, etc.

Not even talking about RAM yet, because if you're going to put something that's equal to 1/18th of a real server CPU in its own box, it would be only right to give it like 1 Gig of RAM ...

Management costs up, maintenance costs up, complexity up, price up ... yes you get hardware-level isolation and potentially extremely cheap fully redundant solutions (like 2 4-socket atom boards in a 1U chassis with integrated load-balancing woo ..) but that still does not make any sense to anyone who uses a datacenter quarter rack or more.

Obviously some people will buy these, because Intel said so, but I think it's clear atom-like C/A PU's are just good for low-power stuff, like home file server, and the stuff mfeller2 mentions just down here. - And for the poor who need full h/w redundancy or those who don't like virtualization's manageability (and the every-second-idiot effect).

casket - Wednesday, March 16, 2011

I completely agree. Microservers are crap. If you have smaller cores that use less power, like an Atom... you can stick lots of them together. There is no need for a deep pipeline... if you have 100 cores. 36 cores would be great... but we're going to have to wait a little bit. 16-core Atoms are coming, though.

Microsoft Pressing Intel for 16-Core Atom

http://www.tomshardware.com/news/16-core-Atom-SoC-...

marc1000 - Wednesday, March 16, 2011

1 CPU with 16 small-cores that has the same performance as 1 CPU with 4 big-cores?

I don't believe the power savings will be THAT great; once you start to put more CPUs on the same die, the power needed goes up.

anyway, this defeats the "isolated server" idea.

johnstalberg@yahoo.se - Thursday, March 17, 2011

I think you're missing the point here with the 2 * 16 = 1 * 32 logic.

Forget about Intel for a moment and think about where these CPUs come from. The ARMs typically run our smartphones' software, and as such battery savings has been the holy grail when producing them. Sure, a conscious target against a dedicated server market could erode some of this, but on the other hand it isn't absolutely a must. Low power footprint as an isolated parameter has an ideal value of 0 in any use case but has different importance in the many different tasks.

Computer centers are becoming more and more burdened by power costs, and lowering power usage gets more and more important, so this kind of server chip and ultra mobility chips become a match. One could believe you would be heavily constrained by raw physics and get the simple proportionality you describe, but as it is today, the ARM chips have an efficiency advantage while at the same time not being too poor to be usable for a lot of tasks.

Had the bigger iron just been bigger, your analysis would be perfectly valid. As it is now, you seem to forget the proportional differences in power usage.

Now get back to Intel and the Atom and think about why this is the best candidate. Well, it is their low power chip, but it also shares some or a lot of the ARM battery saving mantra. That can make it do better than any 2 * 16 = 1 * 32 logic will show. Xeon has not been made with an overall battery savings agenda covering the whole architecture project from day one until production as of now!

ajcarroll - Wednesday, March 16, 2011

L, while I think your points are valid, it's important to note that micro servers are a different form factor to blades. They're not just dropping a low power CPU into a blade, but rather putting it into a half width / half height box, so that you can fit 4x into the same rack. Plus they plan to share power supplies... So while I think you've raised valid points, it really boils down to whether or not they address the issues you raise. If they don't address the issues, then the technology is indeed worthless; if, however, they do succeed in producing something that is competitive in some applications, in terms of computing power vs. power consumption/physical space, then they will succeed in those markets. My gut feel is that 5 years from now, microservers will indeed have established themselves as a legit technology that is the best choice for some applications, while utilizing virtualization on more powerful boxes will be the best choice in other situations.